AI’s structural risks

What could go wrong

Introduction

Artificial intelligence currently sits at the centre of global technological discourse. Venture capital, corporate strategy, media commentary, and academic research are increasingly aligned around the idea that AI will reshape nearly every aspect of modern life. Conferences, podcasts, and investor briefings often portray AI as an unstoppable force approaching a moment of singular transformation. According to these narratives, radical societal change is not a question of if, but only of when.

Yet the everyday reality outside technology circles appears far less dramatic. Most industries still operate largely as they did a decade ago, with incremental digital improvements rather than sweeping AI-driven revolutions. While generative AI tools have certainly expanded capabilities in areas such as programming, content generation, and data analysis, the broader structural transformation of the economy remains incomplete. This gap between expectation and observable impact invites a deeper examination of the potential constraints and risks facing the AI sector.

Let’s consider what could go wrong.

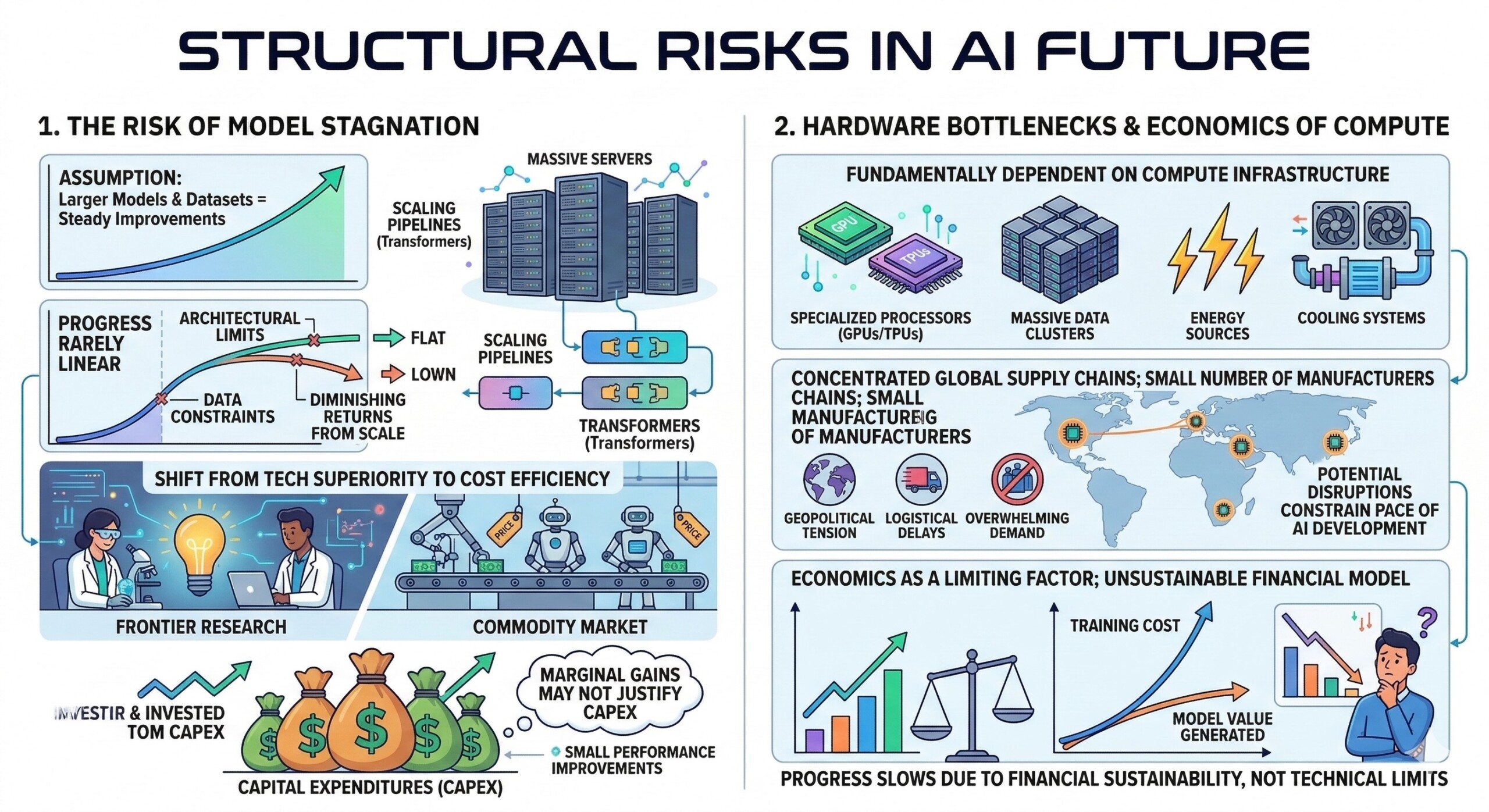

1. The risk of model stagnation

The current AI boom is built on a powerful assumption: that increasingly large models and datasets will continue to produce steady improvements in capability. This assumption has largely held true over the past decade, particularly with the rise of transformer-based architectures and large-scale training pipelines.

However, technological progress rarely follows a perfectly linear trajectory. If improvements in model performance begin to slow, whether because of architectural limits, data constraints, or diminishing returns from scale, the competitive environment could shift dramatically.

In that case, companies that have invested tens of billions of dollars in training infrastructure may find themselves competing primarily on cost efficiency rather than on technological superiority. The industry could evolve into a commodity market where marginal gains in performance no longer justify the immense capital expenditures required for frontier research.
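
To make "diminishing returns from scale" concrete, the Python sketch below assumes that model loss falls as a power law in training compute, loss = A * compute ** -ALPHA. The constants A and ALPHA are hypothetical values chosen for illustration, not measurements of any real model. Under this assumption, every tenfold increase in compute buys a smaller absolute reduction in loss than the one before it.

```python
# Hypothetical power-law link between training compute and model loss.
# A and ALPHA are illustrative assumptions, not fitted to any real model.
A = 10.0      # hypothetical scale constant
ALPHA = 0.05  # hypothetical scaling exponent


def loss(compute: float) -> float:
    """Loss under the assumed power law: A * compute ** -ALPHA."""
    return A * compute ** -ALPHA


def gains_per_tenfold(steps: int) -> list:
    """Absolute loss reduction bought by each successive 10x increase in compute."""
    gains = []
    for k in range(steps):
        gains.append(loss(10.0 ** k) - loss(10.0 ** (k + 1)))
    return gains


if __name__ == "__main__":
    for k, gain in enumerate(gains_per_tenfold(5)):
        print(f"compute 10^{k} -> 10^{k + 1}: loss falls by {gain:.3f}")
```

Each step requires ten times more compute, while the printed gains shrink by a constant factor of 10 ** -ALPHA (about 0.89 with these assumed values). Whether real frontier models keep following such a curve, or flatten even faster, is precisely the uncertainty this section describes.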

2. Hardware bottlenecks and the economics of compute

AI development is fundamentally dependent on computing infrastructure. Training large models requires enormous clusters of specialized processors, along with massive energy consumption and advanced cooling systems. Global supply chains for these chips remain concentrated in a small number of manufacturers. Any disruption, whether geopolitical, logistical, or driven by overwhelming demand, could significantly constrain the pace of AI development.

The economics of compute may also become a limiting factor in its own right. If the cost of training new models continues to grow faster than the value those models generate, companies may face declining returns on investment. Under such circumstances, progress could slow not because of technical limits, but because the financial model becomes unsustainable.
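
The idea of costs outgrowing value can be illustrated with simple compounding. The Python sketch below assumes, purely hypothetically, that each new model generation costs three times as much to train as the previous one while the value it generates only doubles. The starting figures are arbitrary units, not estimates for any real company.

```python
from typing import List, Optional

# Illustrative sketch: cost and value both compound per model generation,
# but cost compounds faster. Every number here is a hypothetical assumption.
COST_GROWTH = 3.0   # assumed training-cost multiplier per generation
VALUE_GROWTH = 2.0  # assumed value multiplier per generation


def roi_by_generation(cost0: float, value0: float, generations: int) -> List[float]:
    """Return the return on investment, (value - cost) / cost, for each generation."""
    rois = []
    cost, value = cost0, value0
    for _ in range(generations):
        rois.append((value - cost) / cost)
        cost *= COST_GROWTH
        value *= VALUE_GROWTH
    return rois


def first_unprofitable(rois: List[float]) -> Optional[int]:
    """Index of the first generation whose value no longer covers its cost."""
    for generation, roi in enumerate(rois):
        if roi < 0:
            return generation
    return None


if __name__ == "__main__":
    rois = roi_by_generation(cost0=1.0, value0=4.0, generations=6)
    for generation, roi in enumerate(rois):
        print(f"generation {generation}: ROI {roi:+.2f}")
    print("first unprofitable generation:", first_unprofitable(rois))
```

With these assumed figures the first generation returns three times its cost, yet the return falls with every generation and turns negative at generation 4 (counting from 0). No technical limit is involved; the slowdown comes entirely from the assumed gap between the two growth rates.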

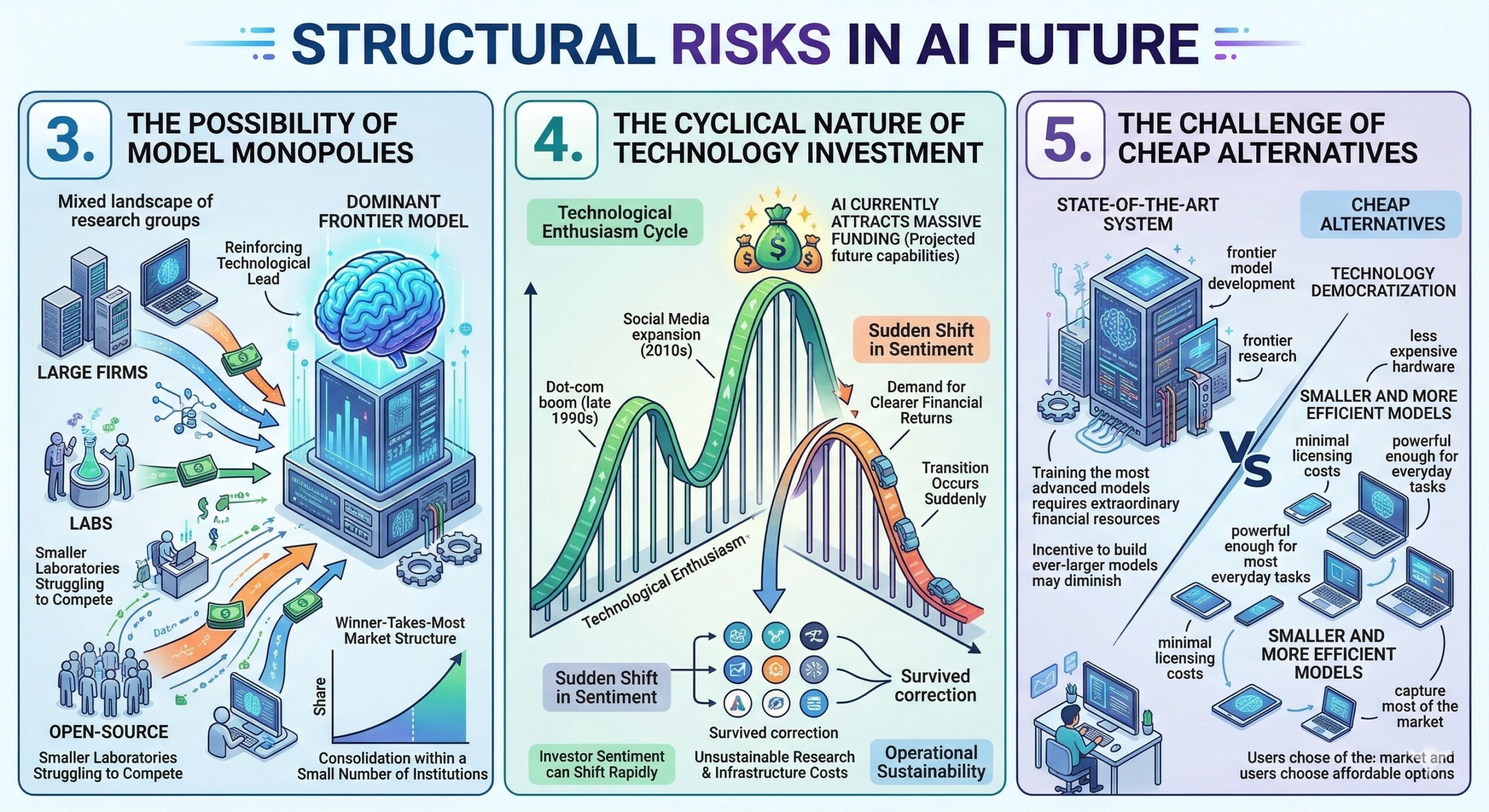

3. The possibility of model monopolies

AI research today is conducted by a mixture of large technology firms, independent research labs, and open-source communities. While this ecosystem appears competitive, it also contains the potential for extreme concentration. If a single organization develops a model that significantly outperforms all competitors, it could attract the majority of customers and revenue. That revenue could then be reinvested into even larger training runs, reinforcing the organization’s technological lead.

This dynamic resembles a classic winner-takes-most market structure, where a dominant player accumulates both capital and data advantages. In such an environment, smaller laboratories could struggle to remain competitive, leading to consolidation of AI development within a small number of institutions.
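
The reinforcing loop described in this section can be sketched as a toy simulation. The rules are assumptions made for illustration: each lab's market share is proportional to its capability raised to a hypothetical SENSITIVITY exponent, and a fixed fraction of each period's revenue is turned into additional capability. None of the parameters are estimates of the real AI market.

```python
from typing import List

# Toy model of winner-takes-most dynamics. The share rule and every parameter
# below are hypothetical assumptions chosen for illustration.
SENSITIVITY = 2.0  # >1 means customers disproportionately favour the stronger model
REINVEST = 0.5     # assumed fraction of revenue converted into capability gains
MARKET = 10.0      # total revenue available per period, in arbitrary units


def market_shares(capabilities: List[float]) -> List[float]:
    """Split the market in proportion to capability ** SENSITIVITY."""
    weights = [c ** SENSITIVITY for c in capabilities]
    total = sum(weights)
    return [w / total for w in weights]


def simulate(capabilities: List[float], periods: int) -> List[List[float]]:
    """Return the market shares in each period as revenue is reinvested."""
    caps = list(capabilities)
    history = []
    for _ in range(periods):
        shares = market_shares(caps)
        history.append(shares)
        # Each lab turns its share of revenue into extra capability.
        caps = [c + REINVEST * s * MARKET for c, s in zip(caps, shares)]
    return history


if __name__ == "__main__":
    # Lab 0 starts with only a 10% capability edge over two equal rivals.
    history = simulate([1.1, 1.0, 1.0], periods=20)
    for period in (0, 5, 10, 19):
        print(f"period {period}: leader share {history[period][0]:.2f}")
```

In this model the leader's share rises every period as long as SENSITIVITY is above 1. With SENSITIVITY set to exactly 1, every lab's capability grows by the same factor each period, so market shares stay constant and the initial edge is preserved rather than amplified. The lock-in shown here therefore rests entirely on the assumption that customers favour the better model more than proportionally.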

4. The cyclical nature of technology investment

Technological enthusiasm tends to follow cycles. The dot-com boom of the late 1990s and the social media expansion of the 2010s both demonstrated how quickly investor sentiment can shift once expectations of rapid growth begin to fade.

AI companies currently attract massive funding based largely on projected future capabilities rather than present-day profitability. At some point, investors may begin demanding clearer financial returns rather than continued expansion.

If that transition occurs suddenly, some organizations may find themselves unable to sustain the enormous research and infrastructure costs associated with cutting-edge AI development. The result could resemble previous technology corrections in which only a subset of companies survive the shift from speculative investment to operational sustainability.

5. The challenge of cheap alternatives

Another potential disruption comes from the opposite direction: not technological stagnation, but technological democratization.

In recent years, smaller and more efficient models have become increasingly capable. These models can run on less expensive hardware and often have minimal licensing costs. If they become sufficiently powerful for most everyday tasks, many users may see little reason to pay premium subscription fees for state-of-the-art systems.

This scenario could weaken the economic foundation of frontier model development. Training the most advanced models requires extraordinary financial resources, and those investments depend on large-scale revenue streams. If cheaper alternatives capture most of the market, the incentive to build ever-larger models may diminish.

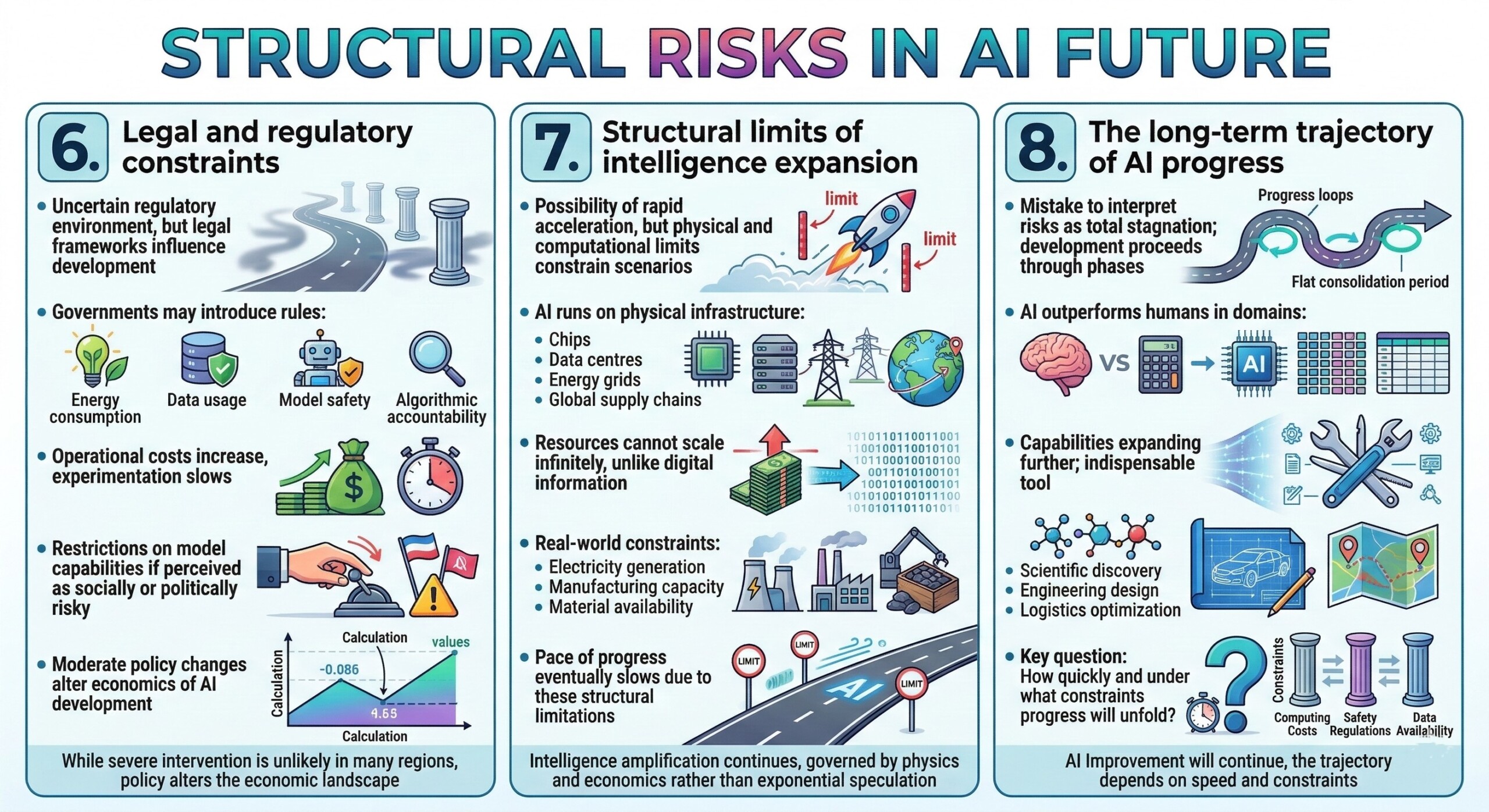

6. Legal and regulatory constraints

The regulatory environment surrounding AI remains uncertain, but legal frameworks could still shape the direction of development.

Governments may introduce rules related to energy consumption, data usage, model safety, or algorithmic accountability. Some of these regulations could increase operational costs or slow experimentation. Others might impose restrictions on model capabilities if governments perceive certain applications as socially or politically risky.

While severe regulatory intervention remains unlikely in many regions, even moderate policy changes could alter the economics of AI development in significant ways.

7. Structural limits of intelligence expansion

Much of the excitement surrounding AI stems from the possibility of a rapid acceleration toward superintelligence. Yet physical and computational limits may constrain such scenarios.

AI systems ultimately run on physical infrastructure: chips, data centres, energy grids, and global supply chains. Unlike software, which can be copied at almost no cost, these resources cannot be scaled indefinitely. Electricity generation, manufacturing capacity, and material availability impose real-world constraints on computational expansion.

Even if AI systems become increasingly capable, the pace of progress may eventually slow due to these structural limitations. Intelligence amplification may continue, but at a rate governed by physics and economics rather than exponential speculation.

8. The long-term trajectory of AI progress

Despite these risks, it would be a mistake to interpret them as evidence that AI will stagnate entirely. Historically, technological development tends to proceed through alternating phases of rapid progress and consolidation.

AI systems already outperform humans in several domains, including large-scale calculation, pattern recognition, and data processing. Future models will almost certainly expand these capabilities further. In many areas, such as scientific discovery, engineering design, and logistics optimization, AI will likely become an indispensable tool.

The key question is not whether AI will continue improving, but how quickly and under what constraints that progress will unfold.

Summary

The most likely future lies somewhere between the extremes of boundless optimism and technological pessimism. AI will almost certainly continue expanding human capability across numerous fields. But its progress will not occur in isolation from the economic, physical, and institutional structures that support it. Understanding those constraints may be just as important as celebrating the technology’s remarkable achievements.