Formal Methods and Verification in AI

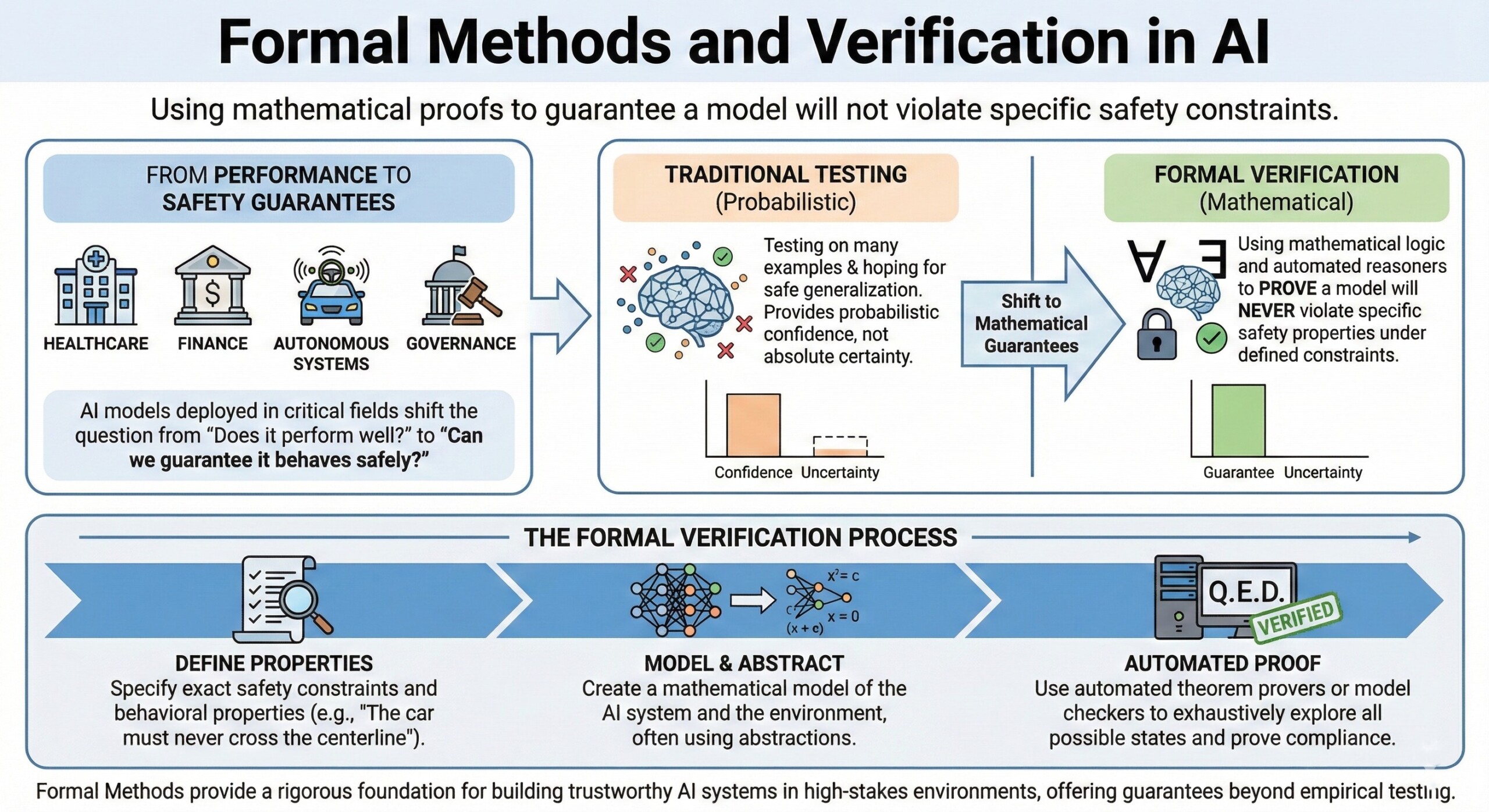

Using mathematical proofs to guarantee a model will not violate specific safety constraints

Designing powerful AI systems is no longer enough. As AI models are deployed in healthcare, finance, autonomous systems, and governance, the critical question shifts from “Does it perform well?” to “Can we guarantee it behaves safely under all conditions?”

Formal Methods and Verification bring mathematical rigor to AI development. Instead of testing models on many examples and hoping they generalize safely, formal verification attempts to prove that a model will never violate specific safety properties under defined constraints.

This represents a shift from probabilistic confidence to mathematical guarantees.

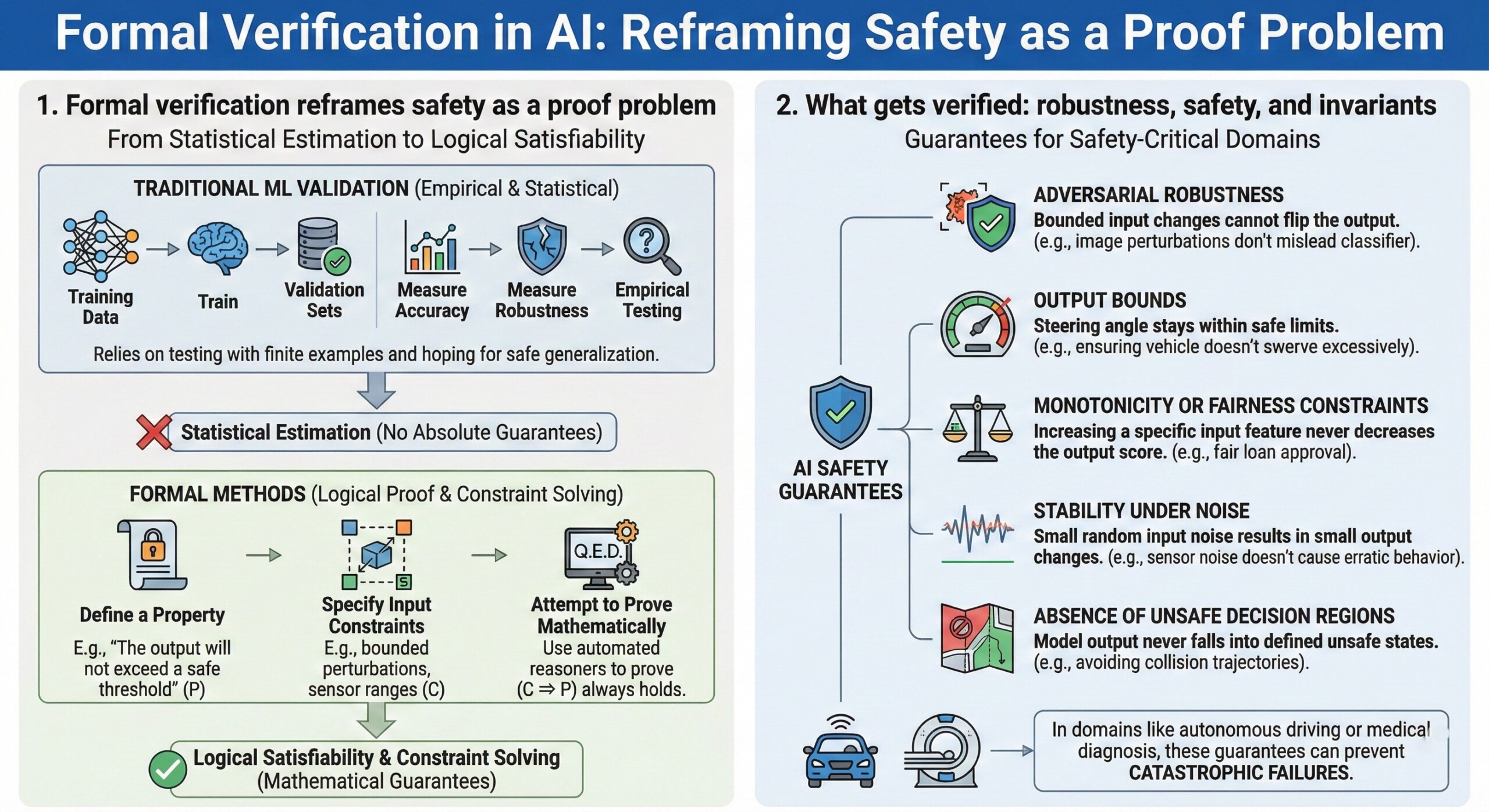

1. Formal verification reframes safety as a proof problem

Traditional ML validation relies on empirical testing: train the model, evaluate on validation sets, measure accuracy or robustness.

Formal methods instead:

- Define a property (e.g., “The output will not exceed a safe threshold”).

- Specify input constraints (e.g., bounded perturbations, sensor ranges).

- Attempt to prove mathematically that the property always holds.

This turns AI safety into a problem of logical satisfiability and constraint solving, rather than statistical estimation.
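
As a minimal sketch of the three steps above, consider the simplest possible case: a single affine model with box-constrained inputs. For an affine function the extreme outputs over a box can be computed exactly, so the proof reduces to a few arithmetic operations. The weights, bias, sensor ranges, and threshold below are hypothetical values chosen for illustration.

```python
from typing import Sequence, Tuple


def affine_output_range(
    weights: Sequence[float],
    bias: float,
    lower: Sequence[float],
    upper: Sequence[float],
) -> Tuple[float, float]:
    """Exact min and max of w.x + b over the box lower <= x <= upper."""
    lo = hi = bias
    for w, l, u in zip(weights, lower, upper):
        # A non-negative weight is minimised at l and maximised at u;
        # a negative weight flips the two.
        if w >= 0:
            lo += w * l
            hi += w * u
        else:
            lo += w * u
            hi += w * l
    return lo, hi


def proves_upper_bound(weights, bias, lower, upper, threshold) -> bool:
    """True only if the output cannot exceed threshold anywhere in the box."""
    _, hi = affine_output_range(weights, bias, lower, upper)
    return hi <= threshold


# Hypothetical model and input constraints (illustration only).
WEIGHTS = [0.5, -0.25]
BIAS = 0.1
LOWER = [0.0, -1.0]   # assumed sensor minimums
UPPER = [1.0, 1.0]    # assumed sensor maximums

print(affine_output_range(WEIGHTS, BIAS, LOWER, UPPER))
print(proves_upper_bound(WEIGHTS, BIAS, LOWER, UPPER, threshold=1.0))
```

Unlike a test suite, the check covers every point in the box, not a sample of them. Real networks are not affine, which is where the techniques discussed later come in.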

2. What gets verified: robustness, safety, and invariants

Formal verification in AI typically focuses on properties such as:

- Adversarial robustness (bounded input changes cannot flip the output)

- Output bounds (e.g., steering angle stays within safe limits)

- Monotonicity or fairness constraints

- Stability under noise

- Absence of unsafe decision regions
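
Three of these properties can be written down formally. The notation is an assumption introduced here for illustration: $f$ is the network, $x_0$ a nominal input, $\epsilon$ a perturbation radius, $\mathcal{X}$ the allowed input region, and $y_{\min}, y_{\max}$ the safe output limits.

```latex
\begin{align*}
\text{Local robustness:}\quad
  & \forall x.\; \|x - x_0\|_\infty \le \epsilon
    \;\Rightarrow\; \arg\max_i f_i(x) = \arg\max_i f_i(x_0) \\
\text{Output bounds:}\quad
  & \forall x \in \mathcal{X}.\; y_{\min} \le f(x) \le y_{\max} \\
\text{Monotonicity in feature } j:\quad
  & \forall x, x' \in \mathcal{X}.\;
    \bigl(x_j \le x'_j \,\wedge\, x_k = x'_k \;\; \forall k \ne j\bigr)
    \;\Rightarrow\; f(x) \le f(x')
\end{align*}
```

Each statement quantifies over all inputs in a region, which is what separates a verified property from a tested one.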

In safety-critical domains like autonomous driving or medical diagnosis, these guarantees can prevent catastrophic failures.

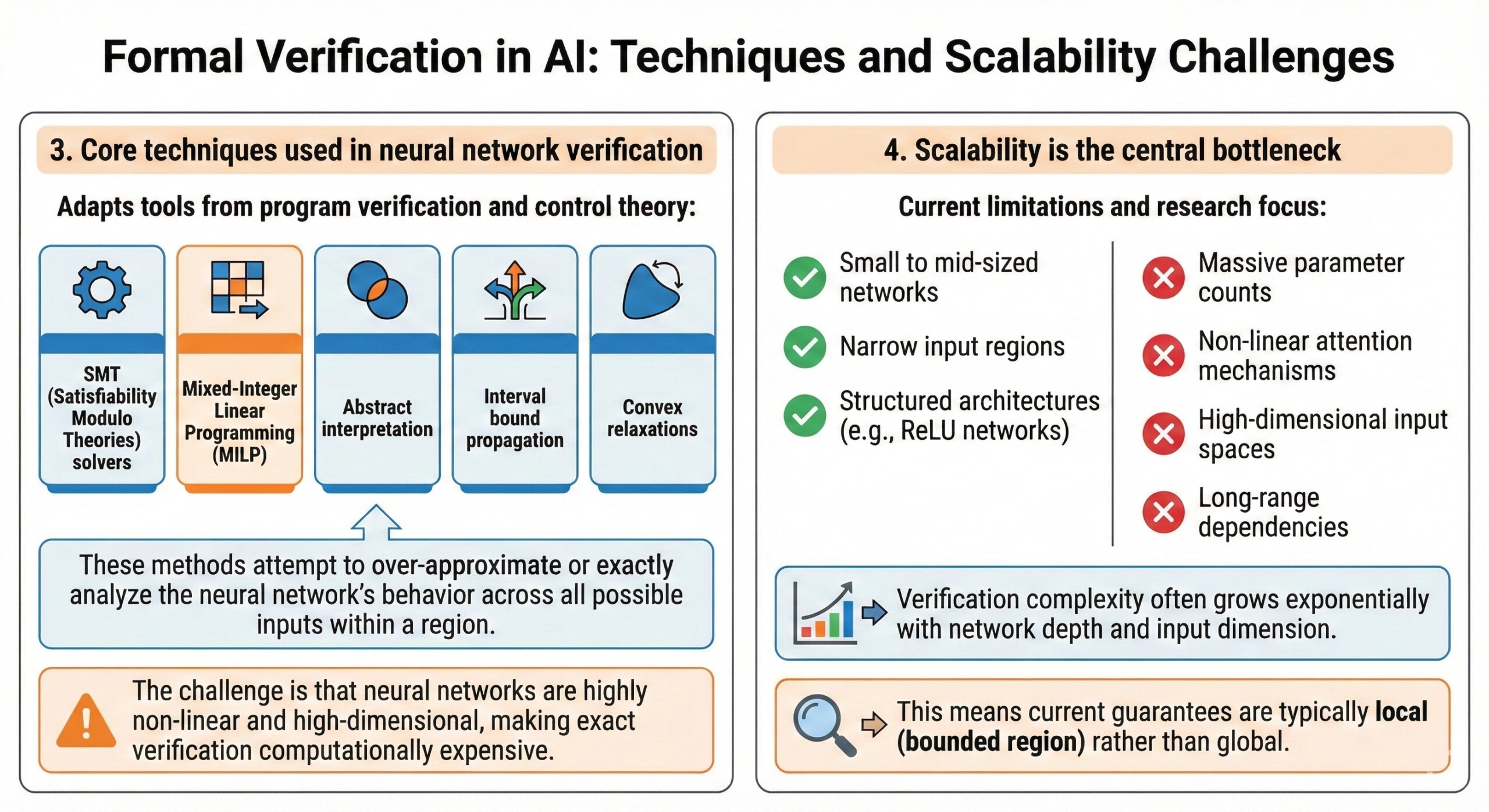

3. Core techniques used in neural network verification

Neural network verification adapts tools from program verification and control theory. Common techniques include:

- SMT (Satisfiability Modulo Theories) solvers

- Mixed-Integer Linear Programming (MILP)

- Abstract interpretation

- Interval bound propagation

- Convex relaxations

These methods attempt to over-approximate or exactly analyze the neural network’s behavior across all possible inputs within a region.

The challenge is that neural networks are highly non-linear and high-dimensional, making exact verification computationally expensive.
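
Interval bound propagation is the easiest of these techniques to sketch. The idea is to push a lower and an upper bound through each layer so that the final interval is guaranteed to contain every reachable output. The small 2-2-1 ReLU network below is hypothetical, and the code is a plain-Python illustration rather than a production verifier.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def affine_bounds(W: Matrix, b: Vector, lo: Vector, hi: Vector) -> Tuple[Vector, Vector]:
    """Sound bounds on W x + b when lo <= x <= hi elementwise."""
    out_lo, out_hi = [], []
    for row, bias in zip(W, b):
        l = u = bias
        for w, a, c in zip(row, lo, hi):
            if w >= 0:
                l += w * a
                u += w * c
            else:
                l += w * c
                u += w * a
        out_lo.append(l)
        out_hi.append(u)
    return out_lo, out_hi


def relu_bounds(lo: Vector, hi: Vector) -> Tuple[Vector, Vector]:
    """ReLU is monotone, so it can be applied to each bound directly."""
    return [max(0.0, v) for v in lo], [max(0.0, v) for v in hi]


def ibp(layers: List[Tuple[Matrix, Vector]], lo: Vector, hi: Vector) -> Tuple[Vector, Vector]:
    """Propagate a box through affine layers with ReLU between them."""
    for i, (W, b) in enumerate(layers):
        lo, hi = affine_bounds(W, b, lo, hi)
        if i < len(layers) - 1:  # no activation after the output layer
            lo, hi = relu_bounds(lo, hi)
    return lo, hi


# Hypothetical 2-2-1 network (illustration only).
LAYERS = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -0.5]),
    ([[1.0, 1.0]], [0.0]),
]

out_lo, out_hi = ibp(LAYERS, [-1.0, -1.0], [1.0, 1.0])
print(out_lo, out_hi)
```

For this network the propagated upper bound is 2.5, while the true maximum over the box is 2.0, attained at x = (1, -1). The bound is sound but not tight, because each hidden unit is bounded independently and the two units cannot reach their maxima at the same input.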

4. Scalability is the central bottleneck

Formal methods work best for:

- Small to mid-sized networks

- Narrow input regions

- Piecewise-linear architectures (e.g., ReLU networks)

But scaling to large transformers or frontier LLMs remains extremely difficult due to:

- Massive parameter counts

- Non-linear attention mechanisms

- High-dimensional input spaces

- Long-range dependencies

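For exact methods, worst-case cost grows exponentially with the number of ReLU units whose activation state cannot be fixed over the input region, and that number tends to rise with network size and with the width of the region.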

This means current guarantees are typically local (bounded region) rather than global.
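
The link between region size and cost can be made concrete with a rough sketch. A ReLU whose pre-activation interval straddles zero is "unstable": an exact verifier may have to consider both its active and inactive case, so n unstable units give up to 2^n cases in the worst case. The layer weights, centre point, and radii below are hypothetical.

```python
from typing import List, Sequence, Tuple


def preactivation_bounds(
    weights: Sequence[Sequence[float]],
    biases: Sequence[float],
    center: Sequence[float],
    radius: float,
) -> List[Tuple[float, float]]:
    """Bounds on each unit's pre-activation over an L-infinity ball."""
    bounds = []
    for row, bias in zip(weights, biases):
        mid = bias + sum(w * c for w, c in zip(row, center))
        spread = radius * sum(abs(w) for w in row)
        bounds.append((mid - spread, mid + spread))
    return bounds


def count_unstable(bounds: Sequence[Tuple[float, float]]) -> int:
    """Units whose sign is not determined anywhere in the region."""
    return sum(1 for lo, hi in bounds if lo < 0.0 < hi)


def worst_case_branches(n_unstable: int) -> int:
    """Upper limit on activation patterns an exact method may explore."""
    return 2 ** n_unstable


# Hypothetical single layer with three hidden units (illustration only).
W = [[1.0, -1.0], [0.5, 0.5], [2.0, 1.0]]
B = [0.5, -0.5, 0.25]
CENTER = [0.5, 0.5]

for radius in (0.1, 1.0):
    n = count_unstable(preactivation_bounds(W, B, CENTER, radius))
    print(f"radius={radius}: {n} unstable, up to {worst_case_branches(n)} cases")
```

With only three units the numbers stay small, but the same doubling applied to thousands of units is what makes narrow regions tractable and wide ones not.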

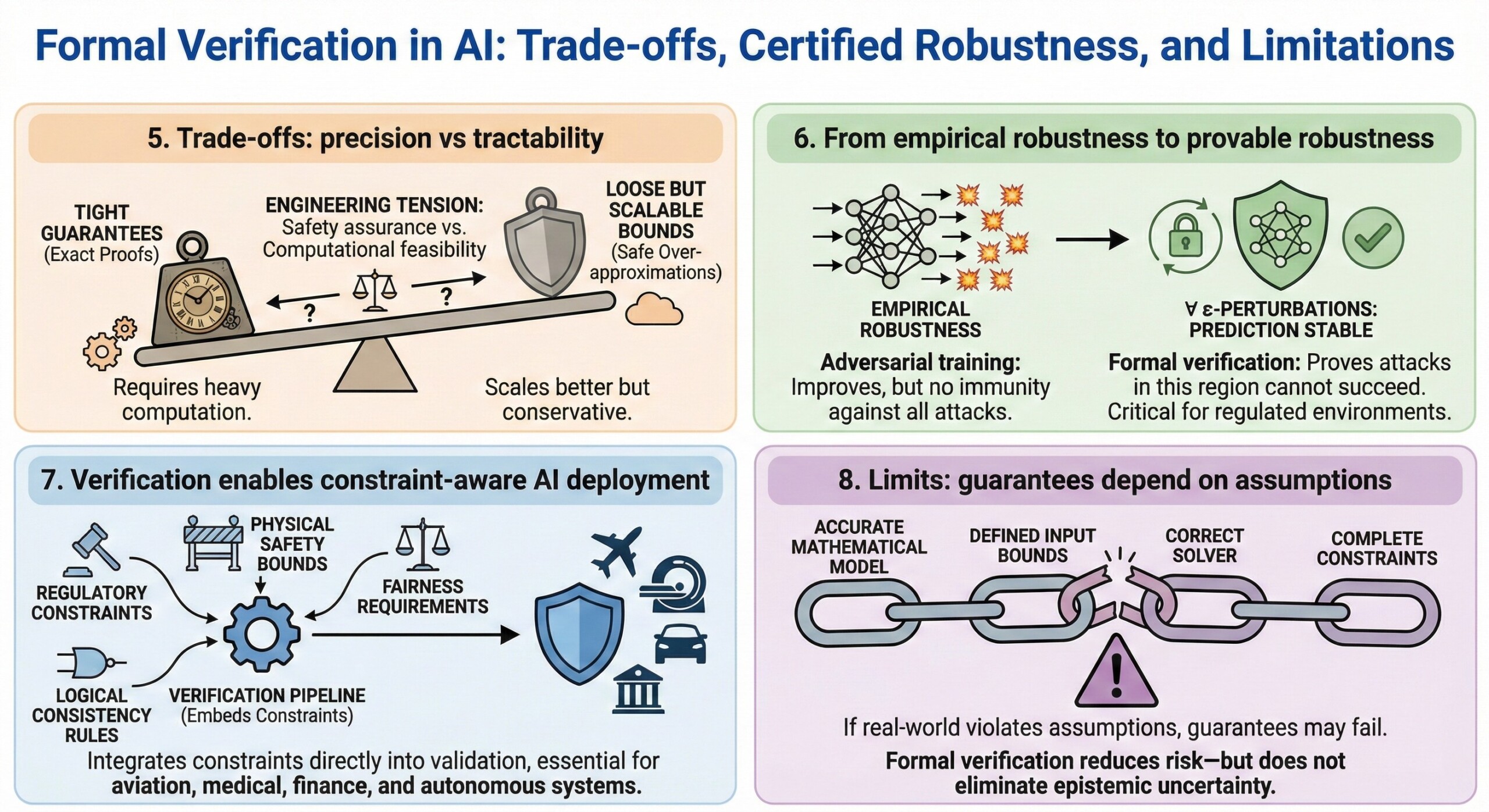

5. Trade-offs: precision vs tractability

Verification methods must balance:

- Tight guarantees (exact proofs)

- Loose but scalable bounds (safe over-approximations)

Tighter proofs require heavy computation.

Looser methods scale better but may be overly conservative: they can fail to certify a property that actually holds.

This introduces a fundamental engineering tension between safety assurance and computational feasibility.
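
A toy example makes the trade-off visible. The function relu(x) + relu(-x) equals |x|, so its true range on [-1, 1] is [0, 1]. Bounding each ReLU independently is cheap but loose, and splitting the input region tightens the result at the price of more work. The function and the splitting scheme are illustrative assumptions, not a specific verifier's algorithm.

```python
from typing import Tuple


def relu_interval(lo: float, hi: float) -> Tuple[float, float]:
    """Bounds on relu(v) for v in [lo, hi]."""
    return max(0.0, lo), max(0.0, hi)


def abs_via_relu_bounds(lo: float, hi: float) -> Tuple[float, float]:
    """Interval bounds on relu(x) + relu(-x), each term bounded independently."""
    a_lo, a_hi = relu_interval(lo, hi)
    b_lo, b_hi = relu_interval(-hi, -lo)
    return a_lo + b_lo, a_hi + b_hi


def bounds_with_splits(lo: float, hi: float, pieces: int) -> Tuple[float, float]:
    """Split [lo, hi] into equal pieces, bound each, and take the union."""
    width = (hi - lo) / pieces
    results = [
        abs_via_relu_bounds(lo + i * width, lo + (i + 1) * width)
        for i in range(pieces)
    ]
    return min(r[0] for r in results), max(r[1] for r in results)


print(bounds_with_splits(-1.0, 1.0, pieces=1))  # one cheap pass over the region
print(bounds_with_splits(-1.0, 1.0, pieces=2))  # one split at x = 0
```

Without splitting, the upper bound is 2.0, double the true maximum. Splitting at zero fixes the sign of x in each piece, both ReLUs become stable, and the bound becomes exact. In larger networks the number of pieces needed for exactness can grow very quickly, which is the tension described above.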

6. From empirical robustness to provable robustness

Adversarial training improves robustness empirically, but does not guarantee immunity.

Formal verification enables certified robustness, meaning:

For all perturbations within ε of the input, the prediction cannot change.

This moves safety from “we tested many attacks” to “we proved attacks in this region cannot succeed.”

That distinction is critical in regulated environments.
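
For a linear classifier, a certificate of this kind can be computed exactly, which makes it a convenient illustration. Under an L-infinity perturbation of size ε, the margin between the predicted class c and another class j can shrink by at most ε times the L1 norm of the difference between their weight rows. The weights and the input below are hypothetical, and deep networks need the bounding techniques discussed earlier instead of this closed form.

```python
from typing import List, Sequence


def logits(W: Sequence[Sequence[float]], b: Sequence[float], x: Sequence[float]) -> List[float]:
    """Scores of a linear classifier z = W x + b."""
    return [bias + sum(w * xi for w, xi in zip(row, x)) for row, bias in zip(W, b)]


def certified_linf(W, b, x, eps: float) -> bool:
    """True if no perturbation with L-infinity norm <= eps changes the argmax."""
    z = logits(W, b, x)
    c = max(range(len(z)), key=z.__getitem__)  # predicted class
    for j in range(len(z)):
        if j == c:
            continue
        margin = z[c] - z[j]
        # Largest possible drop in the margin within the eps-ball.
        worst_drop = eps * sum(abs(wc - wj) for wc, wj in zip(W[c], W[j]))
        if margin - worst_drop <= 0:
            return False  # some perturbation could tie or flip the prediction
    return True


# Hypothetical two-class linear model and input (illustration only).
W = [[1.0, 0.0], [0.0, 1.0]]
B = [0.0, 0.0]
X = [2.0, 1.0]

print(certified_linf(W, B, X, eps=0.25))
print(certified_linf(W, B, X, eps=0.5))
```

Here the margin is 1 and the worst-case drop is 2ε, so the prediction is certified for any ε below 0.5 and not at ε = 0.5, where a perturbation can produce a tie.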

7. Verification enables constraint-aware AI deployment

Formal methods allow integration of:

- Regulatory constraints

- Physical safety bounds

- Fairness or monotonicity requirements

- Logical consistency rules

Instead of treating safety as a post-hoc audit step, verification embeds constraints directly into the model validation pipeline.

This is especially important in aviation, medical devices, finance, and autonomous systems.
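
One way to picture this is a release gate that blocks deployment unless every required property has been proven. The sketch below is hypothetical throughout: the `PropertySpec` type, the `release_gate` function, the linear scoring model, and the property names are all assumptions made for illustration, with inputs taken to lie in the unit box.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PropertySpec:
    name: str
    check: Callable[[], bool]  # returns True only if the property is proven


def release_gate(specs: List[PropertySpec]) -> List[str]:
    """Names of properties that could not be proven; empty means the gate passes."""
    return [spec.name for spec in specs if not spec.check()]


# Hypothetical linear scoring model with every input feature in [0, 1].
WEIGHTS = [0.4, 0.3]
BIAS = 0.1


def max_score_on_unit_box() -> float:
    """Exact maximum of the linear score when each feature lies in [0, 1]."""
    return BIAS + sum(max(w, 0.0) for w in WEIGHTS)


SPECS = [
    # A linear model is non-decreasing in every feature iff no weight is negative.
    PropertySpec("monotone_in_all_features", lambda: all(w >= 0 for w in WEIGHTS)),
    PropertySpec("score_at_most_1.0", lambda: max_score_on_unit_box() <= 1.0),
    PropertySpec("score_at_most_0.5", lambda: max_score_on_unit_box() <= 0.5),
]

failed = release_gate(SPECS)
print("blocked by:" if failed else "all properties proven", failed)
```

The third property is deliberately too strict, so the gate reports it and the release is blocked. In practice each `check` would call a verifier rather than a closed-form test.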

8. Limits: guarantees depend on assumptions

Formal guarantees are only as strong as:

- The accuracy of the mathematical model

- The defined input bounds

- The correctness of the solver

- The completeness of specified constraints

If the real-world environment violates assumptions, guarantees may no longer hold.

Formal verification reduces risk but does not eliminate epistemic uncertainty.
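
A small sketch shows how a guarantee lapses when an assumption is violated. Suppose an output bound of 1.0 was proven for a model only over a specific input box. A runtime guard can check whether each input is inside that box and refuse to rely on the guarantee otherwise. The model, the box, and the function names are hypothetical.

```python
from typing import Callable, Sequence


def in_verified_region(x: Sequence[float], lower: Sequence[float], upper: Sequence[float]) -> bool:
    """True if x lies inside the box the proof was carried out for."""
    return all(l <= v <= u for v, l, u in zip(x, lower, upper))


def guarded_predict(
    model: Callable[[Sequence[float]], float],
    x: Sequence[float],
    lower: Sequence[float],
    upper: Sequence[float],
) -> float:
    """Run the model only when the verified assumptions actually hold."""
    if not in_verified_region(x, lower, upper):
        raise ValueError("input outside verified region; guarantee does not apply")
    return model(x)


def model(x: Sequence[float]) -> float:
    """Hypothetical affine model; its output is at most 0.85 on the box below."""
    return 0.1 + 0.5 * x[0] - 0.25 * x[1]


LOWER = [0.0, -1.0]  # assumed bounds used in the proof
UPPER = [1.0, 1.0]

inside = [1.0, -1.0]
outside = [3.0, -1.0]  # e.g. a sensor reading beyond its assumed range

print(guarded_predict(model, inside, LOWER, UPPER))
print(in_verified_region(outside, LOWER, UPPER), model(outside))
```

The out-of-range input drives the raw model output above 1.0, even though the bound was correctly proven. The proof was not wrong; the input simply fell outside what it covered.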

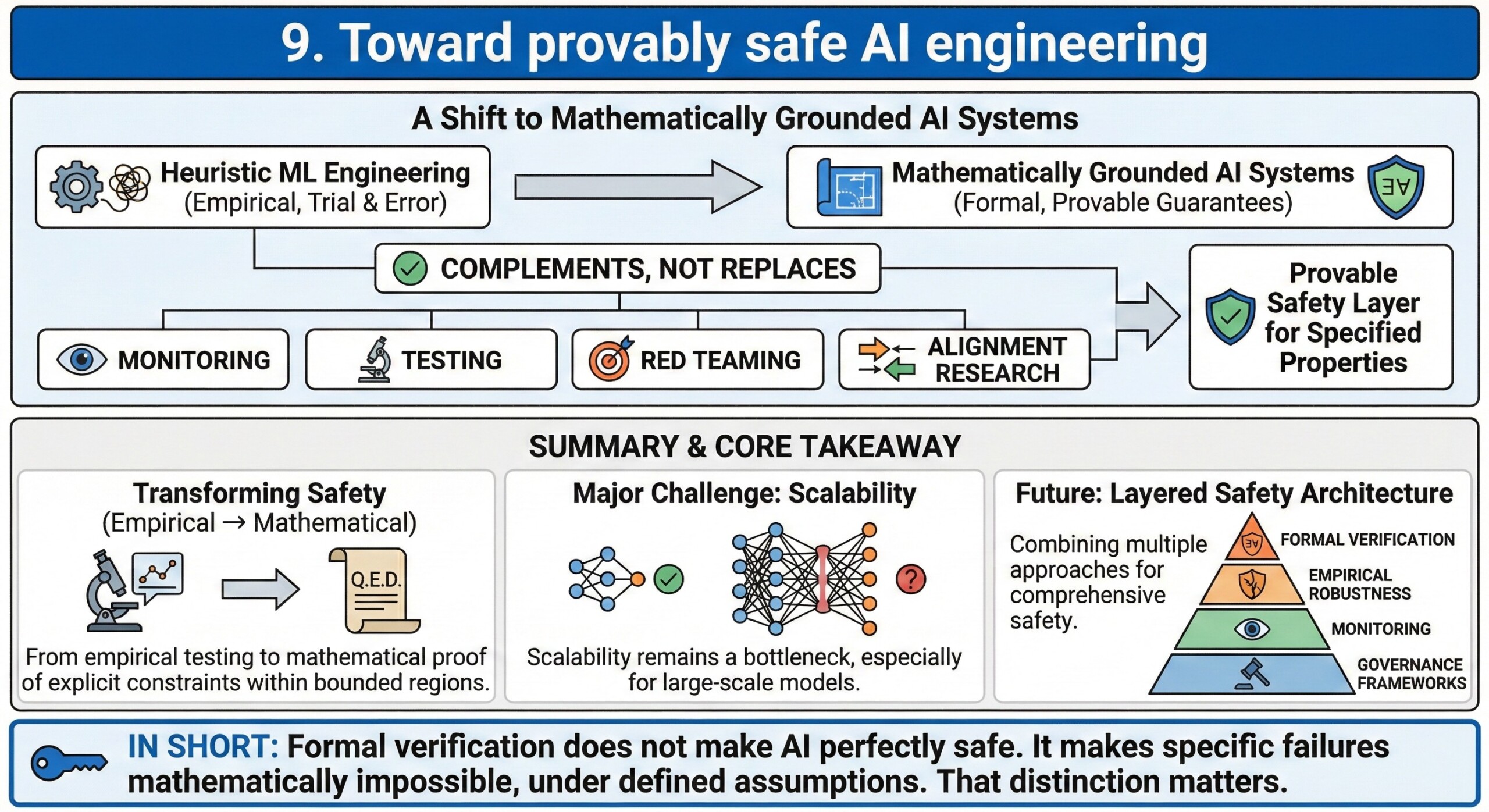

9. Toward provably safe AI engineering

Formal verification represents a shift from heuristic ML engineering to mathematically grounded AI systems.

However, it is not a replacement for:

- Monitoring

- Testing

- Red teaming

- Alignment research

Instead, it complements them by providing a layer of provable safety for clearly specified properties.

Summary

Formal Methods and Verification in AI transform safety from empirical testing into mathematical proof. By defining explicit constraints and proving that models cannot violate them within bounded regions, verification offers stronger guarantees than traditional validation.

Yet scalability remains the major challenge, especially for large-scale models. The future of safe AI will likely combine formal verification, empirical robustness, monitoring, and governance frameworks into a layered safety architecture.

In short:

Formal verification does not make AI perfectly safe.

It makes specific failures mathematically impossible, under defined assumptions.

That distinction matters.