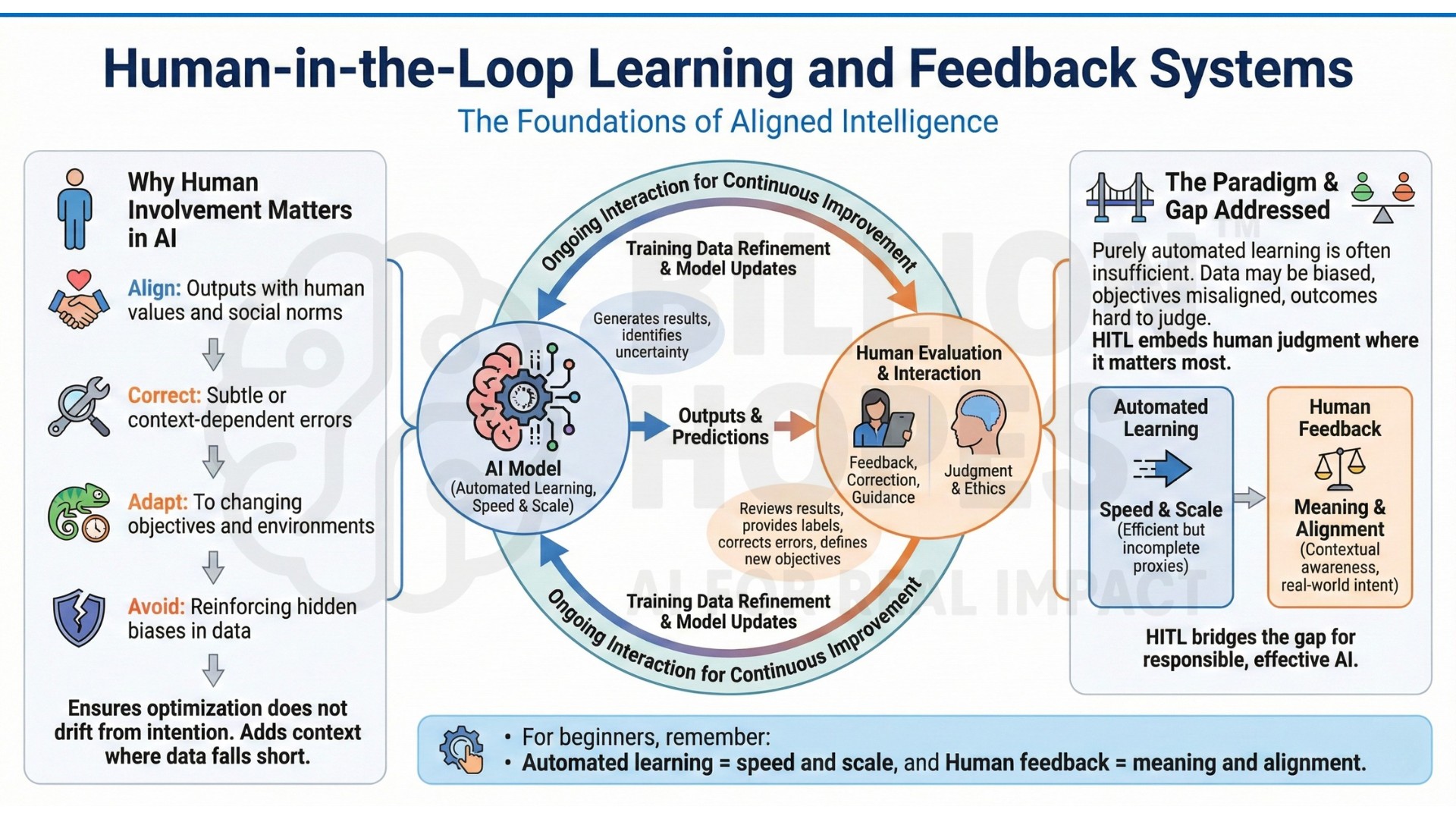

Human-in-the-Loop Learning and Feedback Systems

Modern AI systems do not learn in isolation. Behind every effective intelligent system lies an ongoing interaction between machines and humans – through feedback, correction, evaluation, and guidance. This paradigm, known as Human-in-the-Loop (HITL) learning, places humans directly inside the learning and decision-making cycle of AI systems.

As AI models become more powerful and autonomous, purely automated learning is often insufficient. Data may be biased, objectives may be misaligned, and outcomes may be hard to judge using metrics alone. Human-in-the-loop systems address this gap by embedding human judgment where learning, adaptation, and accountability matter most.

In this sense, Human-in-the-Loop learning can be regarded as a foundation of aligned intelligence.

1. Why human involvement matters in AI

No AI system truly understands goals, values, or consequences on its own. Learning algorithms optimize for signals we define – but those signals are often incomplete proxies for real-world intent.

Without human feedback, AI systems struggle to:

- Align outputs with human values and social norms

- Correct subtle or context-dependent errors

- Adapt to changing objectives and environments

- Avoid reinforcing hidden biases in data

Human-in-the-loop learning ensures that optimization does not drift away from intention. It adds judgment, ethics, and contextual awareness where data alone falls short.

For beginners, remember:

- Automated learning = speed and scale

- Human feedback = meaning and alignment

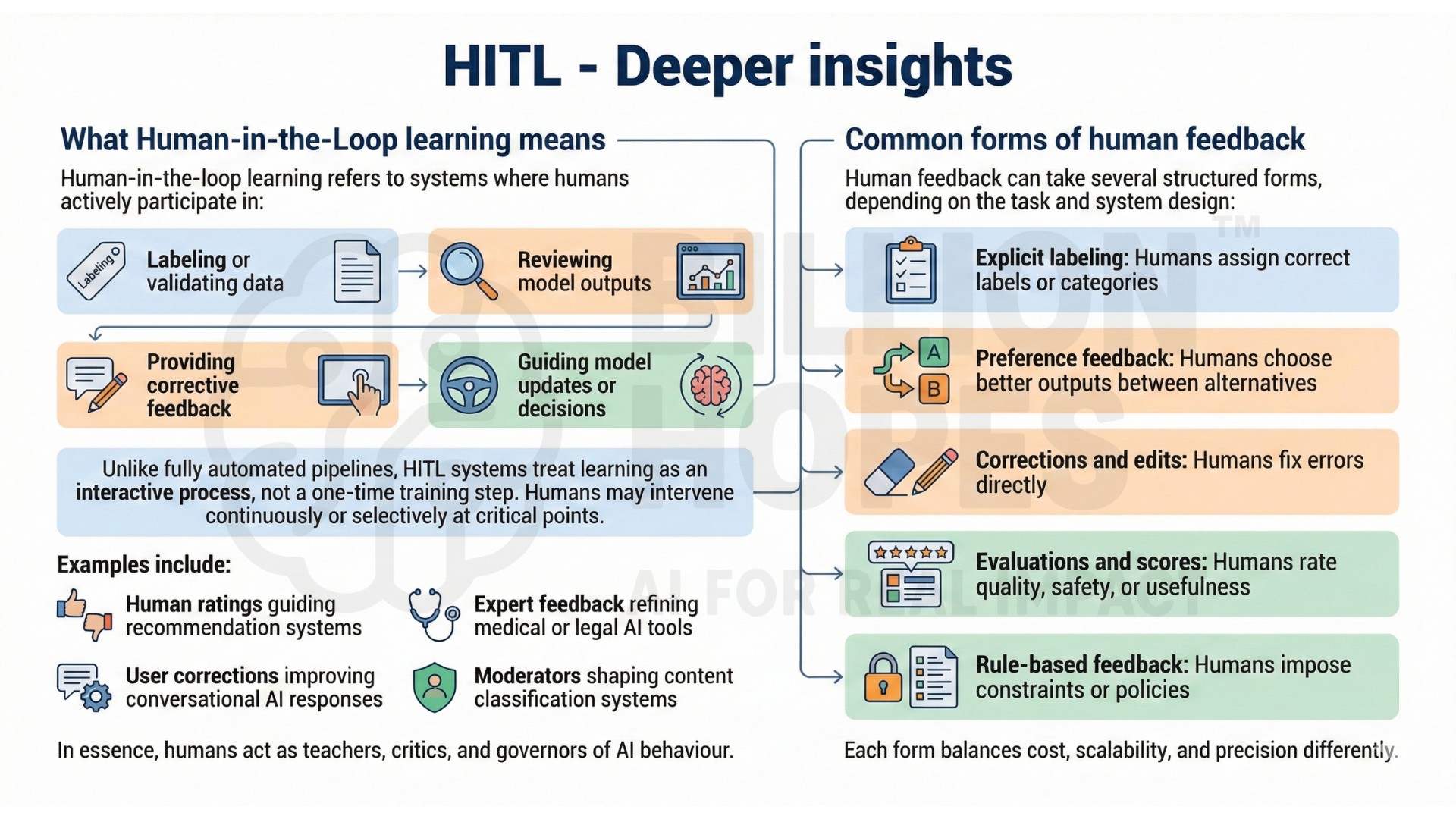

2. What Human-in-the-Loop learning means

Human-in-the-loop learning refers to systems where humans actively participate in:

- Labeling or validating data

- Reviewing model outputs

- Providing corrective feedback

- Guiding model updates or decisions

Unlike fully automated pipelines, HITL systems treat learning as an interactive process, not a one-time training step. Humans may intervene continuously or selectively at critical points.

Examples include:

- Human ratings guiding recommendation systems

- Expert feedback refining medical or legal AI tools

- User corrections improving conversational AI responses

- Moderators shaping content classification systems

In essence, humans act as teachers, critics, and governors of AI behaviour.

3. Common forms of human feedback

Human feedback can take several structured forms, depending on the task and system design:

- Explicit labeling: Humans assign correct labels or categories

- Preference feedback: Humans choose better outputs between alternatives

- Corrections and edits: Humans fix errors directly

- Evaluations and scores: Humans rate quality, safety, or usefulness

- Rule-based feedback: Humans impose constraints or policies

Each form balances cost, scalability, and precision differently.
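To make preference feedback concrete, here is a minimal sketch of one common way to turn pairwise human choices into numeric scores: fitting a Bradley-Terry model, in which the probability that output a is preferred over output b is sigmoid(score_a - score_b). The function name `fit_bradley_terry`, the step count, the learning rate, and the toy comparisons are all assumptions made for illustration, not part of any particular system.

```python
import math

def fit_bradley_terry(comparisons, steps=500, lr=0.1):
    """Fit one score per item from (winner, loser) pairs.

    Uses plain gradient ascent on the Bradley-Terry log-likelihood,
    where P(winner beats loser) = sigmoid(score_winner - score_loser).
    """
    items = {item for pair in comparisons for item in pair}
    scores = {item: 0.0 for item in items}
    for _ in range(steps):
        grads = {item: 0.0 for item in items}
        for winner, loser in comparisons:
            p_win = 1.0 / (1.0 + math.exp(scores[loser] - scores[winner]))
            # Gradient of log(p_win) with respect to each score.
            grads[winner] += 1.0 - p_win
            grads[loser] -= 1.0 - p_win
        for item in items:
            scores[item] += lr * grads[item]
    return scores

# Hypothetical human judgments: each tuple is (preferred output, other output).
comparisons = [
    ("A", "B"), ("A", "B"), ("A", "B"), ("B", "A"),
    ("B", "C"), ("B", "C"), ("C", "B"),
    ("A", "C"),
]
scores = fit_bradley_terry(comparisons)
ranking = sorted(scores, key=scores.get, reverse=True)
```

The fitted scores are only meaningful relative to one another. In this toy data, output A is usually preferred, so it ends up ranked above B, and B above C.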

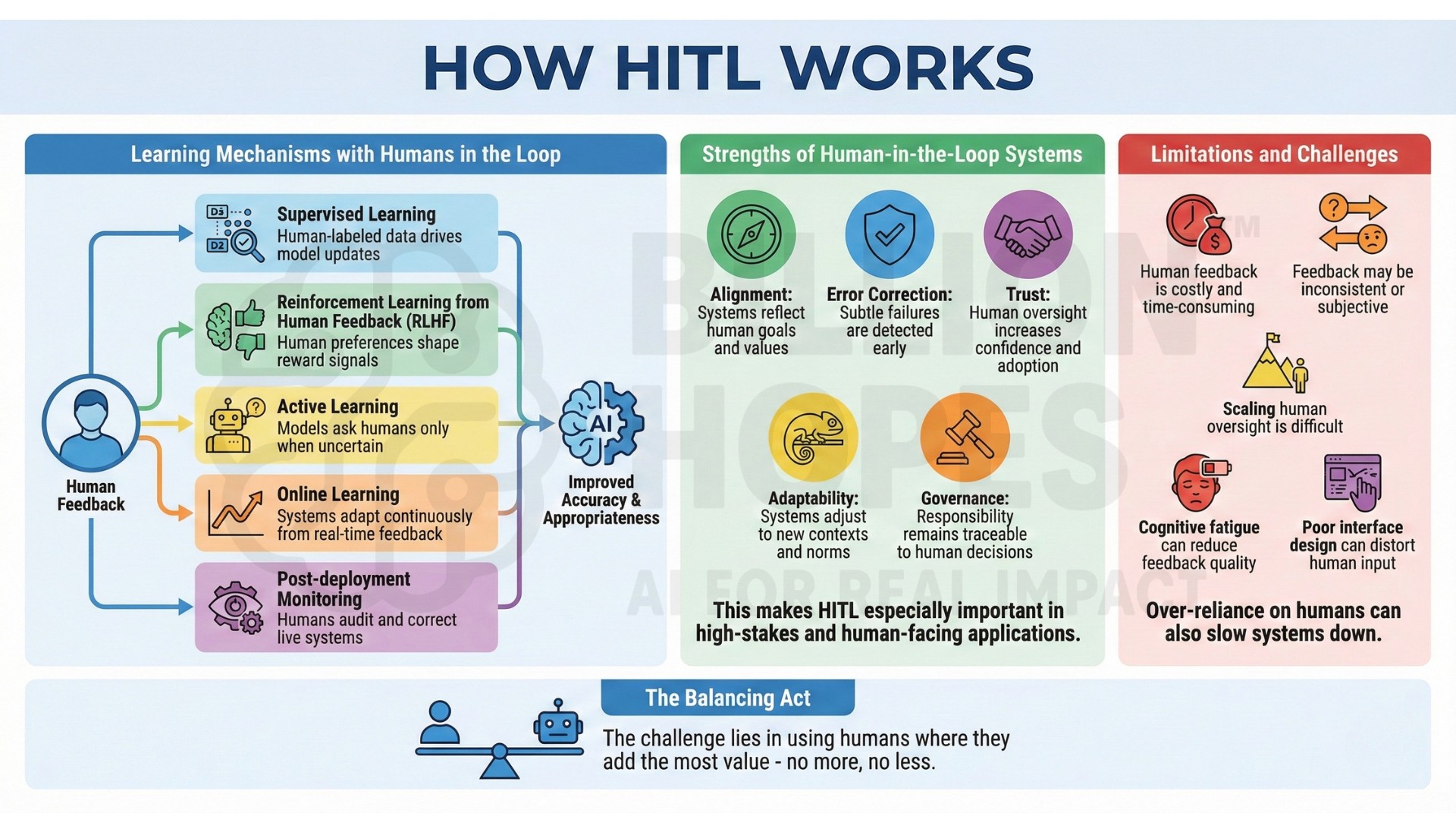

4. Learning mechanisms with humans in the loop

Once feedback is collected, learning systems incorporate it through different mechanisms:

- Supervised learning: Human-labeled data drives model updates

- Reinforcement learning from human feedback (RLHF): Human preferences train a reward signal that guides further model optimization

- Active learning: Models ask humans only when uncertain

- Online learning: Systems adapt continuously from real-time feedback

- Post-deployment monitoring: Humans audit and correct live systems

These mechanisms allow AI systems to improve not just accuracy, but appropriateness.
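As an illustration of the active learning idea above, the following sketch picks the items a model is least sure about and sends only those to human labelers. It uses entropy-based uncertainty sampling, which is one of several possible selection strategies. The names `select_for_labeling` and `budget`, and the example probabilities, are hypothetical.

```python
import math

def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def select_for_labeling(predictions, budget):
    """Return the ids of the `budget` items with the most uncertain predictions.

    `predictions` maps an item id to the model's class probabilities.
    """
    ranked = sorted(
        predictions,
        key=lambda item_id: entropy(predictions[item_id]),
        reverse=True,
    )
    return ranked[:budget]

# Hypothetical model outputs for four unlabeled documents (two classes).
predictions = {
    "doc-1": [0.98, 0.02],
    "doc-2": [0.55, 0.45],
    "doc-3": [0.70, 0.30],
    "doc-4": [0.50, 0.50],
}
to_label = select_for_labeling(predictions, budget=2)
# to_label == ["doc-4", "doc-2"]: the two predictions closest to a coin flip
```

The confident prediction for `doc-1` never reaches a human, which is where the savings in labeling cost come from.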

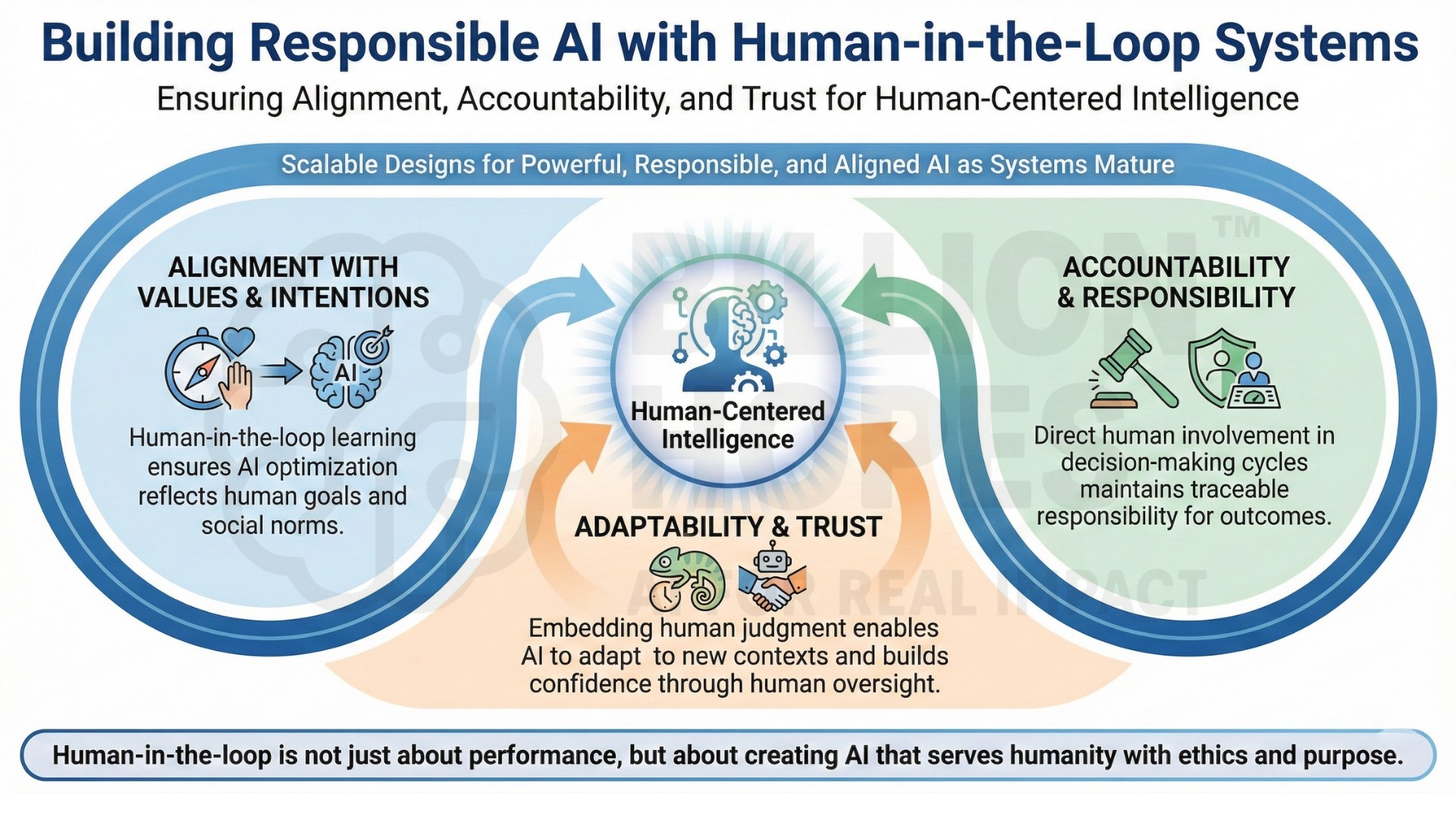

5. Strengths of Human-in-the-Loop systems

Human-in-the-loop learning provides critical advantages over fully automated approaches:

- Alignment: Systems reflect human goals and values

- Error correction: Subtle failures are detected early

- Trust: Human oversight increases confidence and adoption

- Adaptability: Systems adjust to new contexts and norms

- Governance: Responsibility remains traceable to human decisions

This makes HITL especially important in high-stakes and human-facing applications.

6. Limitations and challenges

Despite their benefits, HITL systems face practical constraints:

- Human feedback is costly and time-consuming

- Feedback may be inconsistent or subjective

- Scaling human oversight is difficult

- Cognitive fatigue can reduce feedback quality

- Poor interface design can distort human input

Over-reliance on humans can also slow systems down. The challenge lies in using humans where they add the most value – no more, no less.
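One way to quantify the inconsistency mentioned above is to measure how often two annotators agree beyond what chance would predict. The sketch below computes Cohen's kappa for two annotators labeling the same items. The label lists are invented for illustration, and the choice of kappa over other agreement measures is an assumption of this example.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: agreement between two annotators, corrected for chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Fraction of items on which the annotators gave the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labeled at random
    # according to their own label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used the same single label throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical moderation labels from two reviewers on six items.
reviewer_1 = ["safe", "safe", "unsafe", "safe", "unsafe", "safe"]
reviewer_2 = ["safe", "unsafe", "unsafe", "safe", "unsafe", "safe"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Low values can signal that guidelines, interfaces, or task definitions need attention before the feedback is used for training.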

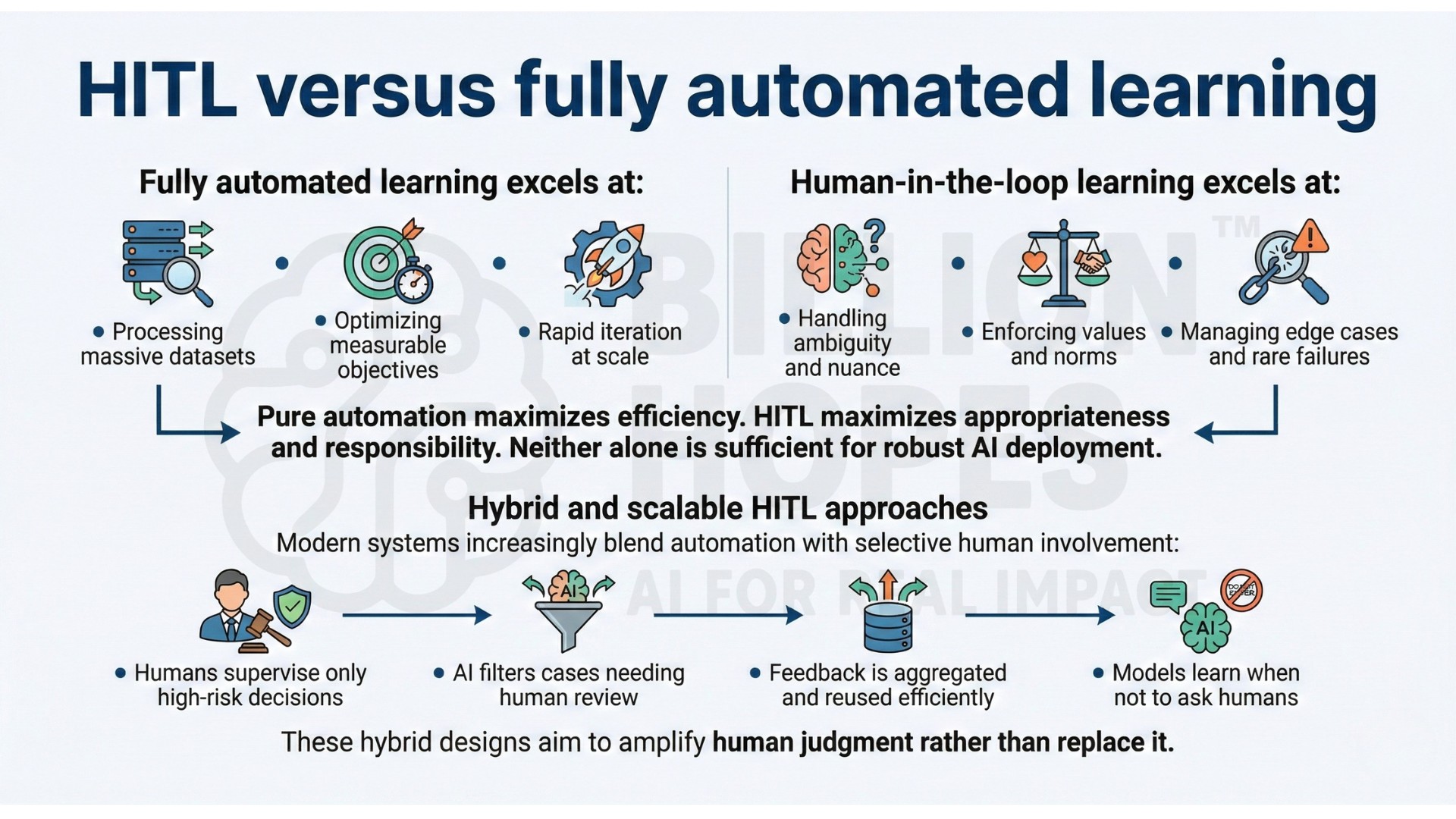

7. Human-in-the-Loop vs fully automated learning

Fully automated learning excels at:

- Processing massive datasets

- Optimizing measurable objectives

- Rapid iteration at scale

Human-in-the-loop learning excels at:

- Handling ambiguity and nuance

- Enforcing values and norms

- Managing edge cases and rare failures

Pure automation maximizes efficiency. HITL maximizes appropriateness and responsibility. Neither alone is sufficient for robust AI deployment.

8. Hybrid and scalable HITL approaches

Modern systems increasingly blend automation with selective human involvement:

- Humans supervise only high-risk decisions

- AI filters cases needing human review

- Feedback is aggregated and reused efficiently

- Models learn when not to ask humans

These hybrid designs aim to amplify human judgment rather than replace it.
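The routing pattern described above can be sketched in a few lines: a case goes to a human reviewer if it is flagged as high-risk or if the model's confidence falls below a threshold, and is handled automatically otherwise. The `Prediction` fields, the `route` function, and the 0.9 threshold are assumptions for illustration. A real deployment would set them from its own risk policy.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model's probability for `label`, in [0, 1]
    high_risk: bool    # set by a hypothetical upstream risk policy

def route(prediction, min_confidence=0.9):
    """Return "human" if the case needs review, otherwise "auto"."""
    if prediction.high_risk or prediction.confidence < min_confidence:
        return "human"
    return "auto"

# Hypothetical batch of model decisions.
batch = [
    Prediction("case-1", "approve", 0.97, high_risk=False),
    Prediction("case-2", "approve", 0.62, high_risk=False),
    Prediction("case-3", "reject", 0.99, high_risk=True),
]
routes = {p.item_id: route(p) for p in batch}
# case-1 is handled automatically; case-2 (low confidence) and
# case-3 (high risk) are sent to a human reviewer.
```

Raising `min_confidence` sends more cases to people and lowers automation, so the threshold is effectively a dial between review cost and oversight.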

9. Real-world applications of Human-in-the-Loop learning

HITL systems are central to:

- Content moderation and safety systems

- Medical diagnosis and clinical decision support

- Legal review and compliance tools

- Conversational AI and copilots

- Autonomous systems with safety constraints

In enterprise, governance, and public-sector AI, human oversight is not optional – it is foundational.

10. The future role of Human-in-the-Loop systems

As AI systems become more autonomous and socially embedded, the role of humans will shift from operators to supervisors, evaluators, and stewards.

Human-in-the-loop learning will be essential for:

- Ethical alignment

- Policy compliance

- Trustworthy deployment

- Continuous adaptation to societal change

Rather than slowing AI down, HITL systems will define where and how AI should act at all.

Summary

Human-in-the-loop learning and feedback systems ensure that AI remains aligned with human values, intentions, and responsibilities. By embedding human judgment directly into learning and decision-making cycles, these systems enable adaptability, accountability, and trust that purely automated approaches cannot achieve. As AI systems mature, scalable human-in-the-loop designs will be central to building intelligence that is not just powerful – but responsible, aligned, and human-centered.