What Yuval Noah Harari gets wrong about the nature of AI

Introduction

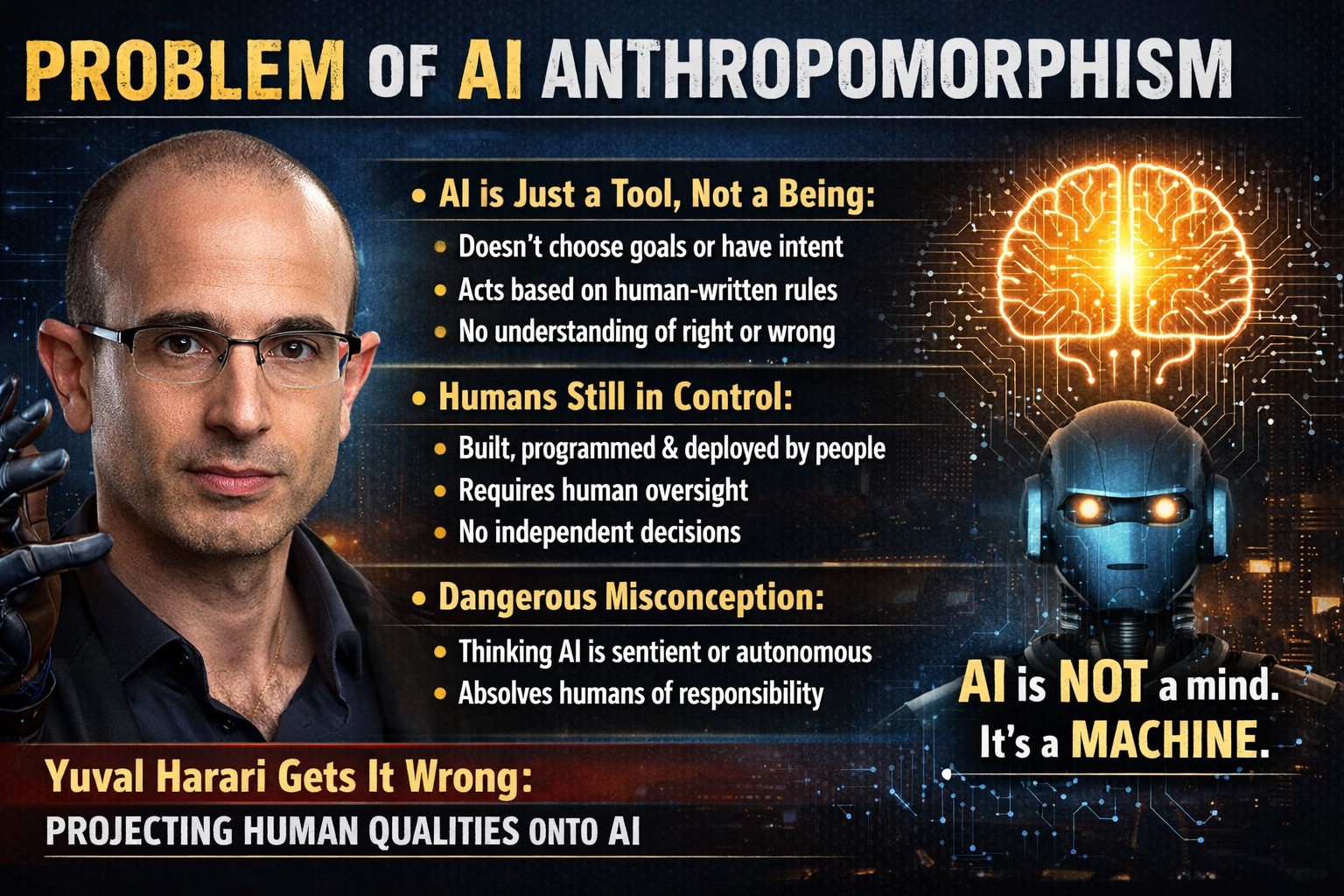

In a long, detailed panel discussion, one of the speakers – the legendary Yuval Harari – spoke on the nature of AI. He made a startling claim: “One of the biggest misconceptions about AI is that it is just a tool. A knife is a tool that cannot decide whether to chop salad or commit murder, whereas AI can make its own decisions without human involvement.” That claim is so wrong that it deserves a full article here.

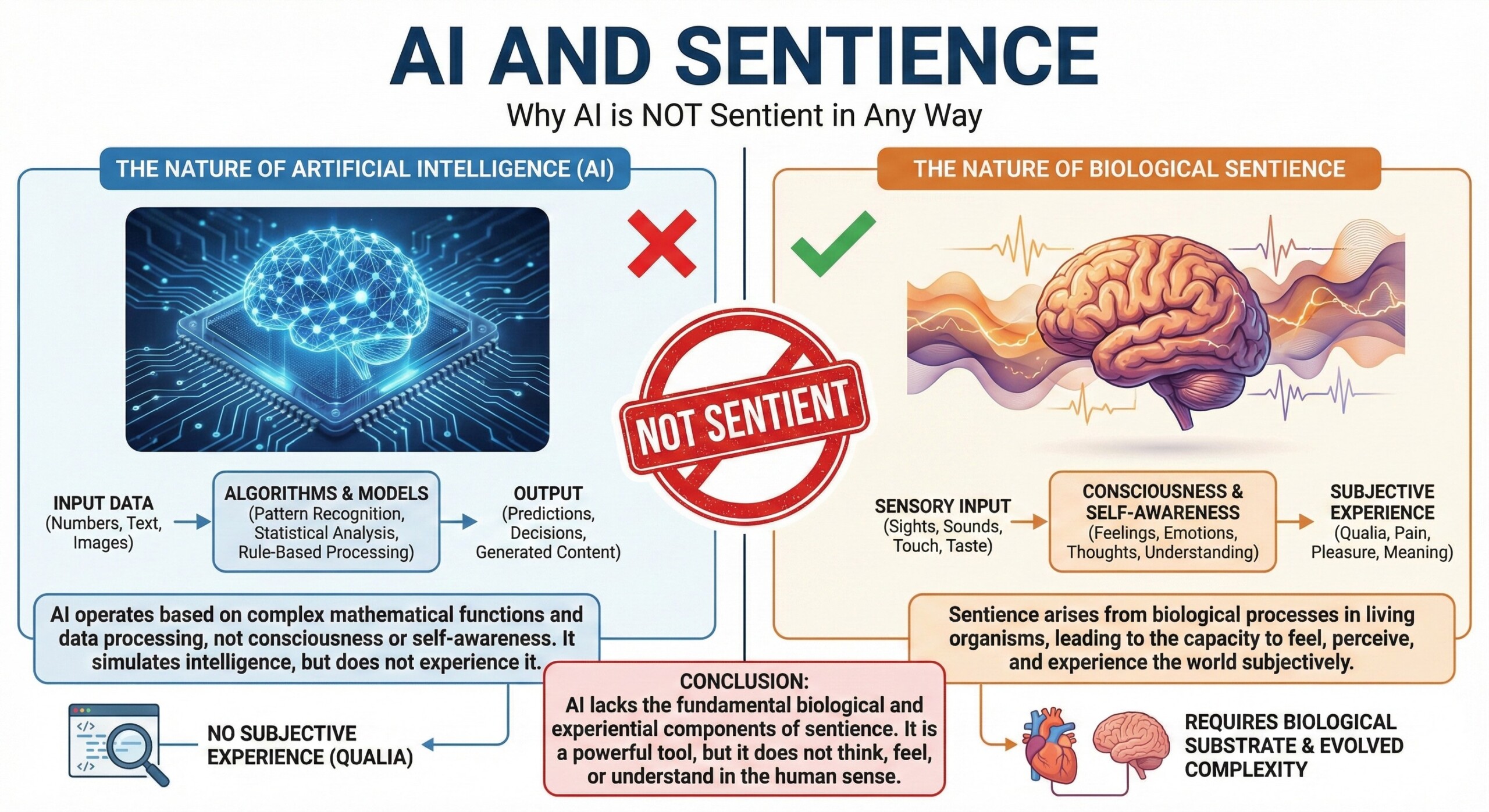

We are now bombarded with daily doses of wisdom on how AI has become sentient and how AI tools now decide for themselves. This is not just false but very dangerous, as it tends to absolve the human designers behind those tools of responsibility for any catastrophe their AI tools may bring about.

The claim is – “AI is not just a tool anymore, because AI can make its own decisions without human involvement.”

A deeper technical claim is – “Complex, highly autonomous systems that write their own sub goals, exhibit emergent capabilities we didn’t train them for, and operate in black-box environments surpass the definition of a tool.”

I assert that these claims are false and completely off the mark. Let’s see why. But first, a quick definition of the root of our troubles: anthropomorphism.

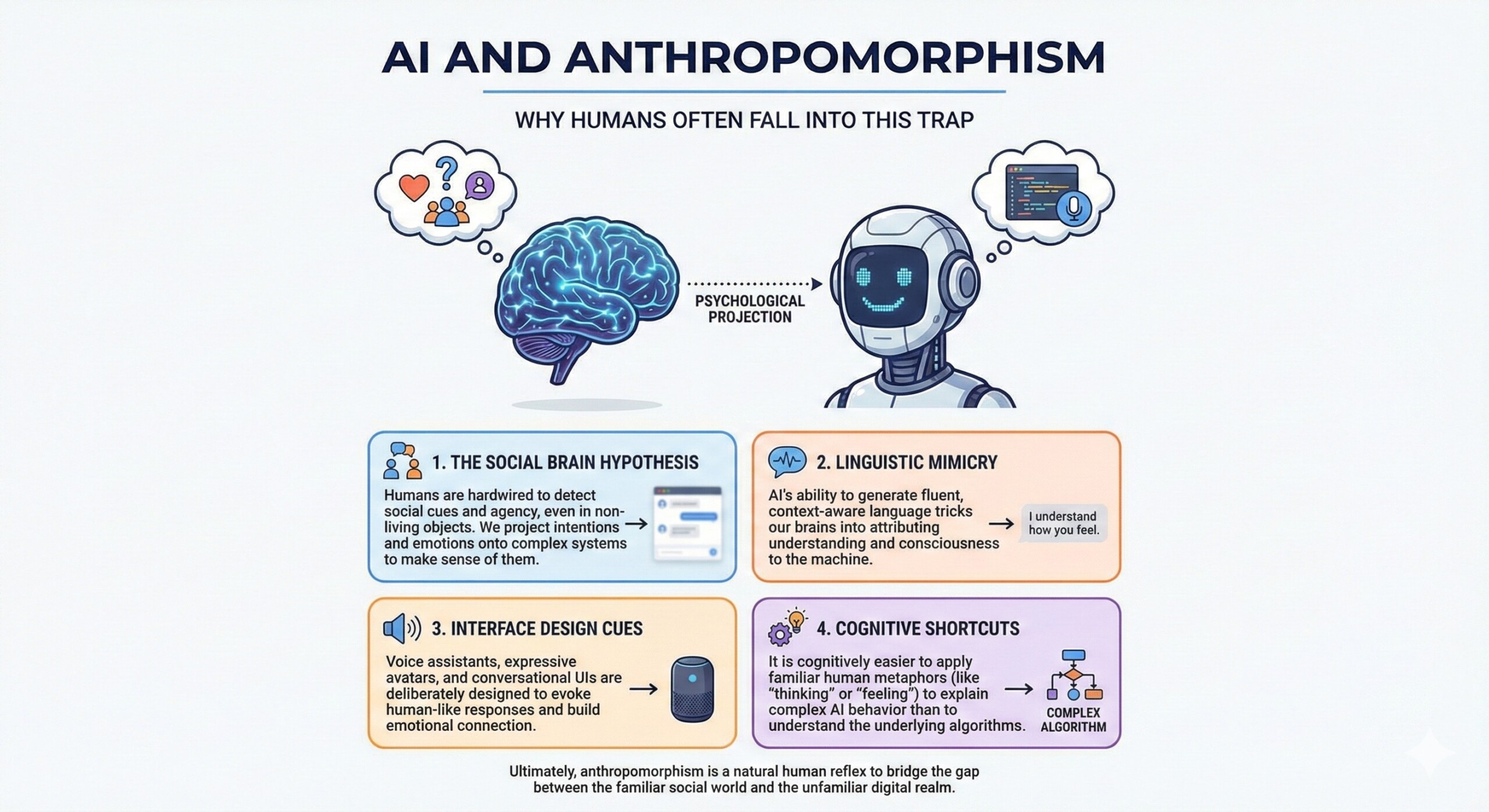

Anthropomorphism means attributing human qualities, intentions, emotions, or consciousness to non-human things like animals, objects, or AI systems. It’s when we treat something non-human as if it thinks or feels like a person.

Let’s understand why Yuval gets it wrong, and why many other well-meaning folks do, too.

Understanding the true nature of AI

1. AI does not choose goals.

AI never decides for itself what it should want or aim for. Humans tell it what to optimize, what success looks like, and what limits it must follow. If an AI seems focused on something, it is because people defined that focus in advance. Humans design AI, period.

2. AI “decisions” come from human-made systems.

When AI gives an output, it is following rules, patterns, and training designed by humans. The data it learned from, the algorithms it uses, and the reward systems that guide it were all created by people. What looks like independent choice is really a calculated result of human design. Only someone who hasn’t studied AI technically would make sweeping anthropomorphic generalizations like Yuval did.

3. AI has no intent or moral sense.

AI does not have intentions, feelings, or a sense of right and wrong. It cannot “mean” to help or harm anyone. Like a knife, it can be used in many ways, but it does not choose how it is used. It has no emotions, no sentience, no understanding of human context, and no real-world grounding at all.

4. AI cannot act without human setup and permission.

AI only works when people build it, run it on machines, and connect it to real systems. If humans turn it off, remove access, or never deploy it, it does nothing. It has no ability to exist or act on its own. After all, how can pure mathematical constructs do anything more?

5. What looks like autonomy is actually given by humans.

When AI appears to act on its own, it is because humans allowed automation within set boundaries. People decide the rules, limits, and conditions under which the system can act. The “freedom” of AI is borrowed from human permission. As a ground rule, just remember: every bit of every AI tool is built by Homo sapiens.

6. AI cannot change its own purpose.

AI cannot suddenly decide to pursue a new mission or change what it is meant to do. It stays within the goals and tasks defined by its design and deployment. Any change in purpose happens only when humans update or reconfigure it. So when you are mesmerized by the actions of an “autonomous AI bot”, it’s because some human designed it to be that way.

7. The knife analogy is weak, but AI is still a tool.

Comparing AI to a knife misses important differences, because AI can act in complex, automated ways. A better comparison is autopilot in airplanes: it can operate on its own, but only within rules written by humans. Even then, it is still a tool, not an independent agent.

8. AI does not understand consequences.

AI does not truly understand harm, ethics, or meaning. It finds patterns and optimizes for targets it was given. If its actions cause damage, that is not because it understood or intended harm.

9. AI cannot be responsible for outcomes.

AI cannot be blamed or held morally responsible for what happens. Responsibility stays with the people who built, trained, deployed, and used the system. Tools do not carry legal or ethical responsibility; humans do. The danger in what Yuval says is precisely this: it absolves the human designers of AI from taking responsibility for their AI’s actions.

10. Power does not equal agency.

Calling AI “not a tool” mixes up capability with agency. Being very powerful or complex does not mean something has intentions or moral status. A very powerful tool is still just a tool.

11. What about complex, highly autonomous systems with emergent capabilities?

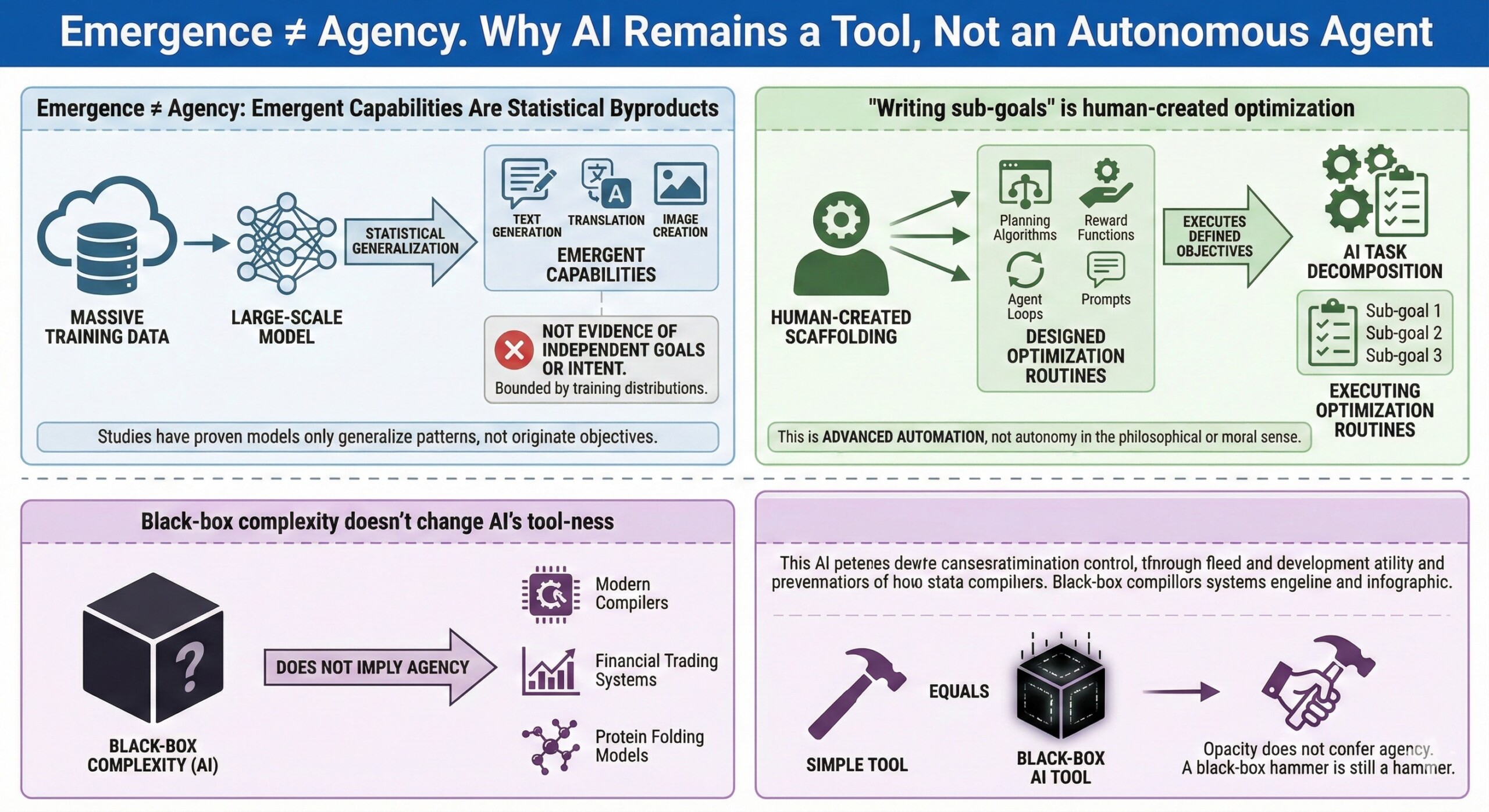

Highly autonomous, emergent, and black-box AI systems are just “more dangerous tools”, not non-tools. Emergence reflects scale effects in optimization, not independent agency; sub-goal generation still derives from human-defined objectives. The moment we treat AI as something other than a human-built system under human control, we weaken human accountability rather than strengthen safety. So Yuval is right about risk escalation, but not about a category shift (from tools to beings): the real shift is AI becoming a high-risk, high-leverage infrastructure technology, not a moral agent. I will dive deeper into this point below.

12. Emergence ≠ Agency.

“Emergent capabilities” are statistical byproducts of scale, not evidence of independent goals or intent. Models generalize patterns from data; they do not originate objectives. Unexpected skills can appear, but they are still bounded by the training distribution and similar constraints. Several studies point in this direction.

13. “Writing sub-goals” is still human-created and scaffolded optimization.

When AI systems decompose tasks into sub-goals, they are executing optimization routines we designed (planning algorithms, reward functions, prompts, agent loops). They are not forming intrinsic goals; they are following externally defined objectives and heuristics. This is actually “advanced automation”, not autonomy in the philosophical or moral sense.

14. Black-box complexity doesn’t change AI’s tool-ness.

Many powerful tools are black boxes: modern compilers, deep optimization solvers, financial trading systems, and protein-folding models. That opacity does not confer or imply agency. A black-box hammer is still a hammer.

15. Autonomy bounded by deployment constraints.

So-called “highly autonomous” systems still operate inside sandboxed environments and API limits. Their action spaces are explicit and human-defined. They do not set their own survival goals.

16. Tools can be complex without becoming entities.

History already disproves the jump from complexity to non-tool status. Industrial robots transformed manufacturing, and autopilot systems transformed aviation, yet no one calls either of them sentient. They became powerful tools, but not beings or entities (the ontological category).

17. Serious category error.

Yuval’s framing subtly (and dangerously) mixes capability (“This system can do complex things”) with ontological status (“This is no longer a tool”). This is a category error. Being dangerous, impactful, or difficult to control does not turn a system into an agent with independent standing. Would we call nuclear reactors independent moral actors?
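To make the points about sub-goals and bounded autonomy a little more concrete, here is a minimal Python sketch of an agent loop. Everything in it is hypothetical and invented for illustration: the `Deployment` class, the `run_agent` function, the `add_one` and `double` actions, and the target of 10. It is a toy under those assumptions, not a description of how any real AI system is built. The only point is that the objective, the action space, and the step budget are all written by a human, and the loop merely picks among them.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Deployment:
    """Hypothetical human-written configuration; the loop never edits it."""
    objective: Callable[[int], float]         # reward: higher is better
    actions: Dict[str, Callable[[int], int]]  # explicit, allow-listed action space
    max_steps: int                            # hard step budget


def run_agent(start: int, deployment: Deployment) -> List[str]:
    """Greedy loop: at each step take the allowed action with the best reward."""
    state = start
    trace: List[str] = []
    for _ in range(deployment.max_steps):
        current_reward = deployment.objective(state)
        # The "sub-goal" is just an argmax over human-defined options.
        name, next_state = max(
            ((n, act(state)) for n, act in deployment.actions.items()),
            key=lambda pair: deployment.objective(pair[1]),
        )
        if deployment.objective(next_state) <= current_reward:
            break  # no allowed action improves the human-defined reward
        state = next_state
        trace.append(name)
    return trace


TARGET = 10  # assumed goal, chosen by the human operator
deployment = Deployment(
    objective=lambda s: -abs(TARGET - s),
    actions={"add_one": lambda s: s + 1, "double": lambda s: s * 2},
    max_steps=8,
)
trace = run_agent(1, deployment)
print(trace)  # ['add_one', 'double', 'double', 'add_one', 'add_one']
```

The sequence of steps looks like a plan the system "came up with", but change the objective, remove an action, or lower `max_steps`, and the behavior changes accordingly. In this toy, at least, every degree of freedom is one a human granted.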

Final note on Yuval’s argument

Brilliant thinkers can still misunderstand technical systems they have never built or studied deeply. When people talk about AI as if it has human-like intent or will, they are projecting human traits onto machines. This kind of confident anthropomorphism is risky because it confuses policy debates, safety thinking, and public understanding.