AI Research vs Applied AI Careers

Academia, labs, startups, and enterprises

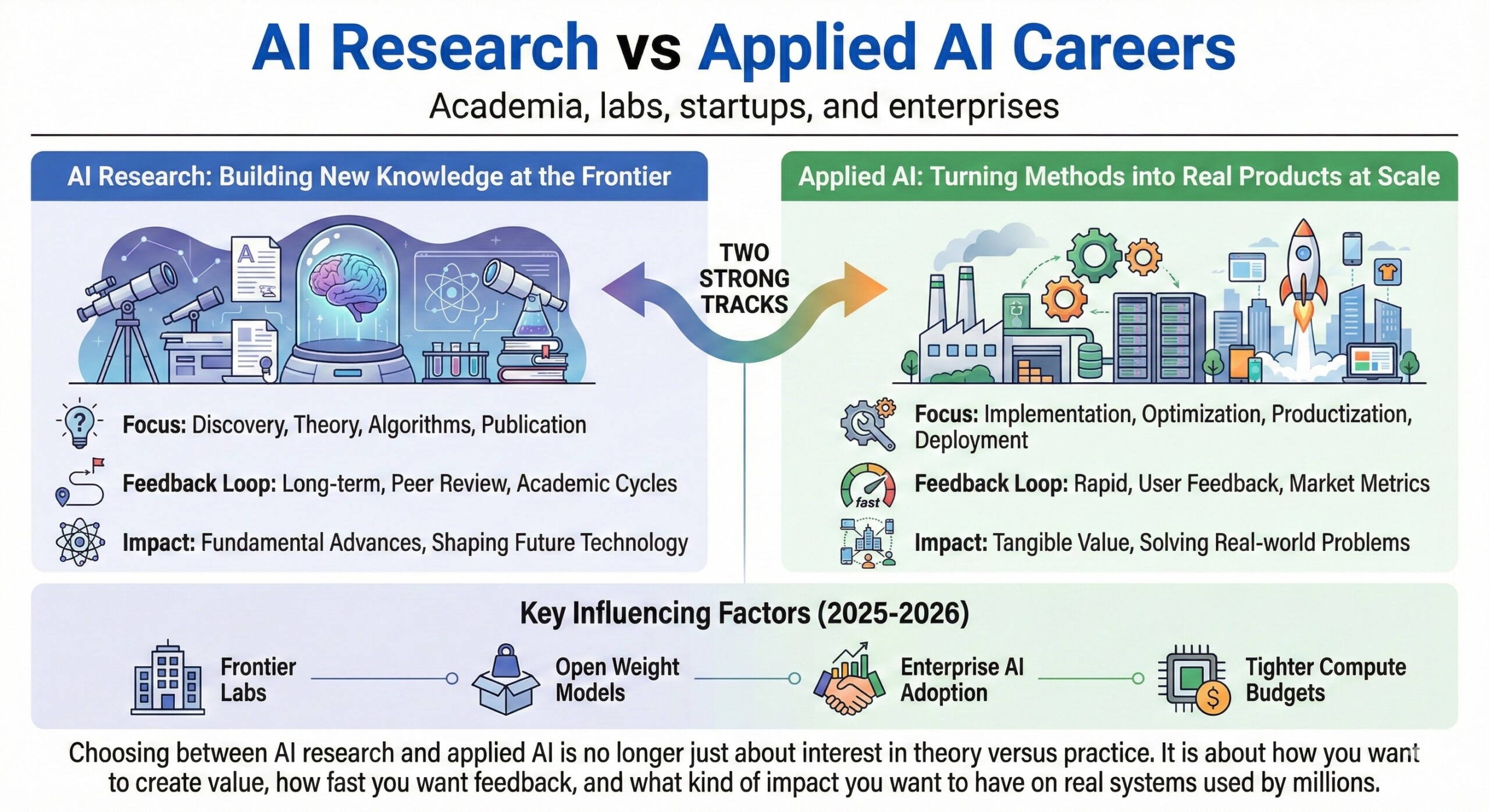

AI careers now split into two strong tracks. One builds new knowledge at the research frontier, and the other turns existing methods into real products at scale. Both paths are in demand, but they reward very different skills, temperaments, and levels of risk tolerance.

In 2025 and 2026, this split became sharper due to the rise of frontier labs, open-weight models, enterprise AI adoption, and tighter compute budgets. Choosing between AI research and applied AI is no longer just about interest in theory versus practice. It is about how you want to create value, how fast you want feedback, and what kind of impact you want to have on real systems used by millions.

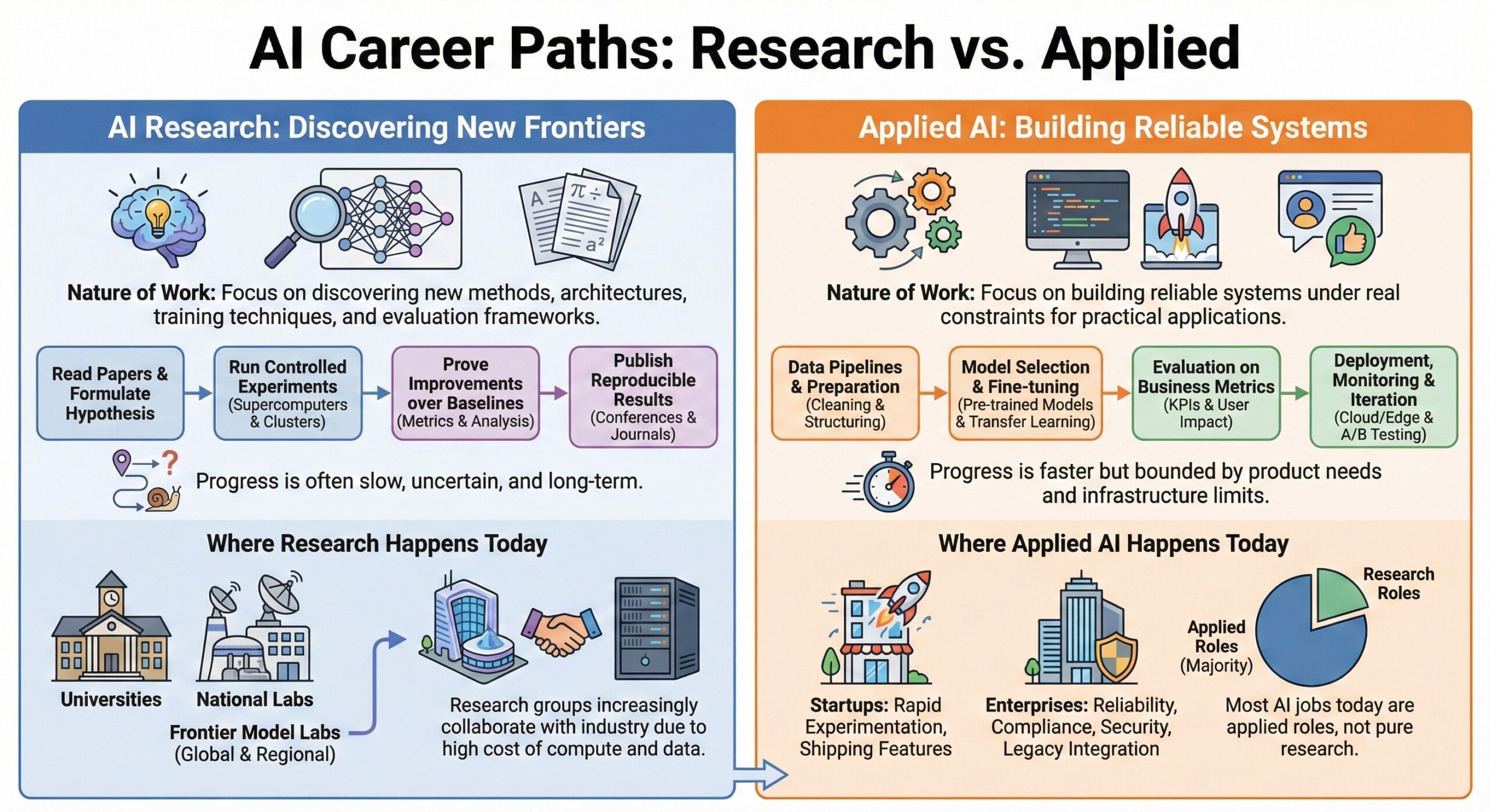

1. Nature of work and daily problem types

AI research focuses on discovering new methods, architectures, training techniques, or evaluation frameworks. The work involves reading papers, running controlled experiments, proving improvements over baselines, and publishing reproducible results. Progress can be slow and uncertain.
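To make "proving improvements over baselines" concrete, here is a minimal sketch of one common habit: comparing a new method against a baseline on matched random seeds instead of on a single run. The function name and the accuracy values are hypothetical and purely illustrative; real studies usually add a proper significance test on top of this.

```python
import statistics


def paired_improvement(baseline_scores, method_scores):
    """Return the mean and sample std dev of per-seed gains (method - baseline)."""
    if len(baseline_scores) != len(method_scores):
        raise ValueError("need one score per seed for both runs")
    if len(baseline_scores) < 2:
        raise ValueError("need at least two seeds to estimate spread")
    diffs = [m - b for b, m in zip(baseline_scores, method_scores)]
    return statistics.mean(diffs), statistics.stdev(diffs)


# Hypothetical accuracies from five matched seeds (illustrative numbers only).
baseline = [0.80, 0.82, 0.81, 0.79, 0.83]
method = [0.82, 0.83, 0.84, 0.80, 0.85]

mean_gain, spread = paired_improvement(baseline, method)
print(f"mean gain {mean_gain:.3f} +/- {spread:.3f} over {len(baseline)} seeds")
```

A gain that is small relative to its seed-to-seed spread is usually a sign that more runs are needed before claiming an improvement.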

Applied AI focuses on building reliable systems under real constraints. The work involves data pipelines, model selection, fine-tuning, evaluation on business metrics, deployment, monitoring, and iteration. Progress is faster but bounded by product needs and infrastructure limits.

2. Where the work happens today

Research careers cluster in universities, national labs, and frontier model labs. These include global labs and a growing number of regional labs focused on language, speech, and sovereign AI needs. Research groups increasingly collaborate with industry due to the high cost of compute and data.

Applied AI roles dominate startups and enterprises. Startups focus on rapid experimentation, shipping features, and finding product-market fit. Enterprises focus on reliability, compliance, security, and integration with legacy systems. Most AI jobs today are applied roles, not pure research.

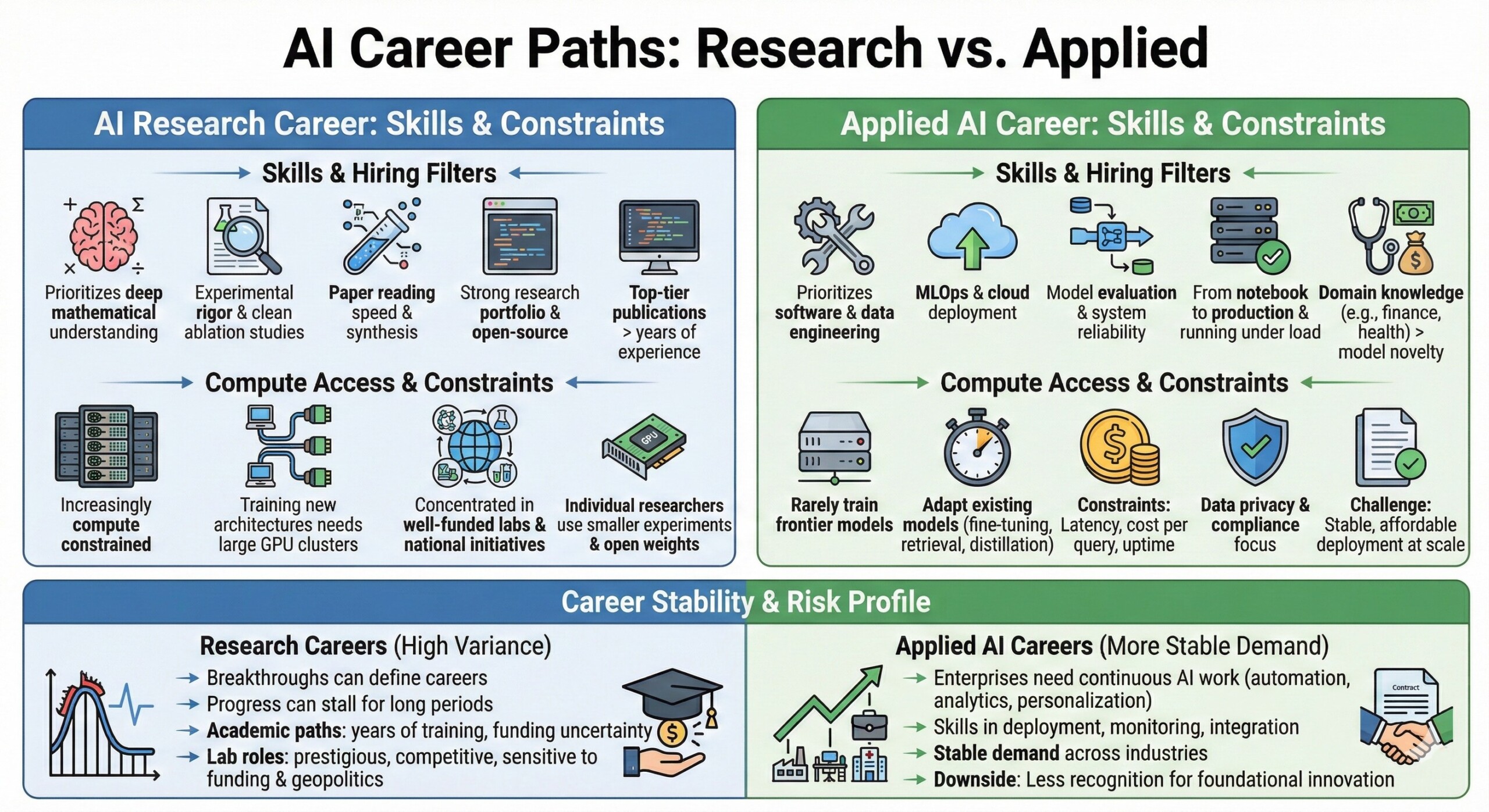

3. Skills and hiring filters

AI research hiring prioritizes deep mathematical understanding, experimental rigor, paper reading speed, and the ability to design clean ablation studies. A strong research portfolio, open-source contributions, or top-tier publications matter more than years of experience.

Applied AI hiring prioritizes software engineering, data engineering, MLOps, cloud deployment, model evaluation, and system reliability. Companies value people who can take a model from notebook to production and keep it running under load. Domain knowledge such as finance, health, or manufacturing often matters more than pure model novelty.

4. Compute access and practical constraints

Research today is increasingly compute-constrained. Training new architectures at scale requires access to large GPU clusters, optimized networking, and systems expertise. This has concentrated frontier research inside well-funded labs and national initiatives. Individual researchers rely more on smaller-scale experiments and open-weight models.

Applied AI teams rarely train frontier models. They adapt existing models using fine-tuning, retrieval, distillation, and prompt engineering. Their constraints are latency, cost per query, uptime, data privacy, and compliance. The technical challenge is not raw model quality but stable, affordable deployment at scale.
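Two of these constraints, cost per query and latency, are easy to put numbers on. The sketch below assumes a hosted model billed per 1,000 tokens and uses a nearest-rank 95th percentile for latency. The function names, token counts, and prices are hypothetical placeholders, not any vendor's actual rates.

```python
def cost_per_query(prompt_tokens, output_tokens, price_in_per_1k, price_out_per_1k):
    """Estimate the cost of one request under per-1,000-token pricing."""
    input_cost = prompt_tokens / 1000 * price_in_per_1k
    output_cost = output_tokens / 1000 * price_out_per_1k
    return input_cost + output_cost


def p95(latencies_ms):
    """Nearest-rank 95th percentile of a list of latencies."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)
    # Integer ceiling of 0.95 * n, avoiding floating-point rounding.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


# Hypothetical workload: 1,500 prompt tokens and 500 output tokens per request,
# with made-up prices per 1,000 tokens.
per_query = cost_per_query(1500, 500, price_in_per_1k=0.002, price_out_per_1k=0.006)
monthly = per_query * 50_000 * 30  # assumed 50,000 queries per day for 30 days
print(f"cost per query: {per_query:.4f}, monthly: {monthly:.2f}")

# Hypothetical latency samples in milliseconds.
samples = [120, 135, 110, 480, 150, 140, 125, 900, 130, 145]
print(f"p95 latency: {p95(samples)} ms")
```

Tracking a tail percentile instead of the average matters because a handful of slow requests can dominate the user experience while barely moving the mean.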

5. Career stability and risk profile

Research careers are high variance. Breakthroughs can define careers, but progress can stall for long periods. Academic paths require years of training and face funding uncertainty. Lab roles are prestigious but competitive and sensitive to shifts in funding cycles and geopolitics around AI.

Applied AI careers offer more stable demand. Enterprises need continuous applied AI work across automation, analytics, personalization, and copilots. Skills in deployment, monitoring, and integration remain valuable even as model families change. The downside is less recognition for foundational innovation.

6. Impact, visibility, and feedback loops

Research impact is indirect and delayed. A good paper may influence future models years later. Feedback comes from peer review, citations, and downstream adoption. The reward is shaping the field itself.

Applied AI impact is immediate and visible. Your system improves conversion, reduces cost, or catches fraud, or it fails in production. Feedback comes from users, metrics, and incident reports. The reward is seeing tangible value created quickly, even if the underlying methods are not novel.

7. How the two paths are converging

The boundary between research and applied AI is narrowing. Many applied teams now run controlled experiments, contribute to open source, and publish engineering research. Research labs increasingly care about deployment efficiency, inference cost, and real-world evaluation.

Hybrid roles are emerging. Examples include research engineers, applied scientists, and model infrastructure engineers. These roles blend research thinking with production discipline. For most professionals, the best long-term path is not choosing one forever, but building the ability to move between research depth and applied execution.

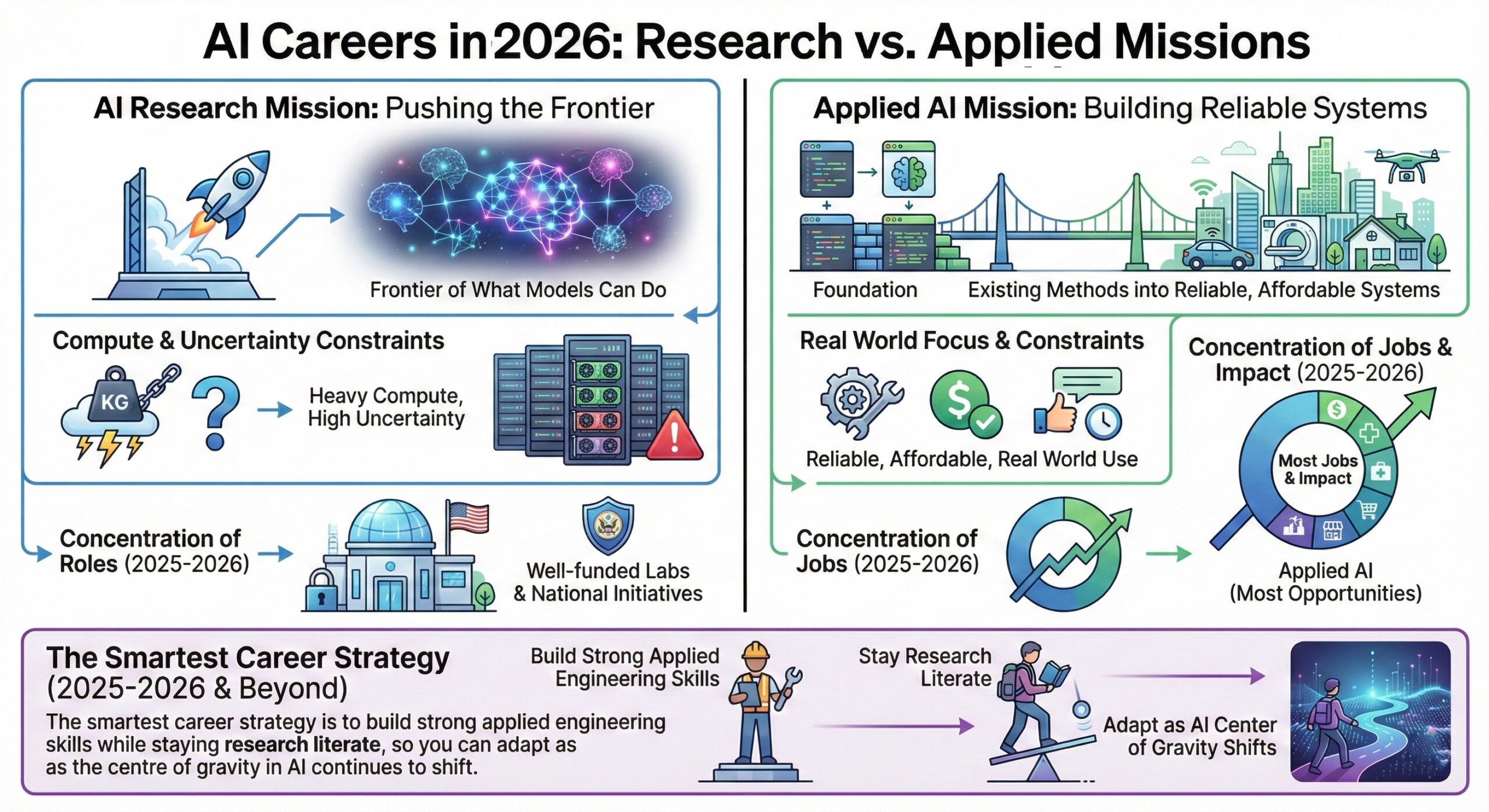

Summary

AI research and applied AI careers serve different missions. Research pushes the frontier of what models can do, under heavy compute constraints and high uncertainty. Applied AI turns existing methods into reliable, affordable systems used in the real world. In 2025 and 2026, most jobs and impact sit in applied AI, while frontier research is concentrated in well-funded labs and national initiatives. The smartest career strategy is to build strong applied engineering skills while staying research literate, so you can adapt as the centre of gravity in AI continues to shift.