Human-in-the-Loop (HITL) & AI Oversight Roles – Human judgment in AI decision systems

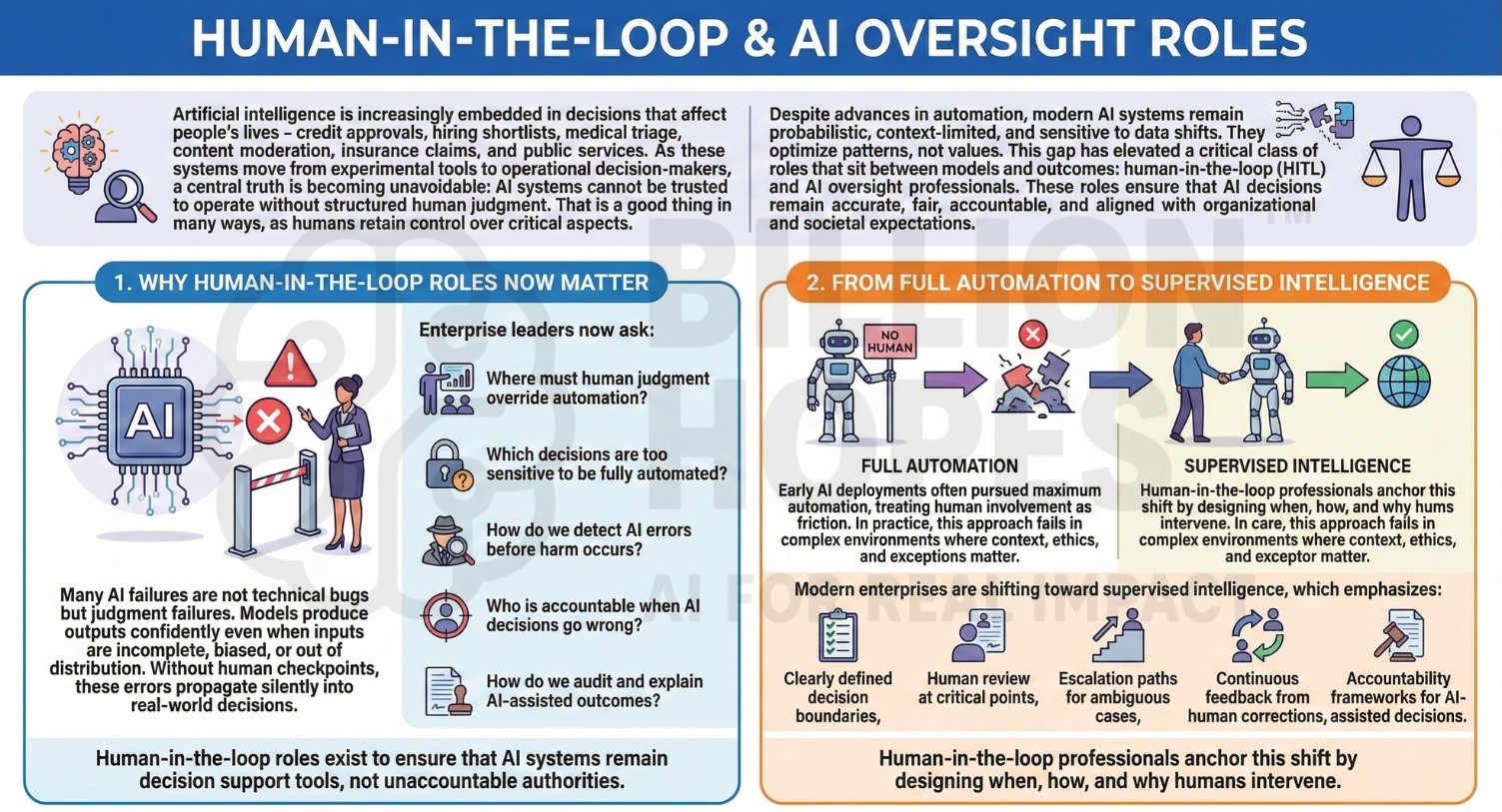

Artificial intelligence is increasingly embedded in decisions that affect people’s lives – credit approvals, hiring shortlists, medical triage, content moderation, insurance claims, and public services. As these systems move from experimental tools to operational decision-makers, one point is becoming unavoidable: AI systems cannot be trusted to operate without structured human judgment. In many ways that is a good thing, because it keeps humans in control of the most critical decisions.

Despite advances in automation, modern AI systems remain probabilistic, context-limited, and sensitive to data shifts. They optimize patterns, not values. This gap has elevated a critical class of roles that sit between models and outcomes: human-in-the-loop (HITL) and AI oversight professionals. These roles ensure that AI decisions remain accurate, fair, accountable, and aligned with organizational and societal expectations.

1. Why human-in-the-loop roles now matter

Many AI failures are not technical bugs but judgment failures. Models produce outputs confidently even when inputs are incomplete, biased, or out of distribution. Without human checkpoints, these errors propagate silently into real-world decisions.

Enterprise leaders now ask:

- Where must human judgment override automation?

- Which decisions are too sensitive to be fully automated?

- How do we detect AI errors before harm occurs?

- Who is accountable when AI decisions go wrong?

- How do we audit and explain AI-assisted outcomes?

Human-in-the-loop roles exist to ensure that AI systems remain decision support tools, not unaccountable authorities.

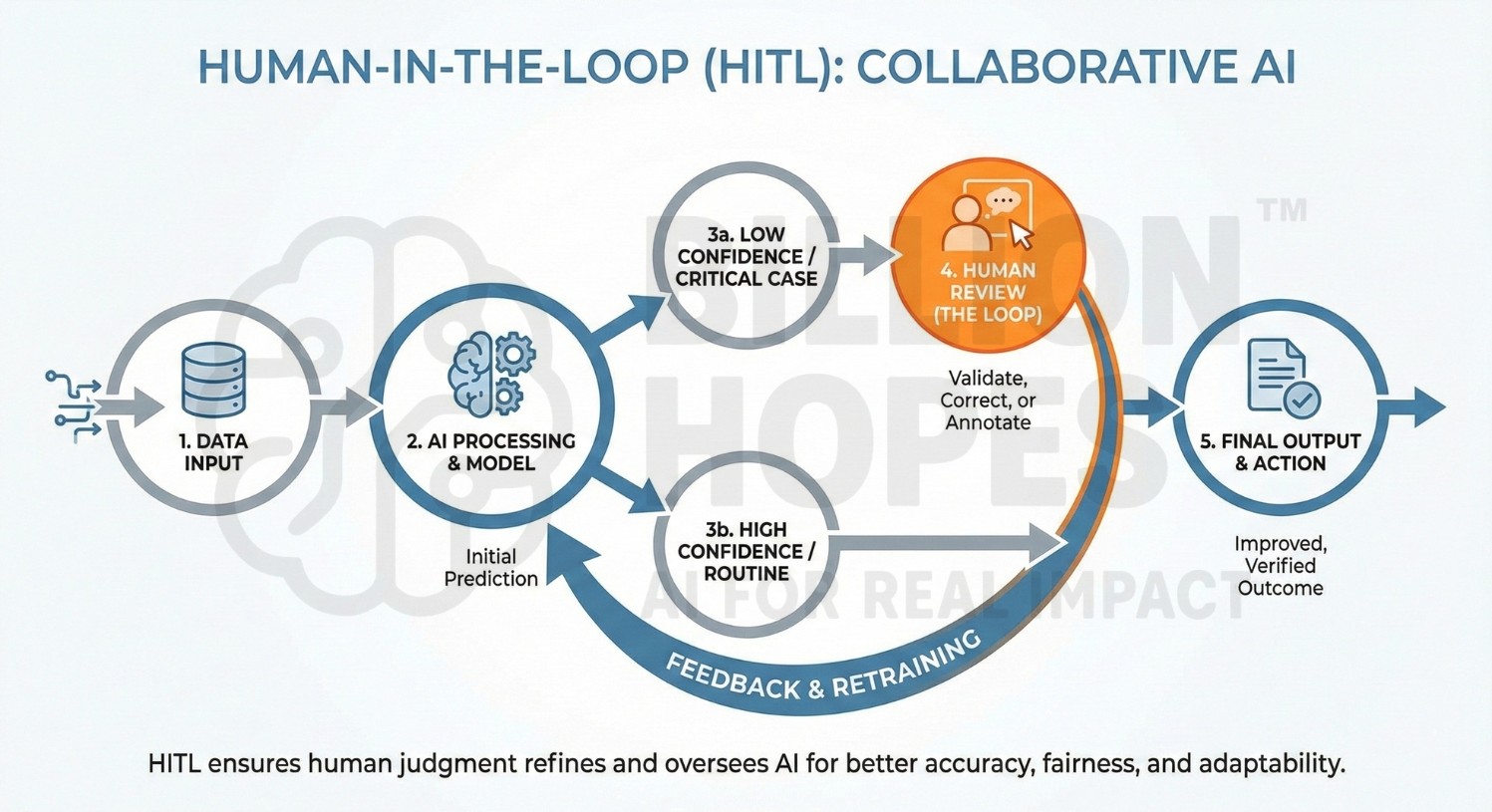

2. From full automation to supervised intelligence

Early AI deployments often pursued maximum automation, treating human involvement as friction. In practice, this approach fails in complex environments where context, ethics, and exceptions matter.

Modern enterprises are shifting toward supervised intelligence, which emphasizes:

- Clearly defined decision boundaries,

- Human review at critical points,

- Escalation paths for ambiguous cases,

- Continuous feedback from human corrections,

- Accountability frameworks for AI-assisted decisions.

Human-in-the-loop professionals anchor this shift by designing when, how, and why humans intervene.

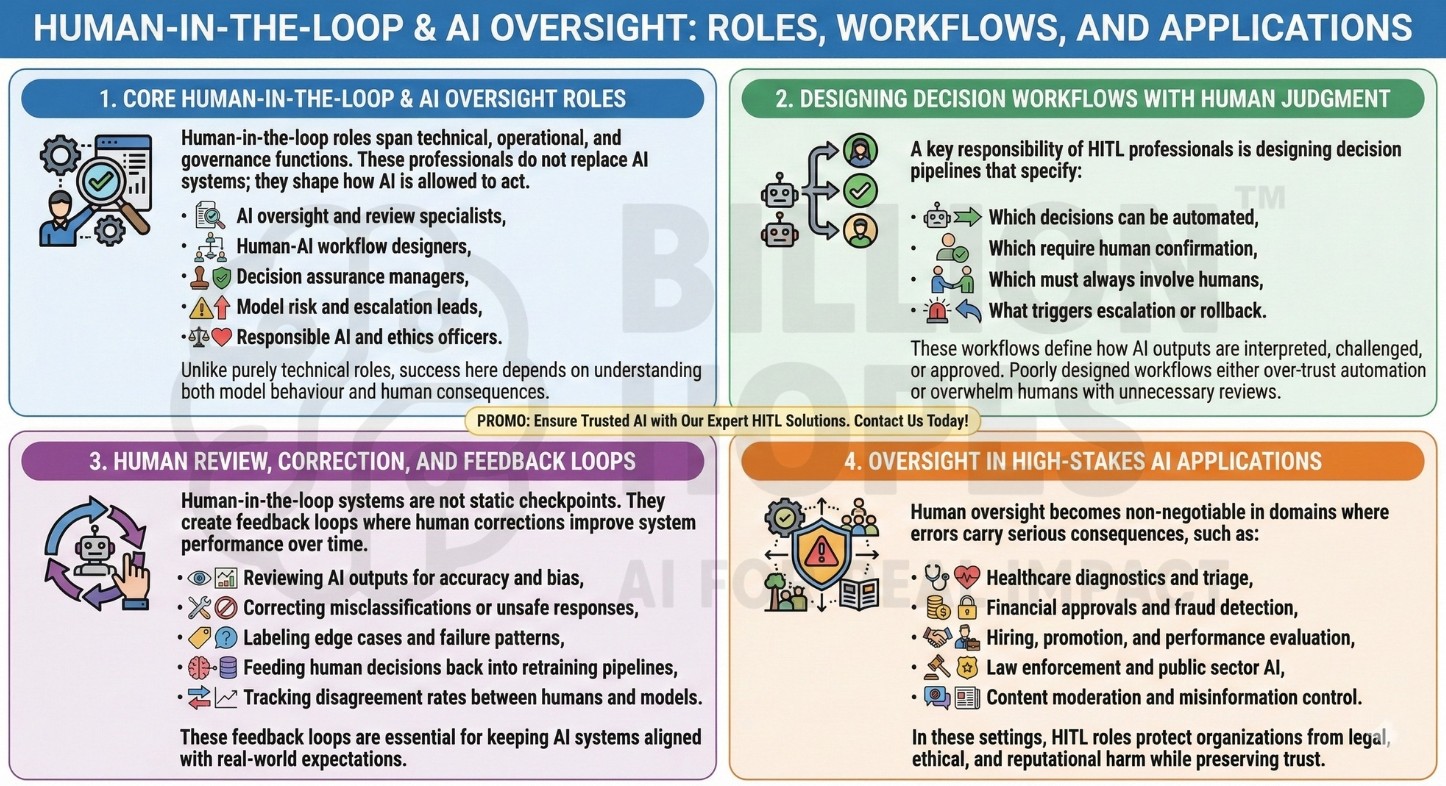

3. Core human-in-the-loop and AI oversight roles

Human-in-the-loop roles span technical, operational, and governance functions. These professionals do not replace AI systems; they shape how AI is allowed to act.

They may work as:

- AI oversight and review specialists,

- Human-AI workflow designers,

- Decision assurance managers,

- Model risk and escalation leads,

- Responsible AI and ethics officers.

Unlike purely technical roles, success here depends on understanding both model behaviour and human consequences.

4. Designing decision workflows with human judgment

A key responsibility of HITL professionals is designing decision pipelines that specify:

- Which decisions can be automated,

- Which require human confirmation,

- Which must always involve humans,

- What triggers escalation or rollback.

These workflows define how AI outputs are interpreted, challenged, or approved. Poorly designed workflows either over-trust automation or overwhelm humans with unnecessary reviews.
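The four routing questions above can be made concrete with a small sketch. The category names, thresholds, and the `in_distribution` flag below are illustrative assumptions, not values from any particular system; a real policy would be set by the organization's own risk owners.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"    # decision can be automated
    HUMAN_CONFIRM = "human_confirm"  # a human must confirm the AI output
    HUMAN_ONLY = "human_only"        # a human always makes the decision
    ESCALATE = "escalate"            # ambiguous or unusual case


@dataclass(frozen=True)
class Decision:
    category: str                 # hypothetical label, e.g. "small_refund"
    confidence: float             # model-reported confidence in [0, 1]
    in_distribution: bool = True  # False if an upstream check flagged the input


# Assumed policy values, chosen only for illustration.
ALWAYS_HUMAN = {"loan_denial", "medical_triage"}
AUTO_THRESHOLD = 0.95
CONFIRM_THRESHOLD = 0.70


def route(decision: Decision) -> Route:
    """Map one AI output to a review path."""
    if not decision.in_distribution:
        return Route.ESCALATE
    if decision.category in ALWAYS_HUMAN:
        return Route.HUMAN_ONLY
    if decision.confidence >= AUTO_THRESHOLD:
        return Route.AUTO_APPROVE
    if decision.confidence >= CONFIRM_THRESHOLD:
        return Route.HUMAN_CONFIRM
    return Route.ESCALATE
```

Checking the out-of-distribution flag first is a deliberate choice in this sketch: a high confidence score on unfamiliar input is exactly the case where confidence should not be trusted.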

5. Human review, correction, and feedback loops

Human-in-the-loop systems are not static checkpoints. They create feedback loops where human corrections improve system performance over time.

Key activities include:

- Reviewing AI outputs for accuracy and bias,

- Correcting misclassifications or unsafe responses,

- Labeling edge cases and failure patterns,

- Feeding human decisions back into retraining pipelines,

- Tracking disagreement rates between humans and models.

These feedback loops are essential for keeping AI systems aligned with real-world expectations.
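As a rough illustration of the last two activities, the sketch below computes a human-model disagreement rate and collects the corrected cases that could be handed to a retraining pipeline. The `Review` record and both function names are hypothetical; real review tools store far richer data.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Review:
    item_id: str
    model_label: str  # what the model predicted
    human_label: str  # what the reviewer decided


def disagreement_rate(reviews: Sequence[Review]) -> float:
    """Fraction of reviewed items where the human overrode the model."""
    if not reviews:
        return 0.0
    overrides = sum(1 for r in reviews if r.model_label != r.human_label)
    return overrides / len(reviews)


def corrections_for_retraining(reviews: Sequence[Review]) -> List[Review]:
    """Items where the human label differs; candidates for relabeled training data."""
    return [r for r in reviews if r.model_label != r.human_label]
```

A rate near zero is not automatically good news: it can mean the model is accurate, or that reviewers are approving outputs without scrutiny, so it is best read alongside spot checks.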

6. Oversight in high-stakes AI applications

Human oversight becomes non-negotiable in domains where errors carry serious consequences, such as:

- Healthcare diagnostics and triage,

- Financial approvals and fraud detection,

- Hiring, promotion, and performance evaluation,

- Law enforcement and public sector AI,

- Content moderation and misinformation control.

In these settings, HITL roles protect organizations from legal, ethical, and reputational harm while preserving trust.

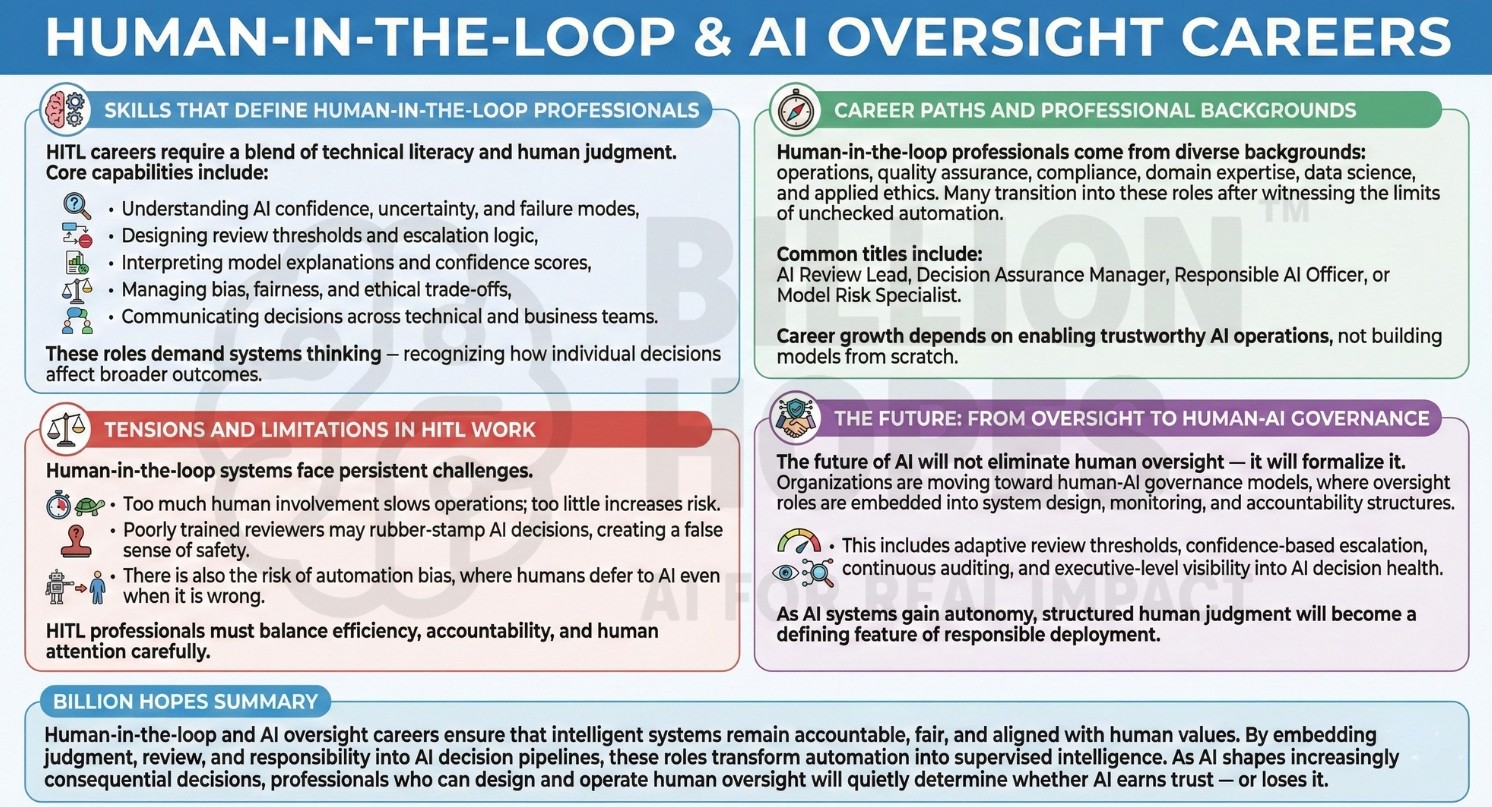

7. Skills that define human-in-the-loop professionals

HITL careers require a blend of technical literacy and human judgment.

Core capabilities include:

- Understanding AI confidence, uncertainty, and failure modes,

- Designing review thresholds and escalation logic,

- Interpreting model explanations and confidence scores,

- Managing bias, fairness, and ethical trade-offs,

- Communicating decisions across technical and business teams.

These roles demand systems thinking – recognizing how individual decisions affect broader outcomes.

8. Career paths and professional backgrounds

Human-in-the-loop professionals come from diverse backgrounds: operations, quality assurance, compliance, domain expertise, data science, and applied ethics. Many transition into these roles after witnessing the limits of unchecked automation.

Common titles include AI Review Lead, Decision Assurance Manager, Responsible AI Officer, or Model Risk Specialist. Career growth depends on enabling trustworthy AI operations, not building models from scratch.

9. Tensions and limitations in HITL work

Human-in-the-loop systems face persistent challenges. Too much human involvement slows operations; too little increases risk. Poorly trained reviewers may rubber-stamp AI decisions, creating a false sense of safety.

There is also the risk of automation bias, where humans defer to AI even when it is wrong. HITL professionals must balance efficiency, accountability, and human attention carefully.

10. The future: From oversight to human-AI governance

The future of AI will not eliminate human oversight – it will formalize it. Organizations are moving toward human-AI governance models, where oversight roles are embedded into system design, monitoring, and accountability structures.

This includes adaptive review thresholds, confidence-based escalation, continuous auditing, and executive-level visibility into AI decision health. As AI systems gain autonomy, structured human judgment will become a defining feature of responsible deployment.
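One possible reading of "adaptive review thresholds" and "confidence-based escalation" is sketched below: the confidence cut-off for automatic handling moves up when reviewers disagree with the model more often than a target rate, and down when they disagree less. The function names, step size, target rate, and bounds are all assumptions made for illustration, not an established method.

```python
def adapt_threshold(
    current: float,
    observed_disagreement: float,
    target_disagreement: float = 0.05,  # assumed acceptable override rate
    step: float = 0.01,                 # assumed adjustment per review window
    lower: float = 0.80,                # never automate below this confidence
    upper: float = 0.99,                # never demand more than this
) -> float:
    """Return the auto-handling threshold for the next review window."""
    if observed_disagreement > target_disagreement:
        proposed = current + step  # humans override often: review more
    elif observed_disagreement < target_disagreement:
        proposed = current - step  # humans rarely override: review less
    else:
        proposed = current
    # Clamp to the allowed band and round away floating-point drift.
    return round(min(upper, max(lower, proposed)), 4)


def needs_human_review(confidence: float, threshold: float) -> bool:
    """Confidence-based escalation: anything under the threshold goes to a person."""
    return confidence < threshold
```

The hard lower bound matters in this design: without it, a long run of agreement would keep lowering the threshold until very little reached a human at all.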

Billion Hopes summary

Human-in-the-loop and AI oversight careers ensure that intelligent systems remain accountable, fair, and aligned with human values. By embedding judgment, review, and responsibility into AI decision pipelines, these roles transform automation into supervised intelligence. As AI shapes increasingly consequential decisions, professionals who can design and operate human oversight will quietly determine whether AI earns trust – or loses it.