India’s LLM strategy is now actually an SLM (small language model) strategy

1. Introduction

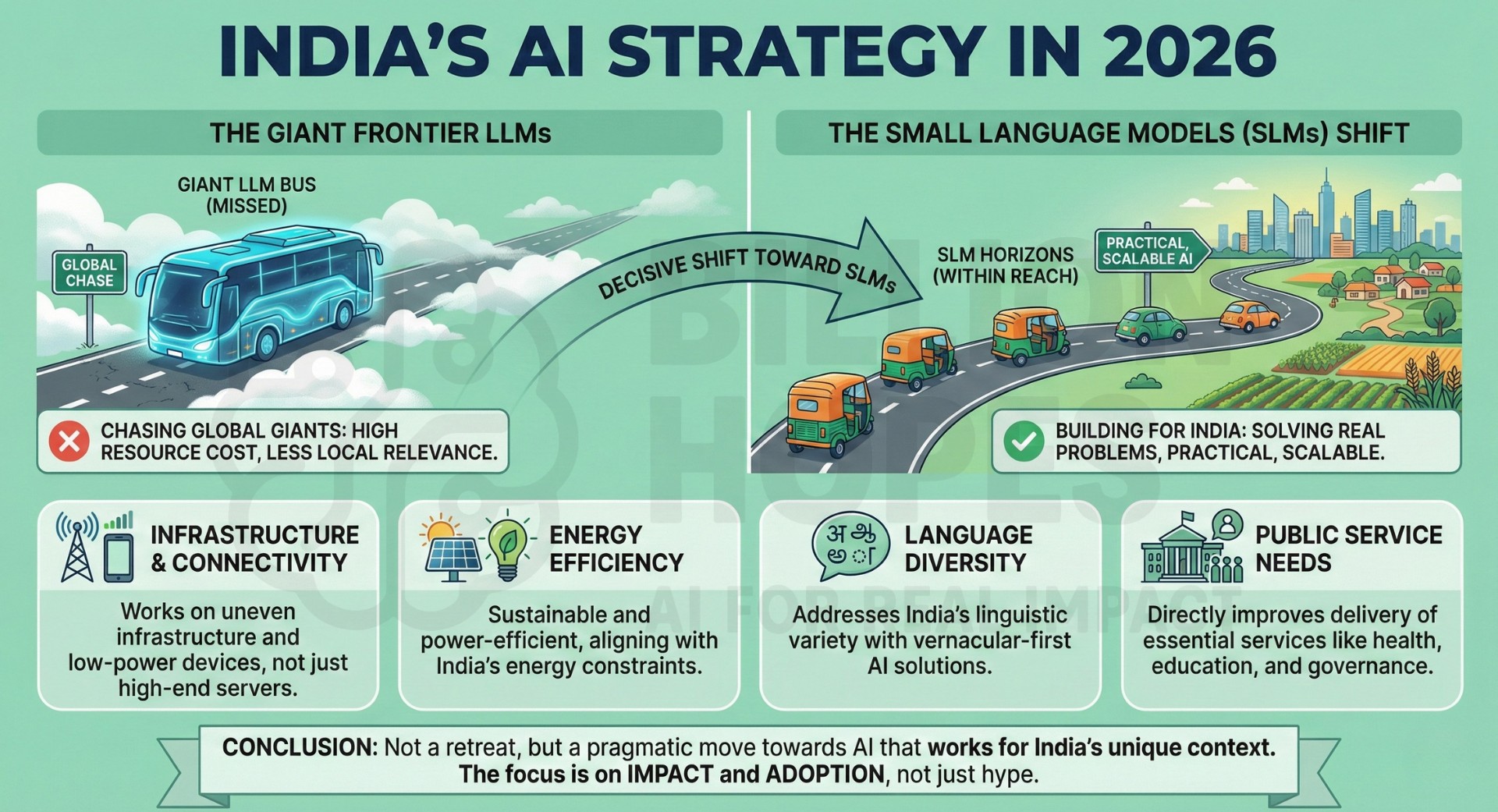

India’s AI strategy in 2026 is quietly but decisively shifting away from chasing giant frontier LLMs and toward building Small Language Models (SLMs) that solve real Indian problems. This is not a retreat from ambition, but a move toward practical, scalable AI that works within India’s infrastructure and energy constraints and serves its language diversity and public service needs. India may have missed the LLM bus, but the SLM horizon is within reach.

Ready to dive into this new world of application-oriented AI? Read on!

2. Fifteen points on India’s SLM strategy

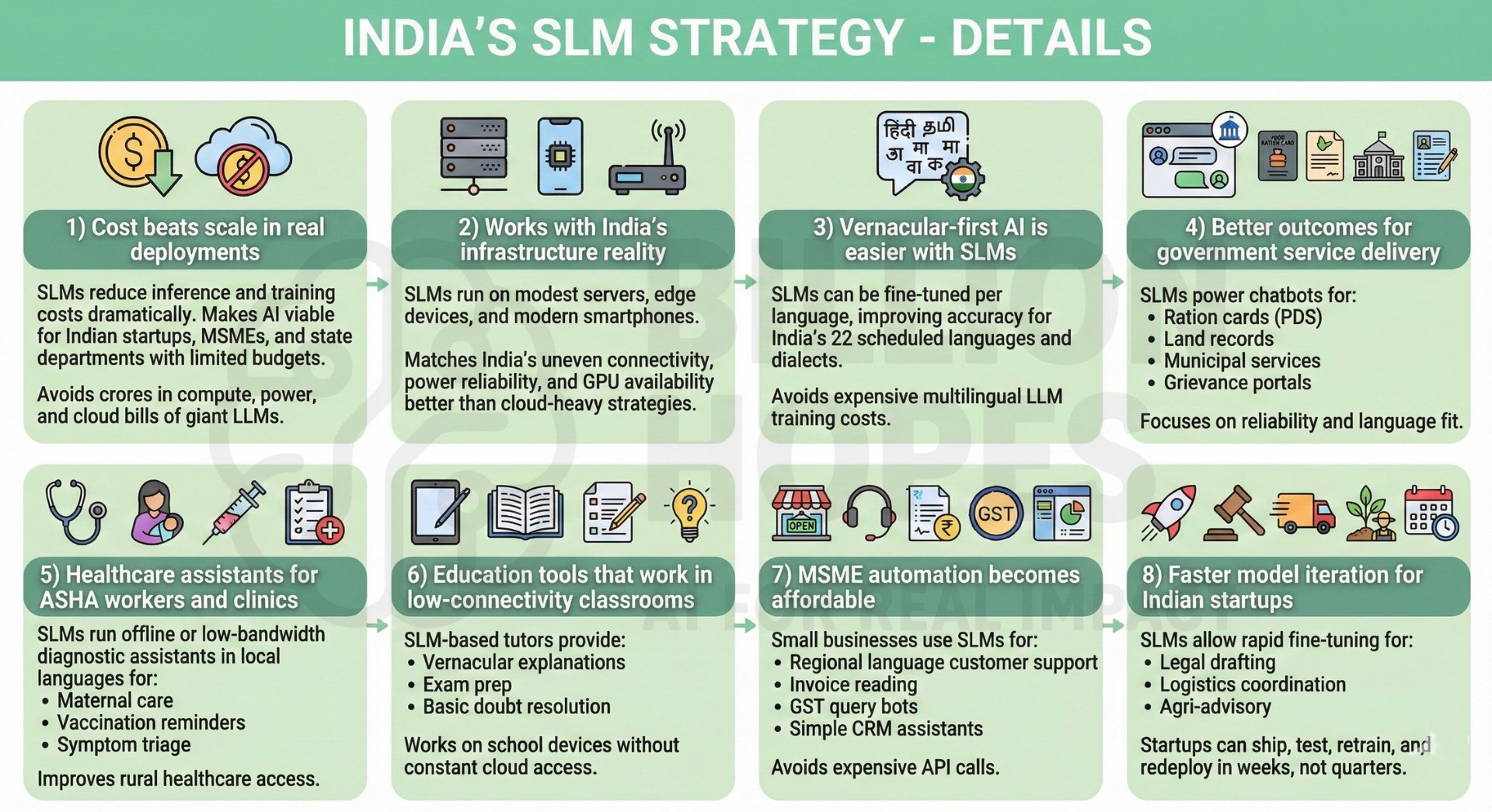

1) Cost beats scale in real deployments – Training and running giant LLMs costs crores in compute, power, and cloud bills. SLMs reduce inference and training costs dramatically, making AI viable for Indian startups, MSMEs, and state departments with limited budgets.

2) Works with India’s infrastructure reality – SLMs can run on modest servers, edge devices, and even modern smartphones. This matches India’s uneven connectivity, power reliability, and GPU availability far better than cloud-heavy LLM strategies.
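To make the "modest hardware" point concrete, here is a rough back-of-the-envelope sketch. It estimates only the memory needed to hold a model's weights (parameter count times bits per weight) and compares it with a device's RAM. The function names, the example model sizes, and the `headroom` fraction are illustrative assumptions, not figures from any specific Indian deployment, and the estimate ignores activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 10**9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


def fits_on_device(num_params: float, bits_per_weight: int,
                   device_ram_gb: float, headroom: float = 0.5) -> bool:
    """Check whether the weights fit in a share of device RAM.

    headroom is an assumed fraction of RAM available to the model;
    the rest is left for the OS, other apps, and runtime buffers.
    """
    budget_gb = device_ram_gb * headroom
    return weight_memory_gb(num_params, bits_per_weight) <= budget_gb


if __name__ == "__main__":
    # Illustrative sizes only: a 3-billion-parameter model quantized to 4 bits
    # versus a 70-billion-parameter model stored at 16 bits.
    for name, params, bits in [("small, 4-bit", 3e9, 4), ("large, 16-bit", 70e9, 16)]:
        gb = weight_memory_gb(params, bits)
        ok = fits_on_device(params, bits, device_ram_gb=8)
        print(f"{name}: ~{gb:.1f} GB of weights, fits in an 8 GB device: {ok}")
```

Under these assumptions the small quantized model needs about 1.5 GB for its weights and fits within half of an 8 GB phone's memory, while the large model needs about 140 GB and does not. Real deployments would need to measure actual memory use rather than rely on this estimate.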

3) Vernacular-first AI is easier with SLMs – India’s 22 scheduled languages and dialect diversity make multilingual LLM training extremely expensive. SLMs can be fine-tuned per language, improving accuracy for Hindi, Tamil, Telugu, Marathi, Bengali, and regional variants.
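One way to picture per-language fine-tuning in practice is a small router that sends each request to a language-specific model and falls back to a shared one otherwise. The sketch below is only an illustration: the model names in `LANGUAGE_MODELS` and the `FALLBACK_MODEL` are hypothetical placeholders, and the language codes are standard ISO 639-1 codes for the languages named above.

```python
# Hypothetical registry: ISO 639-1 language code -> fine-tuned model name.
# These names are placeholders, not real published models.
LANGUAGE_MODELS = {
    "hi": "slm-hindi",
    "ta": "slm-tamil",
    "te": "slm-telugu",
    "mr": "slm-marathi",
    "bn": "slm-bengali",
}

# Assumed shared model used when no language-specific variant exists.
FALLBACK_MODEL = "slm-multilingual-base"


def pick_model(lang_tag: str) -> str:
    """Return the model for a language tag such as 'ta' or 'ta-IN'."""
    # Keep only the primary language subtag and normalize its case.
    primary = lang_tag.strip().lower().split("-")[0]
    return LANGUAGE_MODELS.get(primary, FALLBACK_MODEL)


if __name__ == "__main__":
    for tag in ["hi", "ta-IN", "BN", "kok"]:
        print(tag, "->", pick_model(tag))
```

The design choice here is that adding a new language or regional variant means adding one registry entry and one fine-tuned model, without retraining anything else.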

4) Better outcomes for government service delivery – SLMs can power chatbots for:

- ration cards (PDS)

- land records

- municipal services

- grievance portals

These systems need reliability and language fit more than creative general intelligence.

5) Healthcare assistants for ASHA workers and clinics – SLMs can power diagnostic assistants that run offline or over low-bandwidth connections, in local languages, for:

- maternal care

- vaccination reminders

- symptom triage

This directly improves rural healthcare access.

6) Education tools that work in low-connectivity classrooms – SLM-based tutors can run on school devices to provide:

- vernacular explanations

- exam prep

- basic doubt resolution

without requiring constant cloud access.

7) MSME automation becomes affordable – Small businesses can use SLMs for:

- customer support in regional languages

- invoice reading

- GST query bots

- simple CRM assistants

without paying for expensive API calls to global LLMs.

8) Faster model iteration for Indian startups – SLMs allow rapid fine-tuning for:

- legal drafting

- logistics coordination

- agri-advisory

Startups can ship, test, retrain, and redeploy in weeks, not quarters.

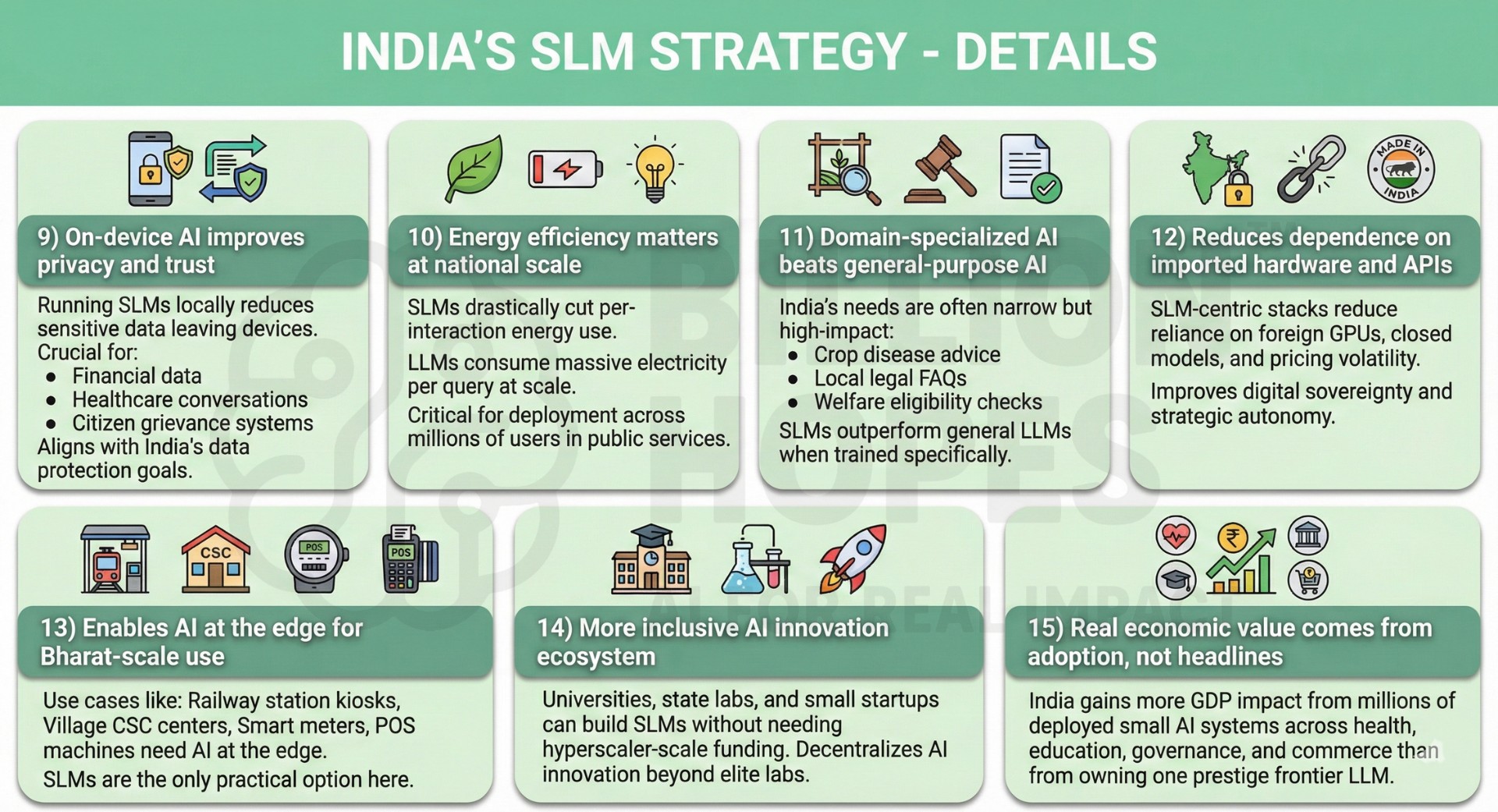

9) On-device AI improves privacy and trust – Running SLMs locally reduces the amount of sensitive data that leaves the device. This is crucial for:

- financial data

- healthcare conversations

- citizen grievance systems

and aligns with India’s data protection goals.

10) Energy efficiency matters at national scale – Large LLMs consume far more electricity per query than small models, and the difference compounds at scale. SLMs cut per-interaction energy use substantially, which matters when AI is deployed across millions of users in public services.

11) Domain-specialized AI beats general-purpose AI – India’s needs are often narrow but high-impact:

- crop disease advice

- local legal FAQs

- welfare eligibility checks

SLMs trained specifically for these tasks can outperform general-purpose LLMs on them.

12) Reduces dependence on imported hardware and APIs – SLM-centric stacks reduce reliance on foreign GPUs, closed models, and pricing volatility. This improves digital sovereignty and strategic autonomy.

13) Enables AI at the edge for Bharat-scale use – Use cases like:

- railway station kiosks

- village Common Service Centres (CSCs)

- smart meters

- POS machines

need AI at the edge. SLMs are often the most practical option here.

14) More inclusive AI innovation ecosystem – Universities, state labs, and small startups can build SLMs without needing hyperscaler-scale funding. This decentralizes AI innovation beyond a few elite labs.

15) Real economic value comes from adoption, not headlines – India stands to gain more economic value from millions of small AI systems deployed across health, education, governance, and commerce than from owning one prestige frontier LLM that few can afford to use.

3. Conclusion

India’s AI strategy is not about losing the LLM race – it’s about choosing the right race. Small Language Models deliver practical value where India actually needs AI: villages, classrooms, clinics, MSMEs, and public services. By prioritizing affordability, multilingual relevance, energy efficiency, and deployability, India is building AI that scales across people, not just parameters.