Diffusion Models & Generative Modeling Theory

Underlying physics and stochastic differential equations powering image, video, and audio generation

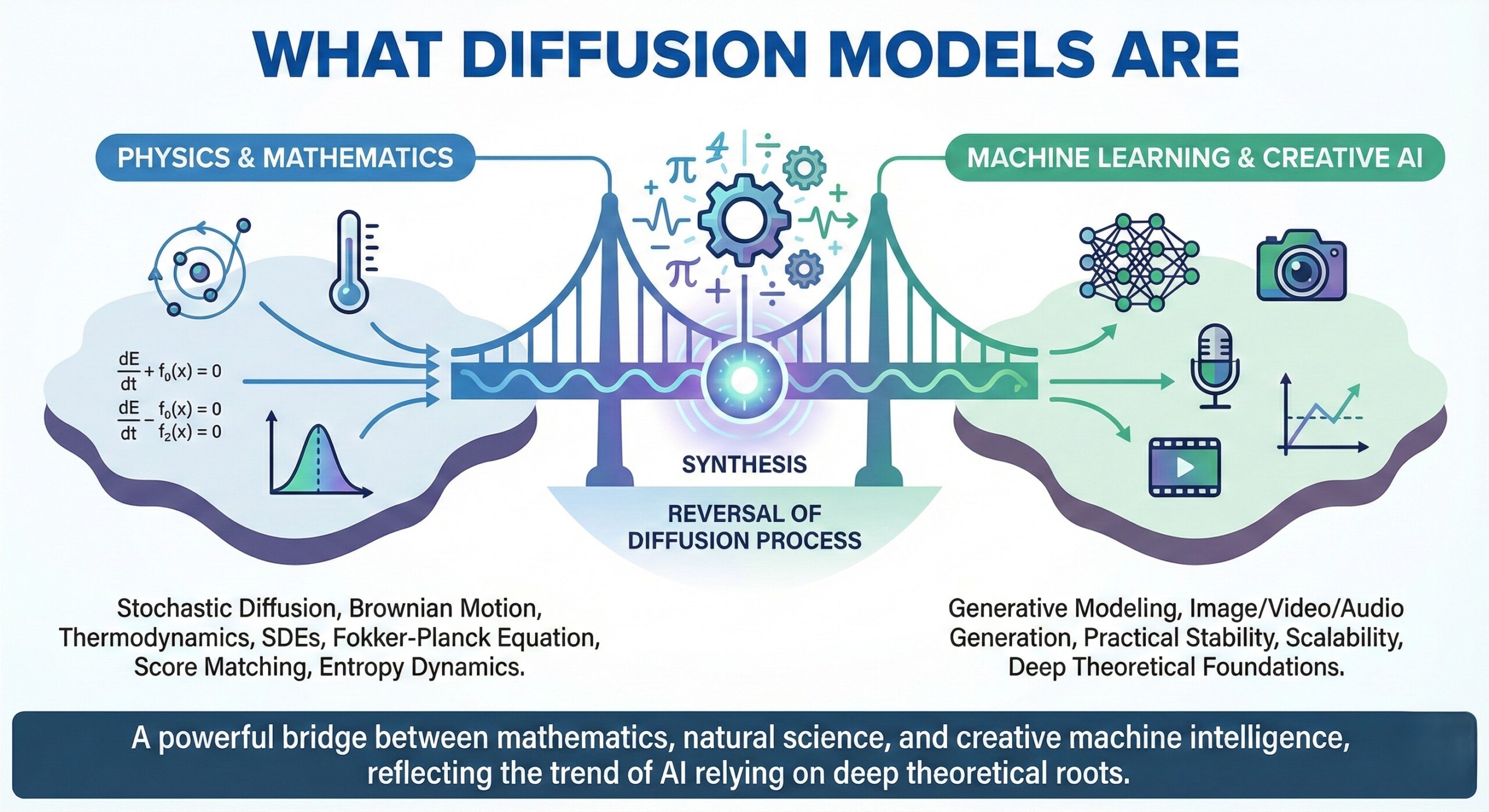

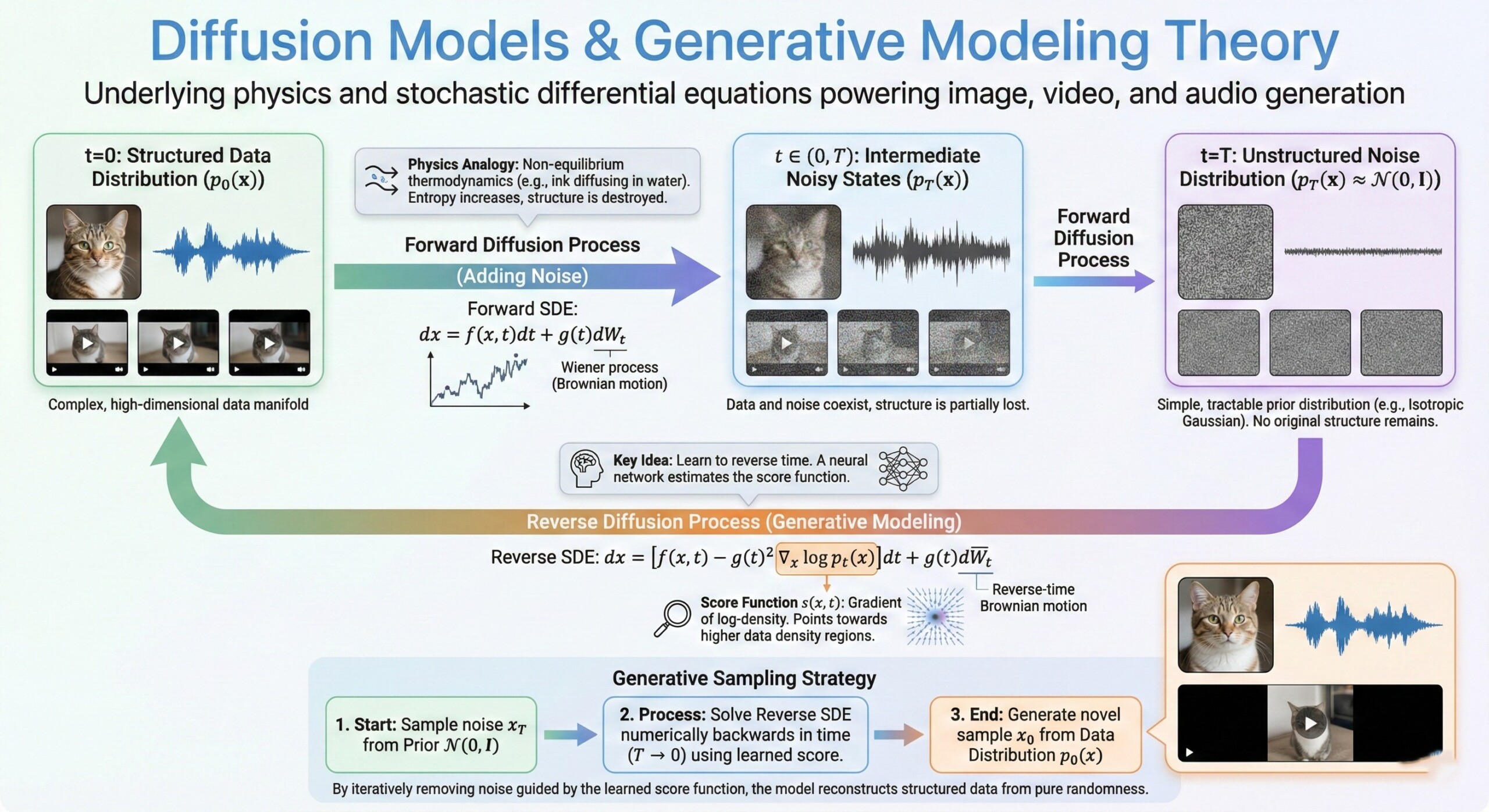

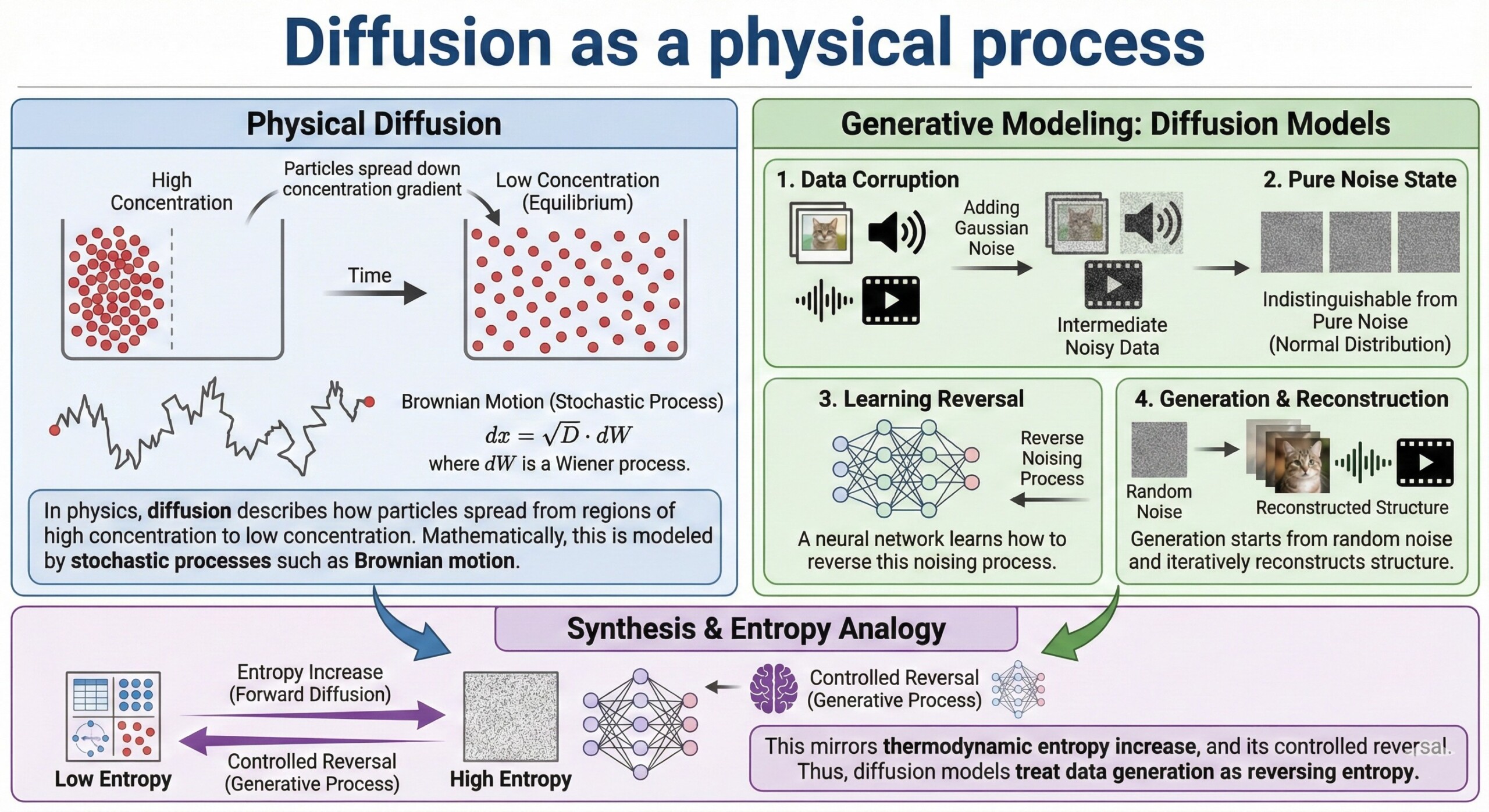

Diffusion models have emerged as one of the most powerful frameworks in modern generative AI. They power state-of-the-art systems such as OpenAI’s DALL·E 2, Stability AI’s Stable Diffusion, and Runway’s Gen-2. Unlike earlier generative approaches such as GANs (generative adversarial networks), diffusion models are grounded in mathematical principles drawn from thermodynamics, stochastic processes, and differential equations. At their core, they simulate the physical process of diffusion: structured data is gradually transformed into noise, and a model then learns how to reverse that process.

What makes diffusion models especially elegant is that they bridge physics and machine learning. The same mathematical tools used to model heat flow, particle motion, and Brownian dynamics are now used to generate high-resolution images, coherent videos, and realistic audio waveforms. This article explores the theoretical backbone of diffusion models – focusing on stochastic differential equations (SDEs), probability flows, entropy, and score matching – and explains how these ideas enable machines to generate rich, structured media.

1. Diffusion as a physical process

In physics, diffusion describes how particles spread from regions of high concentration to low concentration. Mathematically, this is modeled by stochastic processes such as Brownian motion.

In generative modeling:

- Data (image, audio, video) is gradually corrupted by adding Gaussian noise.

- After many small steps, the data becomes indistinguishable from pure noise.

- A neural network learns how to reverse this noising process.

- Generation starts from random noise and iteratively reconstructs structure.

- This mirrors thermodynamic entropy increase, and its controlled reversal.

Thus, diffusion models treat data generation as the learned reversal of an entropy-increasing process.

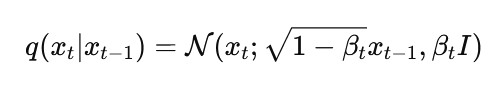

2. The Forward Process: adding noise (Markov Chain)

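The forward process is a fixed Markov chain that progressively adds Gaussian noise. In the standard DDPM formulation, each transition is:

$$q(x_t \mid x_{t-1}) = \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right)$$

Here:

- $\beta_t$ controls how much noise is added at step $t$.

- After many steps (typically T ≈ 1000), the data becomes nearly Gaussian.

- The process is analytically tractable: $x_t$ can be sampled directly from $x_0$ in closed form.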

- It does not require learning.

- It defines the probabilistic path from structure → chaos.

This step ensures a well-defined transformation from real data to noise.
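The forward chain can be sketched in a few lines of plain Python. This is a minimal illustration on a single scalar rather than an image, and it assumes a linear schedule from 1e-4 to 0.02 over 1000 steps (a common choice, but not one stated in this article). The helper names are hypothetical. It shows both the step-by-step Markov chain and the closed-form jump to an arbitrary step.

```python
import math
import random

def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Assumed linear noise schedule: beta_t for t = 1..T (stored 0-indexed)."""
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def cumulative_alphas(betas):
    """alpha_bar_t = product of (1 - beta_s) over all steps s up to t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def forward_step(x_prev, beta, rng):
    """One Markov transition: x_t ~ N(sqrt(1 - beta) * x_prev, beta)."""
    return math.sqrt(1.0 - beta) * x_prev + math.sqrt(beta) * rng.gauss(0.0, 1.0)

def forward_chain(x0, betas, rng):
    """Apply every transition in turn and return the final x_T."""
    x = x0
    for beta in betas:
        x = forward_step(x, beta, rng)
    return x

def sample_xt_closed_form(x0, t, alpha_bars, rng):
    """Jump straight to step t: x_t ~ N(sqrt(alpha_bar_t) * x0, 1 - alpha_bar_t)."""
    a = alpha_bars[t]
    return math.sqrt(a) * x0 + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0)

betas = linear_beta_schedule()
alpha_bars = cumulative_alphas(betas)
print(f"alpha_bar_T = {alpha_bars[-1]:.2e}")  # close to zero: almost no signal is left
```

Because the cumulative product of (1 - beta) is nearly zero at the last step, the final sample is approximately standard normal whatever the starting value was. This is the sense in which the chain ends in pure noise.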

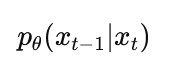

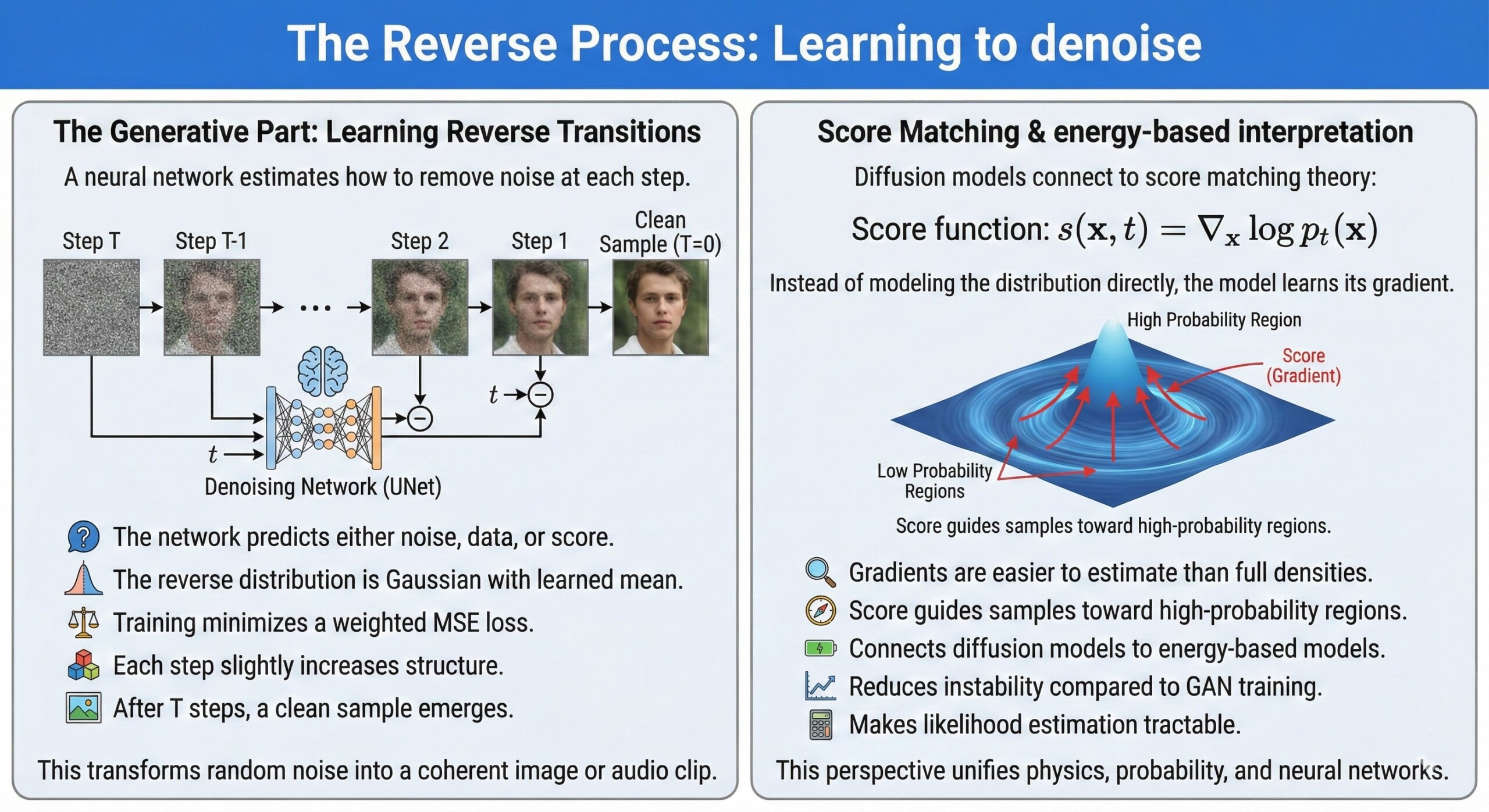

3. The Reverse Process: Learning to denoise

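The generative part lies in learning the reverse transitions:

$$p_\theta(x_{t-1} \mid x_t) = \mathcal{N}\!\left(x_{t-1};\ \mu_\theta(x_t, t),\ \Sigma_\theta(x_t, t)\right)$$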

A neural network estimates how to remove noise at each step.

Key aspects:

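- The network predicts the noise, the clean data, or the score; each of these can be converted into the others.

- The reverse distribution is Gaussian with a learned mean (the variance is either fixed or learned).

- Training minimizes a weighted mean-squared-error (MSE) loss.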

- Each step slightly increases structure.

- After T steps, a clean sample emerges.

This transforms random noise into a coherent image or audio clip.
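The reverse loop can be sketched on a toy problem where no training is needed. The sketch below assumes scalar data drawn from N(2, 0.5²), a linear schedule, and a reverse variance fixed to beta_t; all three are illustrative choices, not taken from this article. For Gaussian data the MSE-optimal noise prediction is available in closed form, so the hypothetical `oracle_eps` stands in for a trained network.

```python
import math
import random

T = 1000
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]  # assumed linear schedule
alpha_bars, running = [], 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bars.append(running)

DATA_MEAN, DATA_STD = 2.0, 0.5  # toy data distribution, chosen for illustration

def oracle_eps(x_t, t):
    """Stand-in for a trained network eps_theta(x_t, t).

    For Gaussian data, E[eps | x_t] is known exactly, so this plays the
    role of a perfectly trained noise predictor.
    """
    a = alpha_bars[t]
    var_t = a * DATA_STD ** 2 + (1.0 - a)   # variance of x_t
    mean_t = math.sqrt(a) * DATA_MEAN       # mean of x_t
    return math.sqrt(1.0 - a) * (x_t - mean_t) / var_t

def noise_prediction_loss(eps_model, x0, t, rng):
    """Single-sample squared error between the true and the predicted noise."""
    eps = rng.gauss(0.0, 1.0)
    a = alpha_bars[t]
    x_t = math.sqrt(a) * x0 + math.sqrt(1.0 - a) * eps
    return (eps - eps_model(x_t, t)) ** 2

def reverse_step(x_t, t, eps_model, rng):
    """One reverse transition: Gaussian with predicted mean and variance beta_t."""
    beta, a = betas[t], alpha_bars[t]
    eps_hat = eps_model(x_t, t)
    mean = (x_t - beta / math.sqrt(1.0 - a) * eps_hat) / math.sqrt(1.0 - beta)
    if t == 0:
        return mean  # no noise is added on the final step
    return mean + math.sqrt(beta) * rng.gauss(0.0, 1.0)

def sample(eps_model, rng):
    """Start from pure noise and apply all T reverse steps."""
    x = rng.gauss(0.0, 1.0)
    for t in reversed(range(T)):
        x = reverse_step(x, t, eps_model, rng)
    return x
```

Calling `sample(oracle_eps, random.Random(0))` repeatedly should give values scattered around the toy data mean. In a real system `oracle_eps` would be replaced by a neural network trained by averaging `noise_prediction_loss` over data, time steps, and noise draws.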

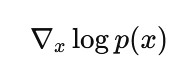

4. Score Matching & energy-based interpretation

Diffusion models connect to score matching theory. The score function of a density p(x) is the gradient of its log-density:

$$s(x) = \nabla_x \log p(x)$$

Instead of modeling the distribution directly, the model learns its gradient.

Why this works:

- Gradients are easier to estimate than full densities.

- Score guides samples toward high-probability regions.

- Connects diffusion models to energy-based models.

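- Training is a regression problem, which avoids the adversarial instability of GAN training.

- The normalizing constant of the density drops out of the gradient, so it never has to be computed.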

This perspective unifies physics, probability, and neural networks.
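To make the idea of a score concrete, here is a small sketch for a one-dimensional Gaussian target, where the score is known exactly. The target N(3, 0.5²) and the step size are arbitrary choices for illustration. The sampler is unadjusted Langevin dynamics, a classical score-based method used here only to show how following the score moves samples toward high-probability regions; it is not the diffusion sampler itself.

```python
import math
import random

MU, SIGMA = 3.0, 0.5  # toy target density N(3, 0.5^2), chosen for illustration

def log_density(x):
    """Log of the Gaussian density, including its normalizing constant."""
    return -0.5 * ((x - MU) / SIGMA) ** 2 - math.log(SIGMA * math.sqrt(2.0 * math.pi))

def score(x):
    """Derivative of log_density with respect to x; the constant drops out."""
    return -(x - MU) / SIGMA ** 2

def langevin_sample(score_fn, x_init, step_size, n_steps, rng):
    """Unadjusted Langevin dynamics: drift along the score plus injected noise."""
    x = x_init
    for _ in range(n_steps):
        noise = rng.gauss(0.0, 1.0)
        x = x + 0.5 * step_size * score_fn(x) + math.sqrt(step_size) * noise
    return x
```

Starting far from the target, for example `langevin_sample(score, -5.0, 0.01, 1000, random.Random(0))`, the chain drifts toward the mode and then fluctuates around it. Only `score` is ever evaluated; the normalizing constant inside `log_density` is never needed.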

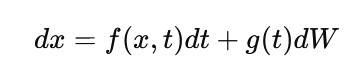

5. Stochastic Differential Equations (SDEs)

Modern diffusion models generalize discrete steps into continuous-time SDEs:

$$dx = f(x, t)\,dt + g(t)\,dW$$

Where:

- f(x,t) is the drift term.

- g(t) controls diffusion strength.

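- dW is the increment of a Wiener process (Brownian motion).

- The process evolves continuously in time rather than in discrete steps.

- The corresponding reverse-time SDE, whose drift depends on the score, defines generation.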

This continuous framework allows more flexible solvers and faster sampling.
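A forward SDE of this form can be simulated with the Euler–Maruyama method. The sketch below assumes the variance-preserving choice f(x, t) = -½ β(t) x and g(t) = √β(t), with β(t) growing linearly from 0.1 to 20 on the interval [0, 1]. These values are common in the literature but are assumptions here, and the function names are hypothetical.

```python
import math
import random

BETA_MIN, BETA_MAX = 0.1, 20.0  # assumed linear beta(t) on t in [0, 1]

def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)

def drift(x, t):
    """f(x, t) for the variance-preserving SDE."""
    return -0.5 * beta(t) * x

def diffusion(t):
    """g(t) for the variance-preserving SDE."""
    return math.sqrt(beta(t))

def euler_maruyama(x0, f, g, t0, t1, n_steps, rng):
    """Integrate dx = f(x, t) dt + g(t) dW from t0 to t1 with fixed steps."""
    dt = (t1 - t0) / n_steps
    x, t = x0, t0
    for _ in range(n_steps):
        dw = math.sqrt(dt) * rng.gauss(0.0, 1.0)  # Brownian increment ~ N(0, dt)
        x = x + f(x, t) * dt + g(t) * dw
        t += dt
    return x
```

Running `euler_maruyama(2.0, drift, diffusion, 0.0, 1.0, 500, random.Random(0))` many times with different seeds should produce end points that look approximately standard normal, the continuous-time counterpart of the discrete chain reaching pure noise.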

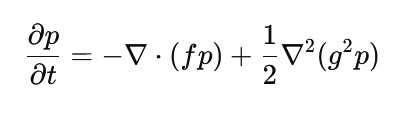

6. The Fokker-Planck Equation

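The evolution of the probability density $p_t(x)$ follows the Fokker–Planck equation:

$$\frac{\partial p_t(x)}{\partial t} = -\nabla_x \cdot \big(f(x, t)\,p_t(x)\big) + \tfrac{1}{2}\,g(t)^2\,\Delta_x\,p_t(x)$$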

Meaning:

- It describes how distributions change over time.

- Links microscopic noise to macroscopic density.

- Governs entropy increase.

- Explains convergence toward Gaussian noise.

- Provides theoretical grounding for generative flows.

This is where statistical physics directly meets generative AI.
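A short worked example shows how the equation explains convergence toward Gaussian noise. Assume, for concreteness, the one-dimensional variance-preserving SDE with drift $f(x,t) = -\tfrac{1}{2}\beta(t)\,x$ and diffusion $g(t) = \sqrt{\beta(t)}$; this specific choice is an illustration, not something fixed by the article. The Fokker–Planck equation then becomes:

$$\frac{\partial p_t}{\partial t} = \frac{\partial}{\partial x}\!\left[\tfrac{1}{2}\,\beta(t)\,x\,p_t\right] + \tfrac{1}{2}\,\beta(t)\,\frac{\partial^2 p_t}{\partial x^2}$$

If the initial density is Gaussian, $p_t$ stays Gaussian with mean $m(t)$ and variance $v(t)$, and the equation reduces to two ordinary differential equations:

$$\frac{dm}{dt} = -\tfrac{1}{2}\,\beta(t)\,m, \qquad \frac{dv}{dt} = \beta(t)\,\big(1 - v\big)$$

With $B(t) = \int_0^t \beta(s)\,ds$, the solutions are:

$$m(t) = m(0)\,e^{-\frac{1}{2}B(t)}, \qquad v(t) = 1 + \big(v(0) - 1\big)\,e^{-B(t)}$$

As $B(t)$ grows, the mean decays to 0 and the variance approaches 1, so the density tends to the standard normal regardless of the Gaussian it started from.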

7. Probability Flow ODE

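Interestingly, diffusion SDEs have an equivalent deterministic ODE, known as the probability flow ODE:

$$\frac{dx}{dt} = f(x, t) - \tfrac{1}{2}\,g(t)^2\,\nabla_x \log p_t(x)$$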

Implications:

- Sampling can be deterministic.

- Enables faster solvers.

- Enables exact likelihood computation through the change-of-variables formula.

- Connects to continuous normalizing flows.

- Improves interpretability of trajectories.

The SDE and the ODE share the same marginal densities at every time t, which is what makes this duality useful in practice.
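Deterministic sampling can be illustrated on a toy case where the score is known exactly. The sketch below assumes the variance-preserving SDE with a linear β(t) from 0.1 to 20 and scalar Gaussian data N(2, 0.5²); both are illustrative assumptions, and the exact `score` takes the place of a learned score network. It integrates the probability flow ODE backwards from t = 1 to t = 0 with plain Euler steps.

```python
import math

BETA_MIN, BETA_MAX = 0.1, 20.0   # assumed linear beta(t) on t in [0, 1]
DATA_MEAN, DATA_STD = 2.0, 0.5   # toy data distribution, chosen for illustration

def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)

def marginal(t):
    """Mean and variance of p_t when the data is Gaussian."""
    big_b = BETA_MIN * t + 0.5 * (BETA_MAX - BETA_MIN) * t * t  # integral of beta
    decay = math.exp(-big_b)
    return DATA_MEAN * math.sqrt(decay), DATA_STD ** 2 * decay + (1.0 - decay)

def score(x, t):
    """Exact score of the Gaussian marginal p_t."""
    mean, var = marginal(t)
    return -(x - mean) / var

def ode_velocity(x, t):
    """dx/dt = f(x, t) - 0.5 * g(t)^2 * score(x, t) for the VP SDE."""
    return -0.5 * beta(t) * x - 0.5 * beta(t) * score(x, t)

def solve_probability_flow(x_T, n_steps=2000):
    """Euler integration from t = 1 (noise) back to t = 0 (data)."""
    dt = 1.0 / n_steps
    x = x_T
    for i in range(n_steps):
        t = 1.0 - i * dt
        x = x - ode_velocity(x, t) * dt  # step backwards in time
    return x
```

There is no random number generator anywhere in the solver: the same starting noise always maps to the same output. In this Gaussian toy case a starting value near 0 lands near the data mean, and a starting value one standard deviation out lands roughly one data standard deviation away from it.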

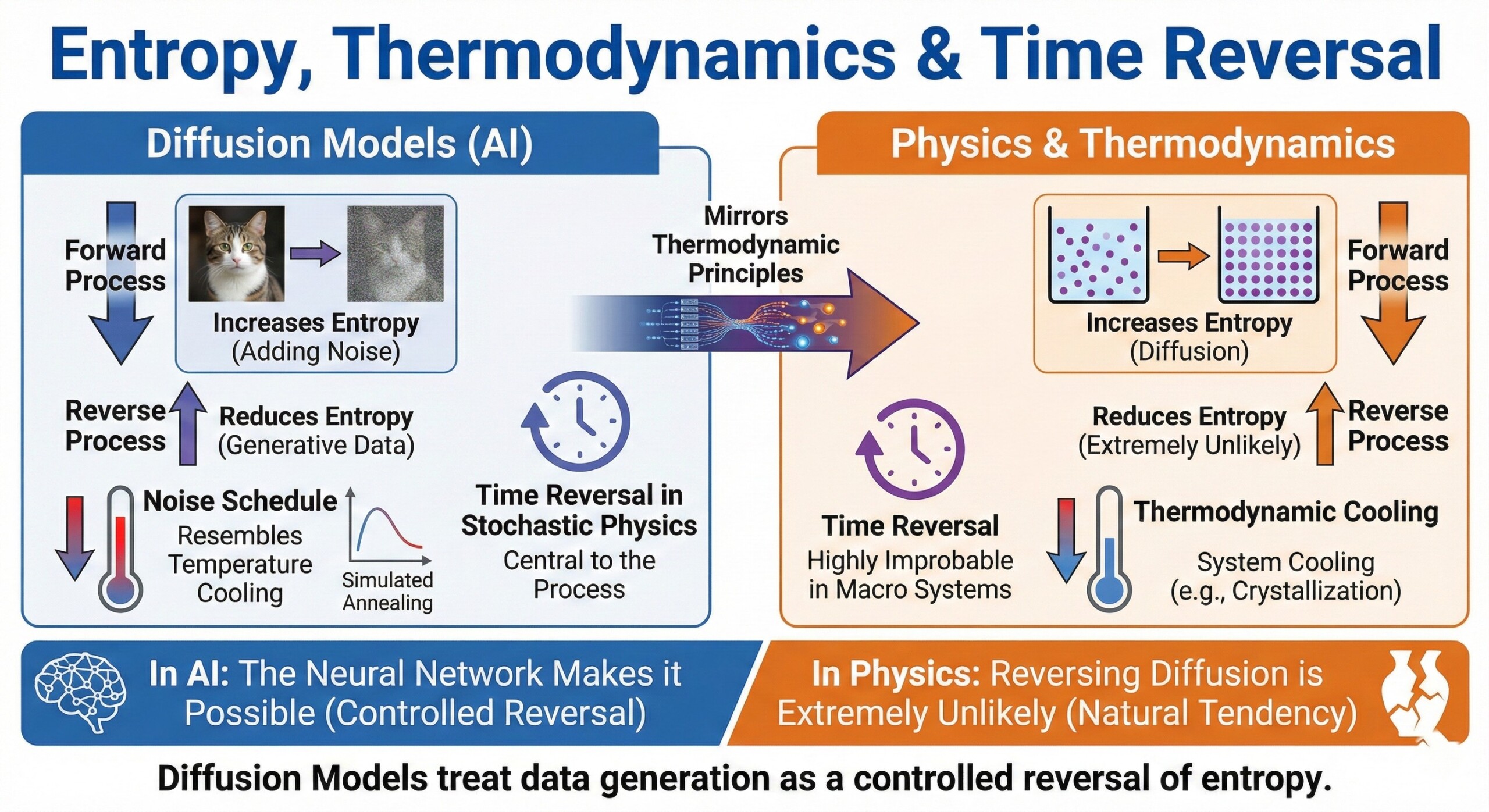

8. Entropy, Thermodynamics & Time Reversal

Diffusion models mirror thermodynamic principles:

- Forward process increases entropy.

- Reverse process reduces entropy.

- Noise schedule resembles temperature cooling.

- Similar to simulated annealing.

- Time reversal in stochastic physics is central.

In physics, spontaneously reversing diffusion is extremely unlikely. In AI, a neural network that has learned the score makes the reversal possible.

9. Extension to Images, Video, and Audio

The same mathematics generalizes across modalities:

- Images: Noise added to pixel space or latent space.

- Video: Diffusion over space-time tensors.

- Audio: Diffusion over waveform or spectrogram space.

- Text-to-X: Conditioning via cross-attention.

- Multimodal systems integrate embeddings from models like CLIP.

This universality explains why diffusion dominates generative media.

10. Practical Acceleration Techniques

While the original formulation uses on the order of 1000 sampling steps, practice improves efficiency:

- DDIM (deterministic sampling).

- DPM-Solver methods.

- Latent diffusion (operate in compressed space).

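- Classifier-free guidance (strengthens conditioning rather than reducing the step count, but is used alongside the methods above).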

- Distillation into fewer steps.

These innovations make real-time generation possible.
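Two of these techniques are compact enough to sketch. The code below shows a deterministic DDIM update (the eta = 0 variant) that visits only 50 of 1000 steps, and the linear combination used by classifier-free guidance. It assumes a linear schedule and scalar Gaussian toy data, and the hypothetical `oracle_eps` stands in for a trained noise predictor. The guidance formula follows one common convention in which a scale of 1 recovers the conditional prediction; other write-ups parameterize the scale differently.

```python
import math

T = 1000
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]  # assumed linear schedule
alpha_bars, running = [], 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bars.append(running)

DATA_MEAN, DATA_STD = 2.0, 0.5  # toy data distribution, chosen for illustration

def oracle_eps(x_t, t):
    """Stand-in for a trained noise predictor; exact for Gaussian toy data."""
    a = alpha_bars[t]
    var_t = a * DATA_STD ** 2 + (1.0 - a)
    return math.sqrt(1.0 - a) * (x_t - math.sqrt(a) * DATA_MEAN) / var_t

def guided_eps(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: extrapolate from unconditional toward conditional."""
    return eps_uncond + scale * (eps_cond - eps_uncond)

def ddim_step(x_t, t, t_prev, eps_hat):
    """Deterministic DDIM update (eta = 0) from step t to an earlier step t_prev."""
    a_t = alpha_bars[t]
    a_prev = alpha_bars[t_prev] if t_prev >= 0 else 1.0  # t_prev = -1 means clean data
    x0_pred = (x_t - math.sqrt(1.0 - a_t) * eps_hat) / math.sqrt(a_t)
    return math.sqrt(a_prev) * x0_pred + math.sqrt(1.0 - a_prev) * eps_hat

def ddim_sample(x_T, eps_model, n_steps=50):
    """Visit only n_steps of the T training steps, evenly spaced."""
    stride = T // n_steps
    timesteps = list(range(T - 1, -1, -stride))  # 999, 979, ..., 19
    x = x_T
    for i, t in enumerate(timesteps):
        t_prev = timesteps[i + 1] if i + 1 < len(timesteps) else -1
        x = ddim_step(x, t, t_prev, eps_model(x, t))
    return x
```

With the toy oracle, `ddim_sample(0.0, oracle_eps)` lands close to the data mean using 50 model evaluations instead of 1000. In a conditional pipeline, the `eps_hat` passed to `ddim_step` would typically be the output of `guided_eps` applied to two predictions from the same network, one with and one without the conditioning signal.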

Summary

Diffusion models represent a remarkable synthesis of physics and machine learning. By modeling data generation as the reversal of a stochastic diffusion process, they leverage tools from Brownian motion, thermodynamics, and stochastic differential equations. Concepts like score matching, entropy dynamics, and the Fokker-Planck equation provide not just mathematical elegance, but also practical stability and scalability.

The success of diffusion models in powering image, video, and audio generation systems reflects a broader trend: the most powerful AI systems increasingly rely on deep theoretical foundations. As generative AI advances, diffusion theory – rooted in statistical physics – may remain one of the most important bridges between mathematics, natural science, and creative machine intelligence.