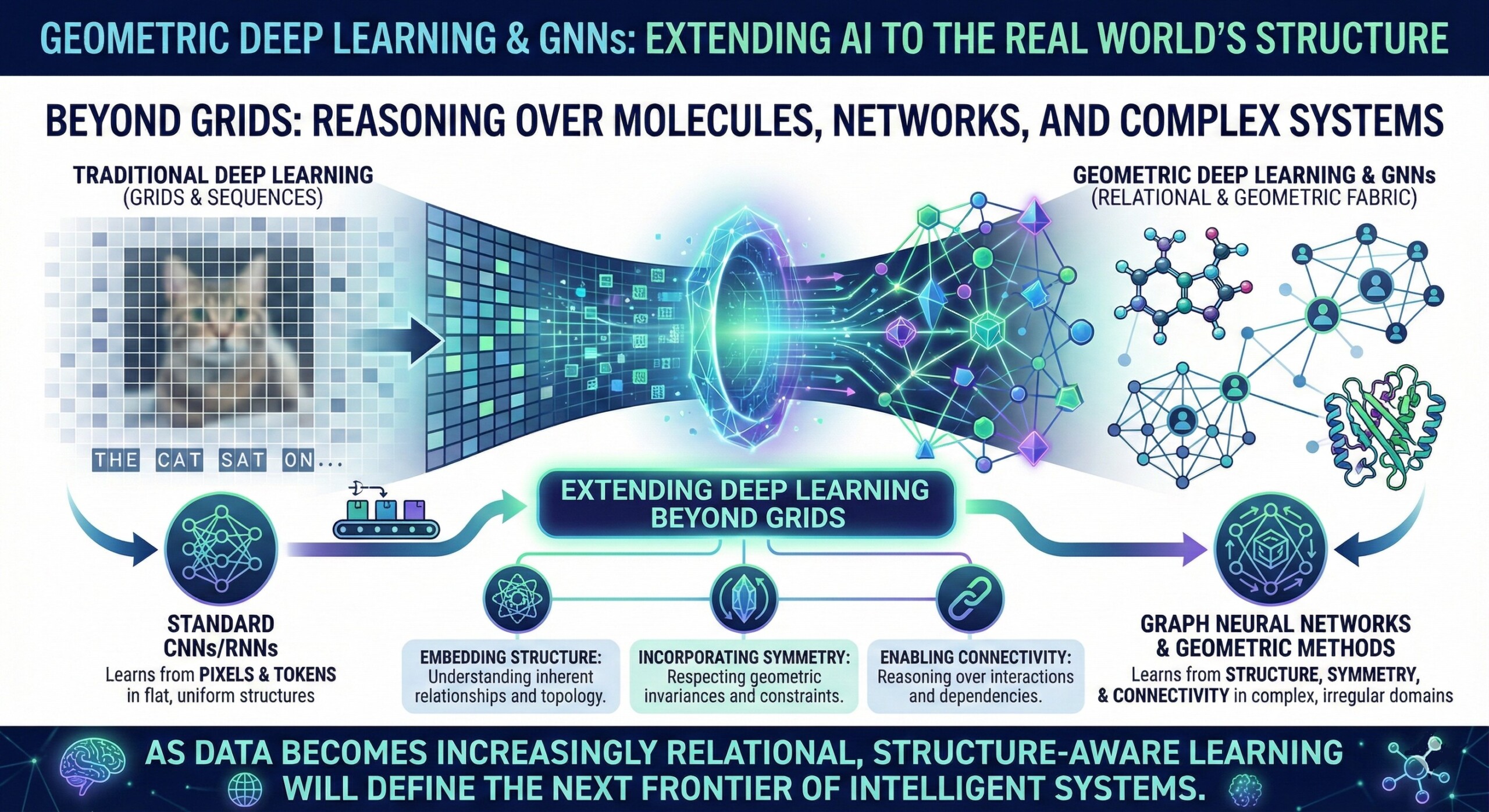

Geometric Deep Learning & Graph Neural Networks (GNNs) – Extending deep learning to non-Euclidean data

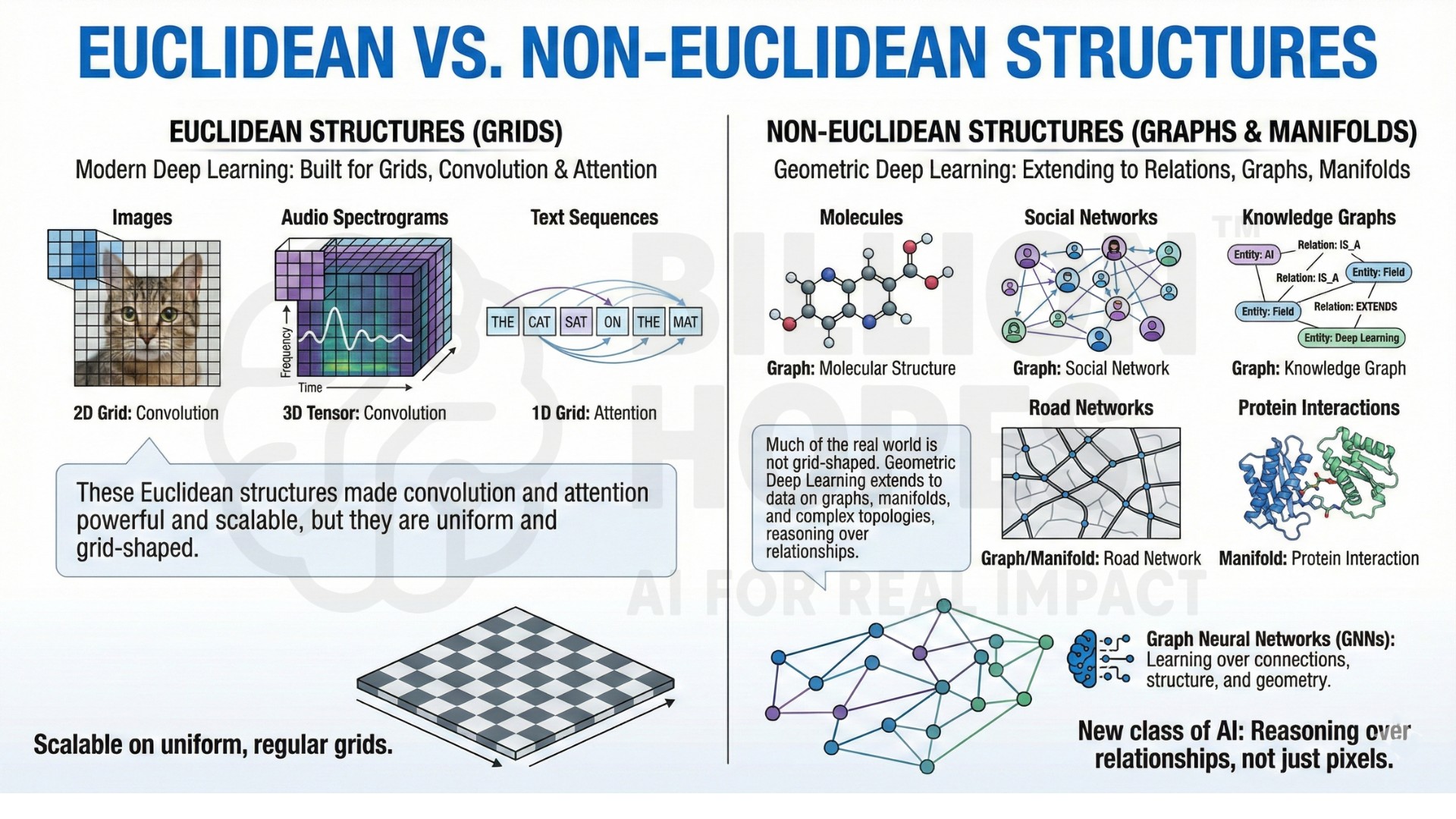

Modern deep learning models were built for grids: images, audio spectrograms, and text sequences. These Euclidean structures made convolution and attention powerful and scalable, but much of the real world is not grid-shaped.

Molecules, social networks, knowledge graphs, road networks, and protein interactions are relational structures. Geometric Deep Learning extends deep learning to these non-Euclidean domains, where data lives on graphs, manifolds, and complex topologies.

This shift creates a new class of AI systems that reason over relationships rather than pixels alone. Graph Neural Networks (GNNs) sit at the centre of this movement, enabling learning over connections, structure, and geometry rather than just features.

1. Why non-Euclidean data changes how AI learns

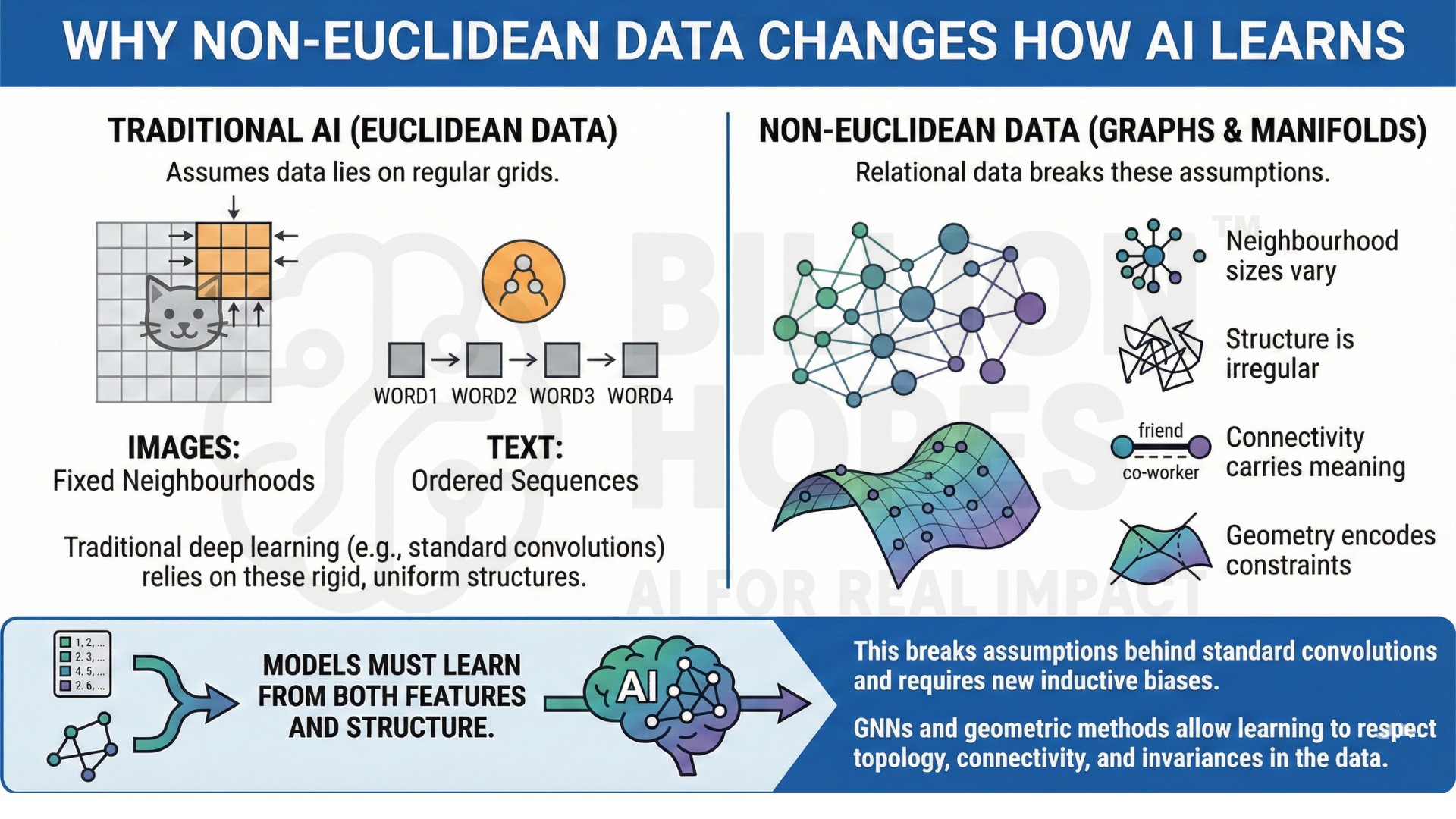

Traditional deep learning assumes data lies on regular grids. Images have fixed neighbourhoods. Text has ordered sequences. Graphs and manifolds do not.

In relational data:

• Neighbourhood sizes vary

• Structure is irregular

• Connectivity carries meaning

• Geometry encodes constraints

Models must learn from both features and structure. This breaks assumptions behind standard convolutions and requires new inductive biases. GNNs and geometric methods allow learning to respect topology, connectivity, and invariances in the data.
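To make the irregularity concrete, here is a minimal sketch of a small graph stored as an adjacency list. The edge list and the helper name `build_adjacency` are made up for illustration; the point is only that each node ends up with a different number of neighbours, unlike a pixel in an image.

```python
# Toy undirected graph given as an edge list (illustrative values only).
edges = [(0, 1), (0, 2), (0, 3), (1, 2), (3, 4)]
num_nodes = 5

def build_adjacency(num_nodes, edges):
    """Return a list of neighbour lists, one per node."""
    neighbours = [[] for _ in range(num_nodes)]
    for u, v in edges:
        neighbours[u].append(v)
        neighbours[v].append(u)  # undirected: store both directions
    return neighbours

adjacency = build_adjacency(num_nodes, edges)
degrees = [len(nbrs) for nbrs in adjacency]

for node, nbrs in enumerate(adjacency):
    print(f"node {node}: neighbours={nbrs}")
print("degrees:", degrees)  # degrees: [3, 2, 2, 2, 1]
```

A fixed-size convolution kernel has no natural way to slide over neighbourhoods of size 3, 2, and 1, which is why graph models aggregate over whatever neighbours a node happens to have.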

2. From grid-based deep learning to geometric learning

Early deep learning successes came from CNNs and Transformers operating on Euclidean spaces. As researchers moved to molecules, networks, and physical systems, grid assumptions failed.

Geometric Deep Learning introduces:

• Message passing over graphs

• Equivariance to symmetries and transformations

• Learning on manifolds and meshes

• Structure-aware aggregation of information

This transition marks a shift from pattern recognition on pixels to reasoning over relationships and geometry.

3. Core building blocks of Graph Neural Networks

GNNs generalize neural networks to graphs through a few core components:

• Nodes with feature representations

• Edges encoding relationships or interactions

• Message passing between connected nodes

• Aggregation functions over neighbourhoods

• Update rules that combine structure and features

These components allow models to propagate information across a network, enabling relational reasoning over multiple hops.
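The components listed above can be sketched as one round of message passing in plain Python. This is a simplified illustration, not any particular library's layer: the function name `message_passing_layer`, the sum aggregator, the ReLU update, and the hand-picked weight matrices are all assumptions chosen to keep the example readable.

```python
def matvec(weight, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weight]

def relu(vec):
    return [max(0.0, x) for x in vec]

def message_passing_layer(features, neighbours, w_self, w_nbr):
    """One round of sum-aggregation message passing.

    features:   list of per-node feature vectors
    neighbours: list of neighbour-index lists, one per node
    """
    dim = len(features[0])
    updated = []
    for node, nbrs in enumerate(neighbours):
        # Aggregate: sum the feature vectors of connected nodes.
        aggregated = [0.0] * dim
        for nbr in nbrs:
            for k in range(dim):
                aggregated[k] += features[nbr][k]
        # Update: combine the node's own state with the aggregated messages.
        own = matvec(w_self, features[node])
        incoming = matvec(w_nbr, aggregated)
        updated.append(relu([a + b for a, b in zip(own, incoming)]))
    return updated

# Path graph 0 - 1 - 2 with 2-dimensional node features (toy values).
neighbours = [[1], [0, 2], [1]]
features = [[1.0, 0.0], [0.0, 1.0], [2.0, 0.0]]
w_self = [[1.0, 0.0], [0.0, 1.0]]  # identity, chosen for readability
w_nbr = [[0.5, 0.0], [0.0, 0.5]]   # halve incoming messages

h1 = message_passing_layer(features, neighbours, w_self, w_nbr)
h2 = message_passing_layer(h1, neighbours, w_self, w_nbr)
print("after 1 round:", h1)
print("after 2 rounds:", h2)
```

After one round, node 0 only knows about node 1. After the second round, its features also reflect node 2, which is two hops away. Stacking layers is what lets information travel across multiple hops.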

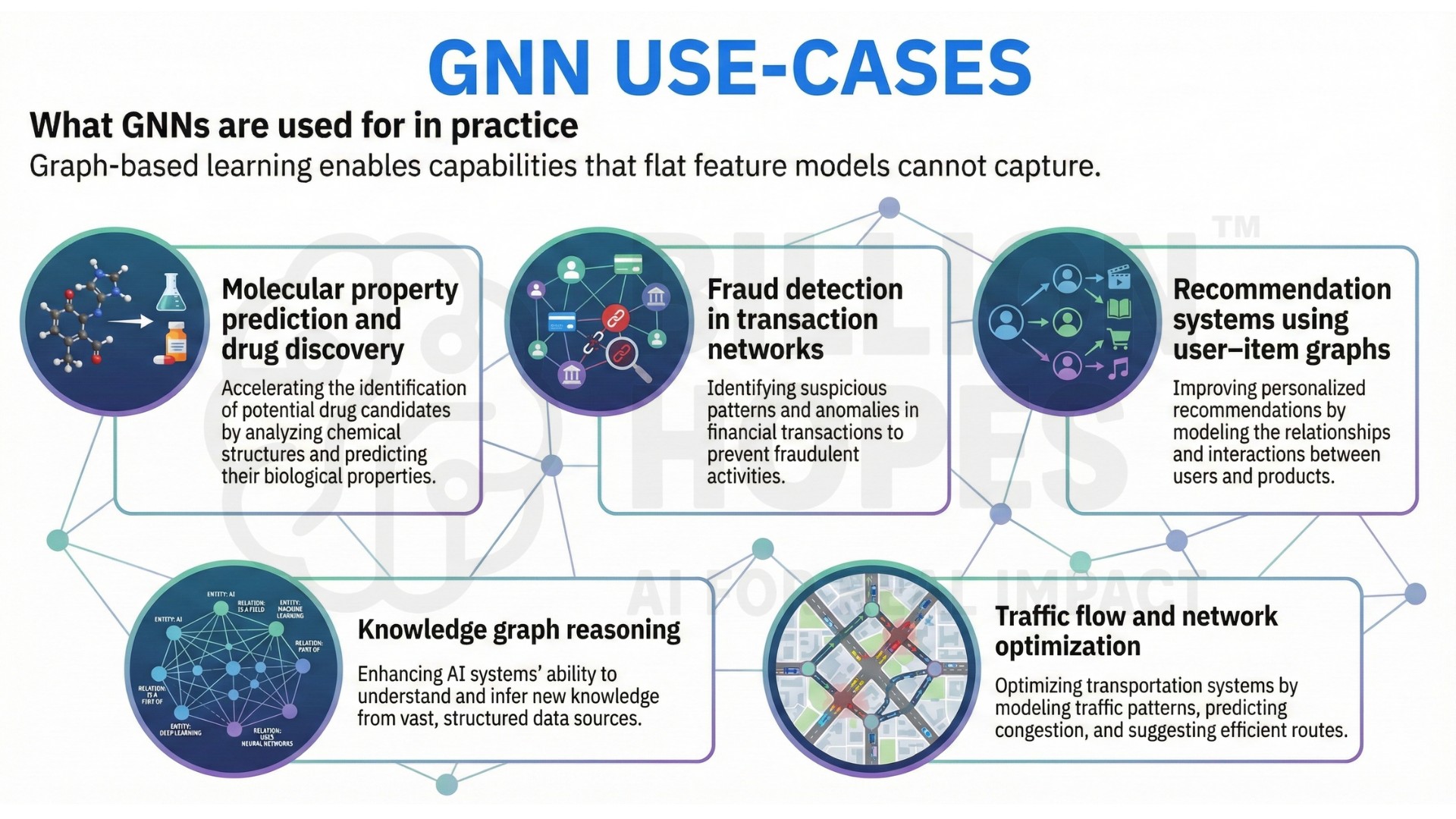

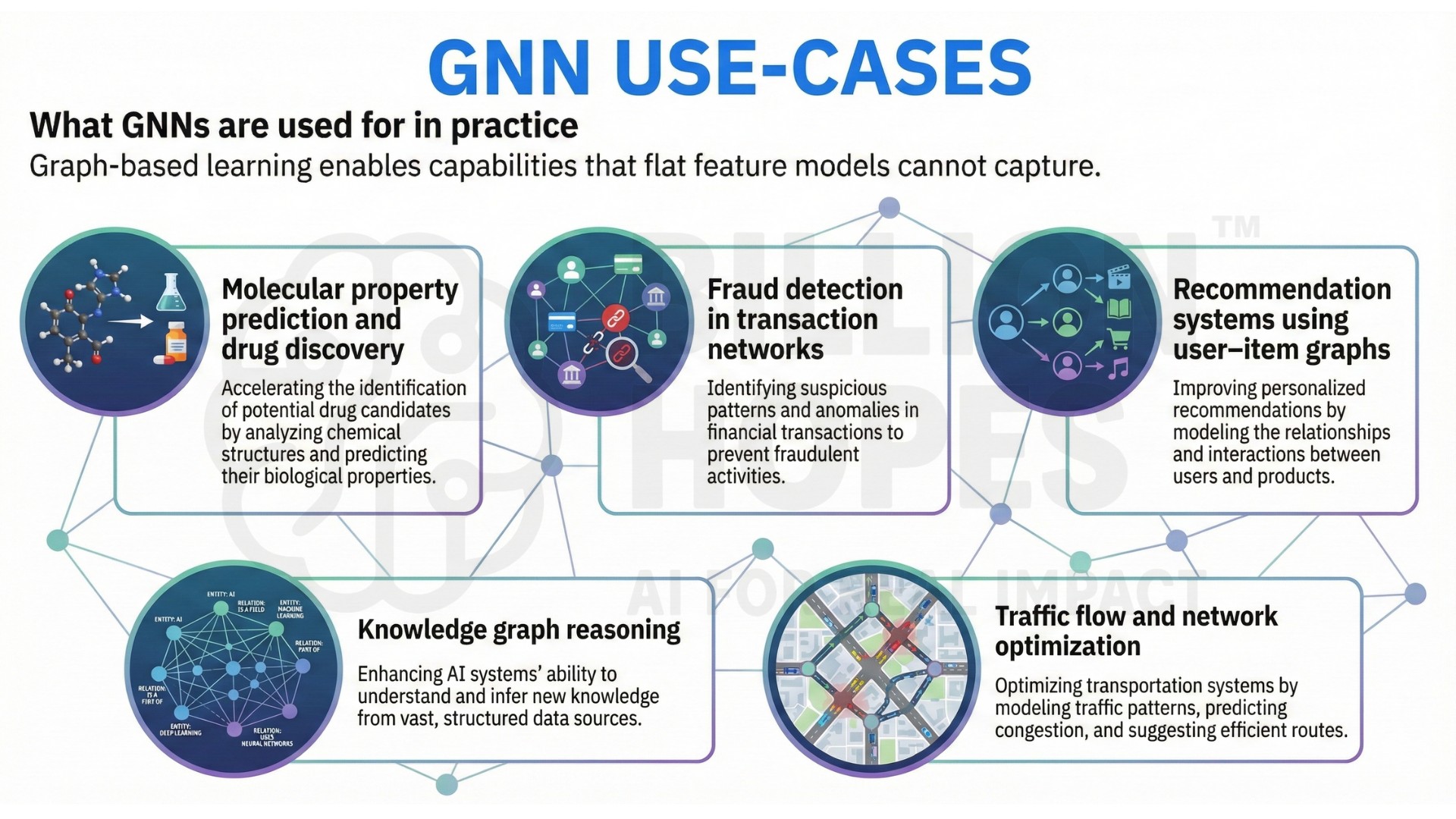

4. What GNNs are used for in practice

Graph-based learning captures relational signal that flat feature models cannot.

Examples include:

• Molecular property prediction and drug discovery

• Fraud detection in transaction networks

• Recommendation systems using user–item graphs

• Knowledge graph reasoning

• Traffic flow and network optimization

Careers here focus on:

• Modeling relational structure correctly

• Designing graph schemas and features

• Interpreting learned representations

• Handling scale and sparsity in large graphs

GNNs enable learning from connections, not just attributes.

5. Inductive biases, symmetry, and equivariance

Geometric Deep Learning is driven by the idea of encoding the right symmetries into models.

Key concepts include:

• Invariance of graph-level outputs, and equivariance of node-level outputs, to permutations of nodes

• Equivariance to rotations and translations in physical systems

• Producing identical outputs for isomorphic graphs

• Structure-aware pooling and readout functions

These inductive biases improve generalization and sample efficiency by aligning models with the geometry of the data.
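Permutation symmetry is the easiest of these ideas to check by hand. The sketch below relabels the nodes of a toy graph and confirms two things: a sum readout over all nodes does not change (invariance), and a neighbour-sum layer produces the same outputs in the relabelled order (equivariance). The function names and the toy data are assumptions made for this illustration.

```python
def sum_readout(features):
    """Graph-level readout: element-wise sum over all node feature vectors."""
    dim = len(features[0])
    return [sum(f[k] for f in features) for k in range(dim)]

def neighbour_sum(features, edges):
    """Node-level layer: each node receives the sum of its neighbours' features."""
    dim = len(features[0])
    out = [[0.0] * dim for _ in features]
    for u, v in edges:  # undirected edges
        for k in range(dim):
            out[u][k] += features[v][k]
            out[v][k] += features[u][k]
    return out

features = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
edges = [(0, 1), (1, 2)]

# perm[new_index] = old_index: relabel the nodes without changing the graph.
perm = [2, 0, 1]
old_to_new = {old: new for new, old in enumerate(perm)}
features_p = [features[old] for old in perm]
edges_p = [(old_to_new[u], old_to_new[v]) for u, v in edges]

# Invariance: the graph-level readout ignores node order.
is_invariant = sum_readout(features_p) == sum_readout(features)

# Equivariance: node-level outputs are permuted the same way as the inputs.
out = neighbour_sum(features, edges)
out_p = neighbour_sum(features_p, edges_p)
is_equivariant = out_p == [out[old] for old in perm]

print("readout invariant:", is_invariant)
print("layer equivariant:", is_equivariant)
```

Sum, mean, and max all share this property because they ignore the order of their inputs. An aggregator that depended on node order, such as concatenating neighbour features, would fail the same check.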

6. Limitations and challenges of GNNs

Despite their power, Graph Neural Networks face structural and practical constraints that limit their reliability in real-world systems. As message passing layers deepen, node representations tend to become increasingly similar, a phenomenon known as oversmoothing, which causes the model to lose the ability to distinguish between different nodes and structures. This limits how deep and expressive GNN architectures can be in practice.
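Oversmoothing can be reproduced in a few lines. The sketch below is a deliberately stripped-down stand-in for a GNN: it assumes mean aggregation with a self-loop and no learned weights or nonlinearity, which a real architecture would have. It tracks how far apart the node values are after each layer.

```python
def mean_aggregate(values, neighbours):
    """Replace each node's value by the mean over itself and its neighbours."""
    new_values = []
    for node, nbrs in enumerate(neighbours):
        group = [values[node]] + [values[n] for n in nbrs]
        new_values.append(sum(group) / len(group))
    return new_values

def spread(values):
    """Gap between the largest and smallest node value."""
    return max(values) - min(values)

# Path graph 0 - 1 - 2 - 3 - 4 with one scalar feature per node (toy values).
neighbours = [[1], [0, 2], [1, 3], [2, 4], [3]]
values = [0.0, 0.0, 0.0, 0.0, 1.0]

spreads = [spread(values)]
for layer in range(30):
    values = mean_aggregate(values, neighbours)
    spreads.append(spread(values))

for depth in (0, 1, 5, 10, 30):
    print(f"after {depth:2d} layers: spread = {spreads[depth]:.4f}")
```

The spread starts at 1.0 and shrinks toward zero as layers are added, so the nodes become nearly indistinguishable. Learned weights and nonlinearities change the details, but this averaging effect is the core of the problem.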

Key structural and practical constraints:

• Oversmoothing in deep message-passing networks

• Scalability limits on large graphs

• Sensitivity to graph construction choices

• Difficulty handling dynamic or evolving graphs

• Interpretability challenges in relational embeddings

Scalability remains a major bottleneck when working with large graphs containing millions or billions of nodes and edges. Full-graph message passing is computationally expensive and memory-intensive, often requiring sampling, partitioning, or approximation techniques that can introduce bias or information loss. The larger the graph, the more accuracy has to be traded for tractable compute and memory.
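As a rough illustration of the sampling idea, the sketch below caps the number of neighbours a node aggregates over. The helper names, the `fanout` parameter, and the star-graph data are assumptions for this example and are not taken from a specific library.

```python
import random

def sample_neighbours(neighbours, node, fanout, rng):
    """Return at most `fanout` neighbours of `node`, sampled without replacement."""
    nbrs = neighbours[node]
    if len(nbrs) <= fanout:
        return list(nbrs)
    return rng.sample(nbrs, fanout)

def sampled_mean(values, neighbours, node, fanout, rng):
    """Estimate the neighbourhood mean from a sampled subset (node must have neighbours)."""
    chosen = sample_neighbours(neighbours, node, fanout, rng)
    return sum(values[n] for n in chosen) / len(chosen)

# Star graph: hub node 0 connected to 1000 leaves (toy data).
num_leaves = 1000
neighbours = [list(range(1, num_leaves + 1))] + [[0] for _ in range(num_leaves)]
values = [0.0] + [float(i % 10) for i in range(1, num_leaves + 1)]

rng = random.Random(0)  # fixed seed so the sketch is repeatable
exact = sum(values[n] for n in neighbours[0]) / len(neighbours[0])
approx = sampled_mean(values, neighbours, node=0, fanout=25, rng=rng)

print(f"exact mean over {num_leaves} neighbours: {exact:.3f}")
print(f"sampled mean over 25 neighbours: {approx:.3f}")
```

The sampled estimate touches 25 neighbours instead of 1000, which is where the savings come from, but it generally differs from the exact mean. That gap is the information loss the paragraph above describes.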

Interpretability remains a challenge because GNNs learn distributed relational embeddings that are difficult to map back to simple, human-understandable explanations. Understanding why a model flagged a node as risky, predicted a molecular property, or linked two entities often requires specialized explainability methods that are still immature.

Designing effective GNNs requires careful control of depth, neighbourhood sampling, and graph representations.

7. Skills that define geometric deep learning practitioners

Practitioners working with GNNs need hybrid capabilities:

• Graph theory and network science fundamentals

• Deep learning architectures and training dynamics

• Feature engineering for nodes, edges, and graphs

• Understanding symmetry, invariance, and equivariance

• Scaling training and inference on large relational datasets

The field sits at the intersection of machine learning, mathematics, and domain structure.

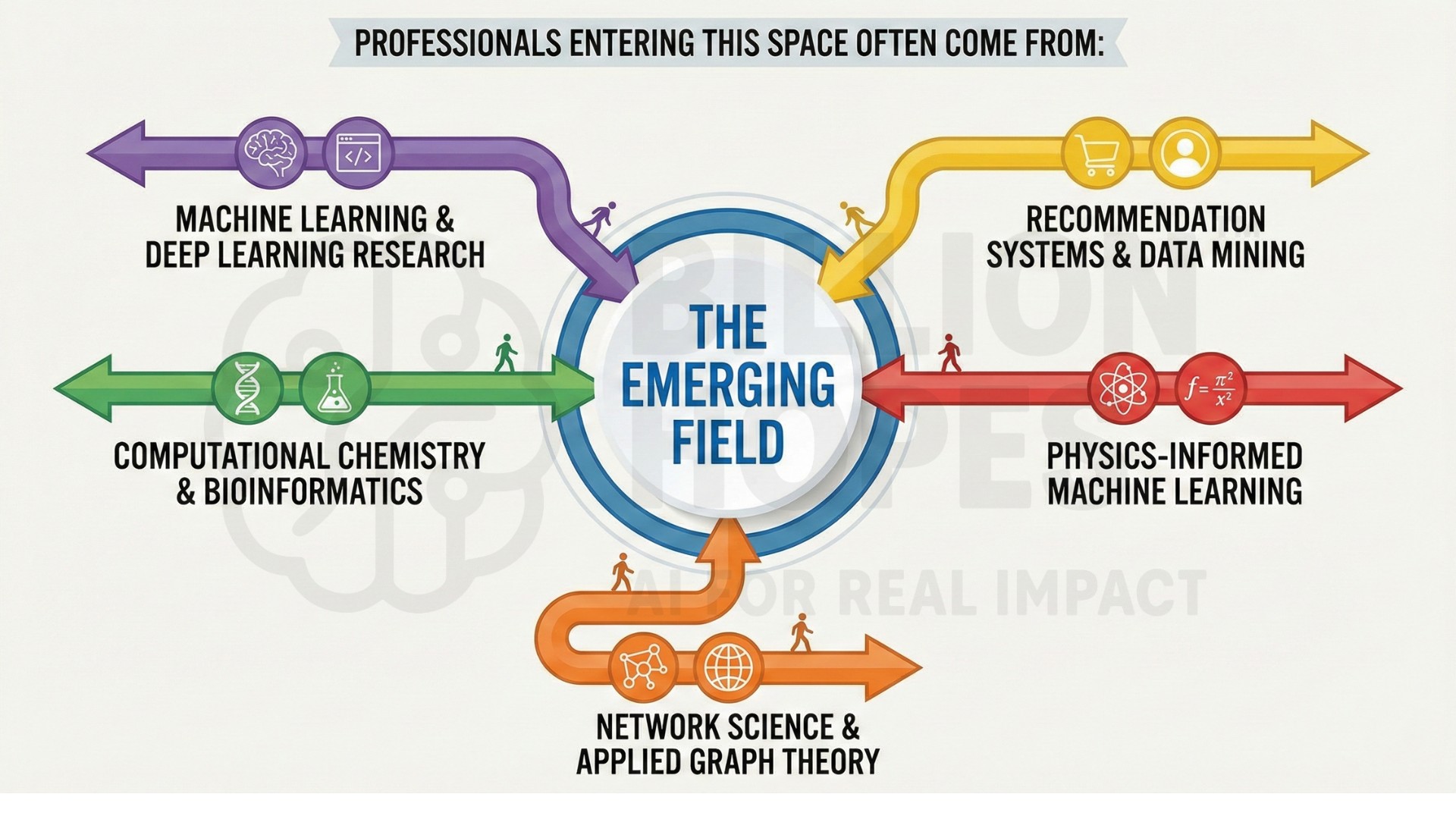

8. Backgrounds and entry paths into GNN work

Professionals entering geometric deep learning and Graph Neural Network work typically come from domains where relationships, structure, and interactions matter as much as raw features. Many start in machine learning and deep learning research, then encounter limits of CNNs or Transformers when problems involve networks, interactions, or structured dependencies rather than grids or sequences.

Computational chemistry and bioinformatics are major entry points because molecules, proteins, and biological pathways are naturally graph-structured. Researchers in these fields often turn to GNNs when traditional feature-based models fail to capture molecular interactions, binding structures, or relational dependencies between biological entities.

Network science and applied graph theory provide strong conceptual foundations for GNN work. Practitioners with backgrounds in social network analysis, communication networks, or infrastructure modeling often move into GNNs when they seek learning systems that can operate directly on network structure rather than engineered summaries of connectivity.

Across these backgrounds, a common trigger for adopting GNNs is the failure of flat models to capture relational structure. When performance plateaus despite more data or larger networks, practitioners discover that the missing signal lies not in features alone, but in how entities are connected, constrained, and embedded in structure.

Professionals entering this space often come from:

• Machine learning and deep learning research

• Computational chemistry and bioinformatics

• Network science and applied graph theory

• Recommendation systems and data mining

• Physics-informed machine learning

Many discover GNNs when flat models fail to capture relational structure in their domain.

9. Tensions and trade-offs in geometric learning

GNN-based systems involve persistent trade-offs:

• Expressivity versus scalability

• Depth versus oversmoothing

• Structural fidelity versus computational cost

• Generalization versus graph-specific tuning

• Accuracy versus interpretability

These trade-offs shape model design and deployment decisions in real-world systems.

10. The future: Structure-aware AI systems

The future of AI will move beyond flat representations toward structure-aware intelligence. Geometric Deep Learning will play a central role in modeling physical systems, biological interactions, and relational knowledge.

As AI systems expand into science, infrastructure, and complex networks, practitioners who can align learning algorithms with geometry and structure will determine whether models capture real-world constraints – or merely learn shallow correlations.

Summary

Geometric Deep Learning and Graph Neural Networks extend deep learning beyond grids into the relational and geometric fabric of the real world. By embedding structure, symmetry, and connectivity into learning systems, these approaches enable AI to reason over molecules, networks, and complex systems rather than just pixels and tokens. As data becomes increasingly relational, structure-aware learning will define the next frontier of intelligent systems.