In-Context Learning & Emergent Behaviour

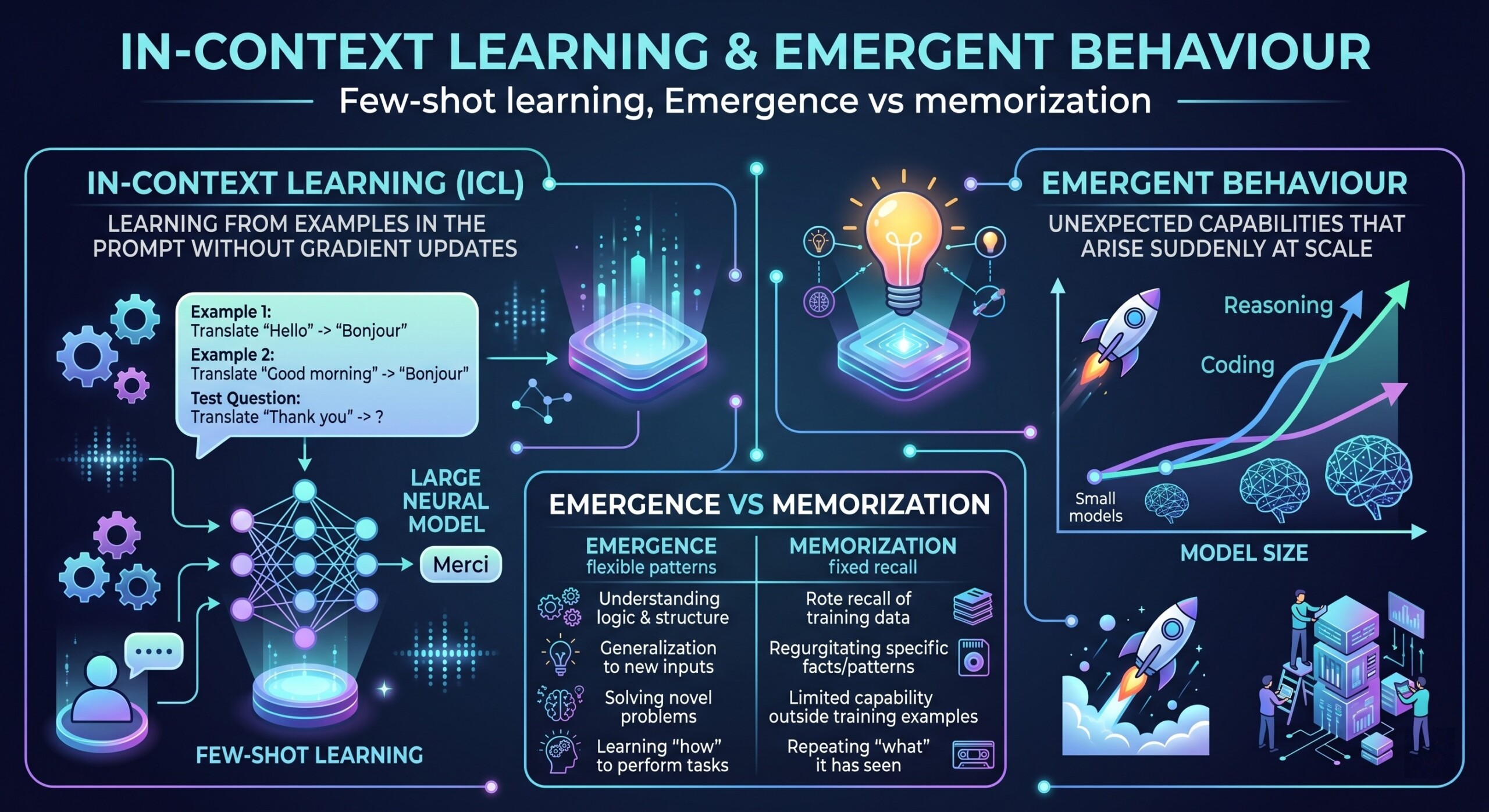

Few-shot learning, Emergence vs memorization

Introduction

Modern AI systems, especially large language models (LLMs), have demonstrated abilities that surprised even their creators. Unlike traditional machine learning systems, which require explicit training for each task, these models can perform new tasks simply by being shown a few examples within a prompt. This capability is known as in-context learning. Alongside it, another phenomenon has been observed: models begin to exhibit complex behaviours that were not explicitly programmed or anticipated, often referred to as emergent behaviour.

Together, these ideas challenge classical notions of learning, generalization, and intelligence. They raise fundamental questions: Are these models truly “learning” in real time? Or are they leveraging patterns memorized during training? Where is the boundary between genuine reasoning and sophisticated pattern matching? This article explores these themes in depth, focusing on few-shot learning, the mechanics of in-context learning, and the debate between emergence and memorization.

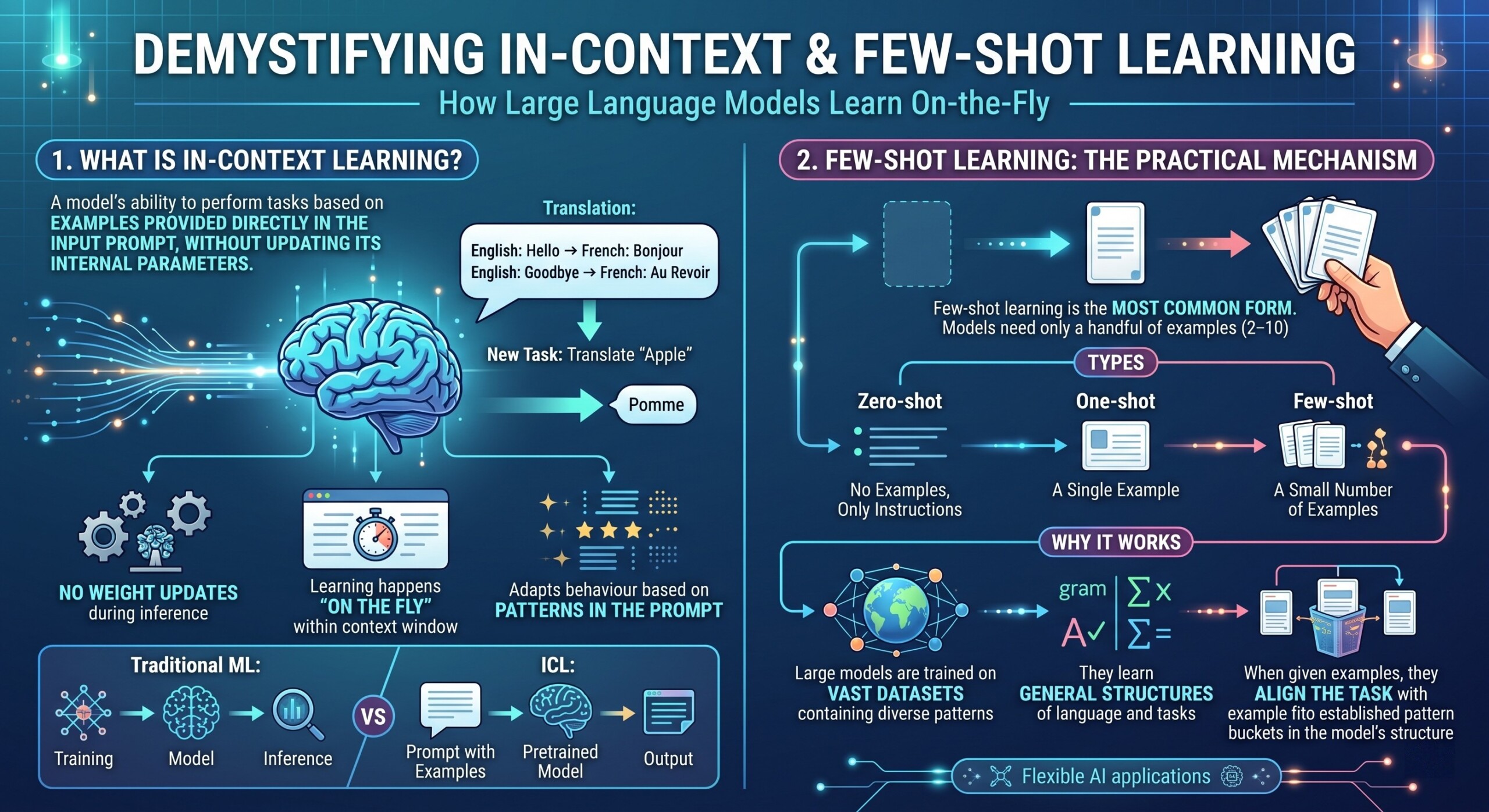

1. What is In-Context Learning?

In-context learning refers to a model’s ability to perform tasks based on examples provided directly in the input prompt, without updating its internal parameters.

For instance, if you give a model a few examples of translating English to French, it can continue translating new sentences correctly – even if it was never explicitly fine-tuned for that exact task.

Key characteristics:

- No weight updates occur during inference

- Learning happens “on the fly” within the context window

- The model adapts behaviour based on patterns in the prompt

This differs from the traditional machine learning workflow, in which adapting a model to a new task requires a separate training phase that updates its weights.

2. Few-Shot Learning: The Practical Mechanism

Few-shot learning is the most common form of in-context learning. Instead of thousands of labeled examples, the model needs only a handful (often 2–10 examples) to understand the task.

Types:

- Zero-shot: No examples, only instructions

- One-shot: A single example

- Few-shot: A small number of examples
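The three types differ only in how many demonstrations are placed in the prompt. The sketch below makes that concrete for the English-to-French example. The function name `build_prompt`, the `English:`/`French:` labels, and the sample sentences are illustrative assumptions, not a prescribed format, and the resulting string would still need to be sent to whichever model you use.

```python
from typing import List, Tuple


def build_prompt(instruction: str,
                 examples: List[Tuple[str, str]],
                 query: str,
                 k: int) -> str:
    """Assemble a prompt containing the first k demonstrations (k=0 is zero-shot)."""
    lines = [instruction, ""]
    for source, target in examples[:k]:
        lines.append(f"English: {source}")
        lines.append(f"French: {target}")
        lines.append("")
    # The final block is left open so the model completes the answer.
    lines.append(f"English: {query}")
    lines.append("French:")
    return "\n".join(lines)


# Hypothetical demonstrations, chosen only for illustration.
EXAMPLES = [
    ("Good morning", "Bonjour"),
    ("Thank you", "Merci"),
    ("See you tomorrow", "À demain"),
]

INSTRUCTION = "Translate English to French."
zero_shot = build_prompt(INSTRUCTION, EXAMPLES, "Good night", k=0)
one_shot = build_prompt(INSTRUCTION, EXAMPLES, "Good night", k=1)
few_shot = build_prompt(INSTRUCTION, EXAMPLES, "Good night", k=3)
print(few_shot)
```

Nothing about the model changes between the three calls; only the text it conditions on does.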

Why it works:

- Large models are trained on vast datasets containing diverse patterns

- They learn general structures of language and tasks

- When given examples, they align the task with known patterns

Few-shot learning is what makes modern AI systems flexible and usable in real-world applications without retraining.

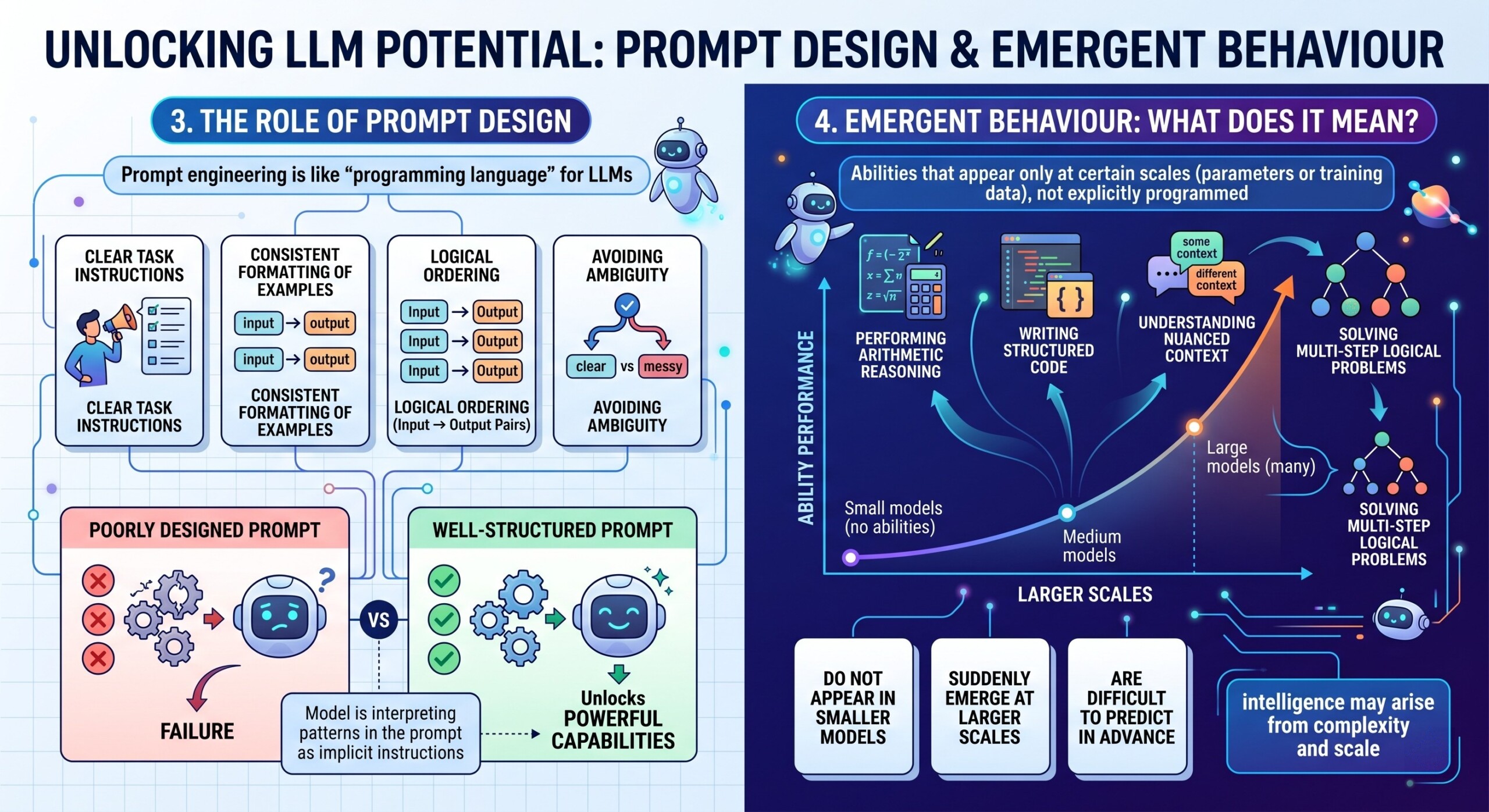

3. The Role of Prompt Design

In-context learning depends heavily on how the input is structured. This brings us to prompt engineering, which acts as the “programming language” for LLMs.

Important elements:

- Clear task instructions

- Consistent formatting of examples

- Logical ordering (input → output pairs)

- Avoiding ambiguity

A poorly designed prompt can completely fail, while a well-structured one can unlock powerful capabilities.

This reveals an important insight: the model is not just responding – it is interpreting patterns in the prompt as implicit instructions.
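Because the model treats the demonstrations as implicit instructions, it can help to check them before they go into a prompt. The following is a minimal sketch of such a check, assuming demonstrations are stored as `(input, output)` string pairs. The function `check_examples` and the three rules it applies (empty fields, stray whitespace, contradictory outputs for the same input) are hypothetical choices for illustration, not an established standard.

```python
from typing import Dict, List, Tuple


def check_examples(examples: List[Tuple[str, str]]) -> List[str]:
    """Return human-readable problems found in a list of demonstrations."""
    problems: List[str] = []
    first_seen: Dict[str, Tuple[int, str]] = {}  # normalised input -> (index, output)
    for i, (inp, out) in enumerate(examples):
        if not inp.strip() or not out.strip():
            problems.append(f"example {i}: empty input or output")
        if inp != inp.strip() or out != out.strip():
            problems.append(f"example {i}: leading or trailing whitespace")
        key = inp.strip().lower()
        if key in first_seen:
            j, earlier = first_seen[key]
            # The same input mapped to two different outputs is ambiguous.
            if earlier != out.strip():
                problems.append(f"example {i}: contradicts example {j}")
        else:
            first_seen[key] = (i, out.strip())
    return problems


demos = [
    ("great film", "positive"),
    ("dull plot", "negative"),
    ("Great film ", "negative"),  # inconsistent formatting and a conflicting label
]
issues = check_examples(demos)
print(issues)
```

A check like this only catches mechanical inconsistencies; whether the instructions themselves are clear still needs a human read.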

4. Emergent Behaviour: What Does It Mean?

Emergent behaviour refers to abilities that were not explicitly programmed and that appear only once models reach a certain scale (in terms of parameters or training data).

Examples include:

- Performing arithmetic reasoning

- Writing structured code

- Understanding nuanced context

- Solving multi-step logical problems

These abilities:

- Are largely absent in smaller models

- Appear relatively abruptly at larger scales

- Are difficult to predict in advance

This challenges the idea that intelligence must be explicitly engineered – it may instead arise from complexity and scale.

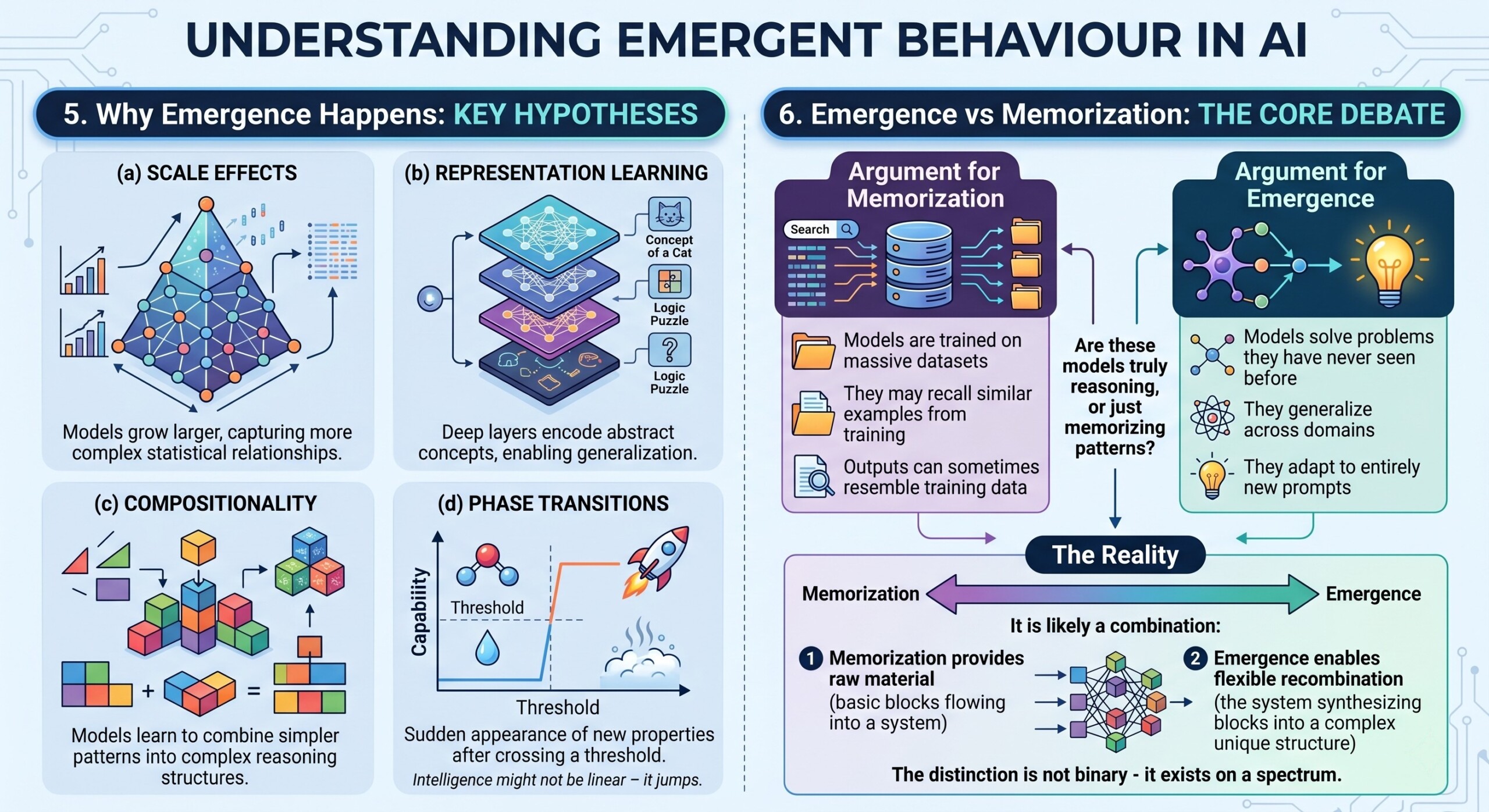

5. Why Emergence Happens

Several hypotheses have been proposed to explain emergent behaviour:

a. Scale Effects

As models grow larger, they capture more complex statistical relationships.

b. Representation Learning

Deep layers encode abstract concepts, enabling generalization beyond training data.

c. Compositionality

Models learn to combine simpler patterns into more complex reasoning structures.

d. Phase Transitions

Some researchers compare emergence to physical systems, where new properties appear suddenly after crossing a threshold.

Emergence suggests that intelligence might not be linear – it can jump in capability once certain conditions are met.
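A small piece of arithmetic shows how a smooth underlying improvement can still look like a jump. Suppose a task counts as solved only if every one of its steps is correct, and each step succeeds independently with the same probability. This is a toy model, and the independence assumption, the function name `exact_match_rate`, and the choice of 20 steps are all illustrative; it is not a claim about how any particular model behaves, nor is it one of the hypotheses listed above.

```python
def exact_match_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that all `steps` independent steps are correct."""
    return per_step_accuracy ** steps


STEPS = 20  # hypothetical length of a multi-step task
for p in (0.5, 0.7, 0.8, 0.9, 0.95, 0.99):
    rate = exact_match_rate(p, STEPS)
    print(f"per-step accuracy {p:.2f} -> all {STEPS} steps correct {rate:.4f}")
```

Under these assumptions, a per-step accuracy of 0.9 solves the whole task only about 12% of the time, while 0.99 solves it roughly 82% of the time, so a gradual gain per step appears as a sharp rise on the all-or-nothing measure.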

6. Emergence vs Memorization: The Core Debate

One of the most important questions in AI today is:

Are these models truly reasoning, or just memorizing patterns?

Argument for Memorization

- Models are trained on massive datasets

- They may recall similar examples from training

- Outputs can sometimes resemble training data

Argument for Emergence

- Models solve problems they have never seen before

- They generalize across domains

- They adapt to entirely new prompts

The Reality

It is likely a combination:

- Memorization provides raw material

- Emergence enables flexible recombination

The distinction is not binary – it exists on a spectrum.
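One rough way to place an output on that spectrum is to measure how much of it appears verbatim in a reference text. The sketch below computes word-level n-gram overlap. The function names, the choice of trigrams, and the sample strings are assumptions for illustration. In practice the training data of a large model is rarely available for inspection, so `reference` stands in for whatever corpus can actually be checked, and a low score does not prove that the output was not paraphrased from memory.

```python
from typing import Set, Tuple


def ngrams(text: str, n: int) -> Set[Tuple[str, ...]]:
    """Set of word-level n-grams in the text (lowercased, whitespace-split)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_fraction(output: str, reference: str, n: int = 3) -> float:
    """Fraction of the output's n-grams that also occur in the reference."""
    out_grams = ngrams(output, n)
    if not out_grams:
        return 0.0
    return len(out_grams & ngrams(reference, n)) / len(out_grams)


reference = "the quick brown fox jumps over the lazy dog"
copied = "the quick brown fox jumps"
novel = "a slow green turtle walks under the bridge"

print(overlap_fraction(copied, reference))
print(overlap_fraction(novel, reference))
```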

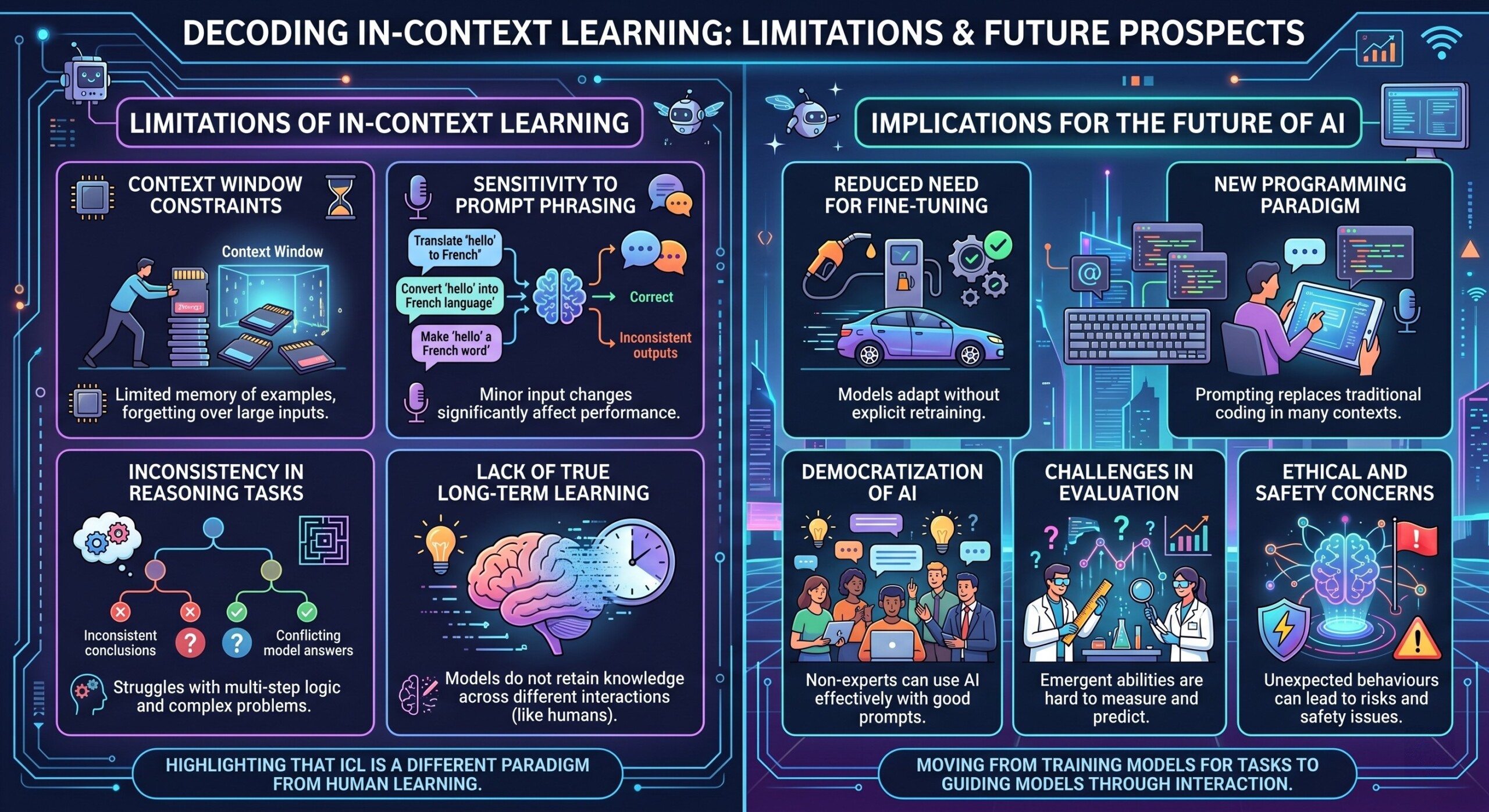

7. Limitations of In-Context Learning

Despite its power, in-context learning has clear limitations:

- Context window constraints: Limited memory of examples

- Sensitivity to prompt phrasing

- Inconsistency in reasoning tasks

- Lack of true long-term learning
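The context window constraint has a direct practical consequence: demonstrations must fit within a fixed budget alongside the query. Below is a minimal sketch of selecting examples under such a budget. It assumes a crude word count in place of a real tokenizer, and the names `count_tokens` and `select_examples`, the sample reviews, and the budget of 16 are hypothetical.

```python
from typing import List, Tuple


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def select_examples(examples: List[Tuple[str, str]],
                    query: str,
                    budget: int) -> List[Tuple[str, str]]:
    """Keep examples, in order, while the running total fits the budget."""
    used = count_tokens(query)
    kept: List[Tuple[str, str]] = []
    for inp, out in examples:
        cost = count_tokens(inp) + count_tokens(out)
        if used + cost > budget:
            break  # the next example would overflow the context budget
        kept.append((inp, out))
        used += cost
    return kept


examples = [
    ("the film was wonderful", "positive"),
    ("I would not watch it again", "negative"),
    ("a solid, well acted drama", "positive"),
]
kept = select_examples(examples, "the ending felt rushed", budget=16)
print(len(kept))
```

A real system would count tokens with the model's own tokenizer and would also reserve room for the instruction and the generated answer.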

Unlike humans, models do not retain knowledge from one interaction to another (unless explicitly designed to do so).

This highlights that in-context learning is not equivalent to human learning; it is a different paradigm.

8. Implications for the Future of AI

The combination of in-context learning and emergent behaviour has profound implications:

- Reduced need for fine-tuning: Models can adapt dynamically without retraining

- New programming paradigm: Prompting replaces traditional coding in some contexts

- Democratization of AI: Non-experts can use AI effectively with good prompts

- Challenges in evaluation: Emergent abilities are hard to measure and predict

- Ethical and safety concerns: Unexpected behaviours can lead to risks

This shift suggests we are moving from training models for tasks to guiding models through interaction.

Conclusion

In-context learning and emergent behaviour represent a fundamental shift in how we understand artificial intelligence. Instead of rigid systems trained for specific tasks, we now have flexible models capable of adapting on the fly and exhibiting capabilities that were never explicitly programmed.

Few-shot learning demonstrates how minimal examples can unlock powerful functionality, while emergent behaviour reveals that intelligence may arise from scale and complexity rather than explicit design. At the same time, the debate between emergence and memorization reminds us to remain cautious – these systems are powerful, but not yet fully understood.

As AI continues to evolve, the key challenge will be to harness these capabilities responsibly while deepening our understanding of what these models are truly doing beneath the surface.