Why AI is not IT and will never be

And why not knowing this is a wasted opportunity

Introduction: The great confusion

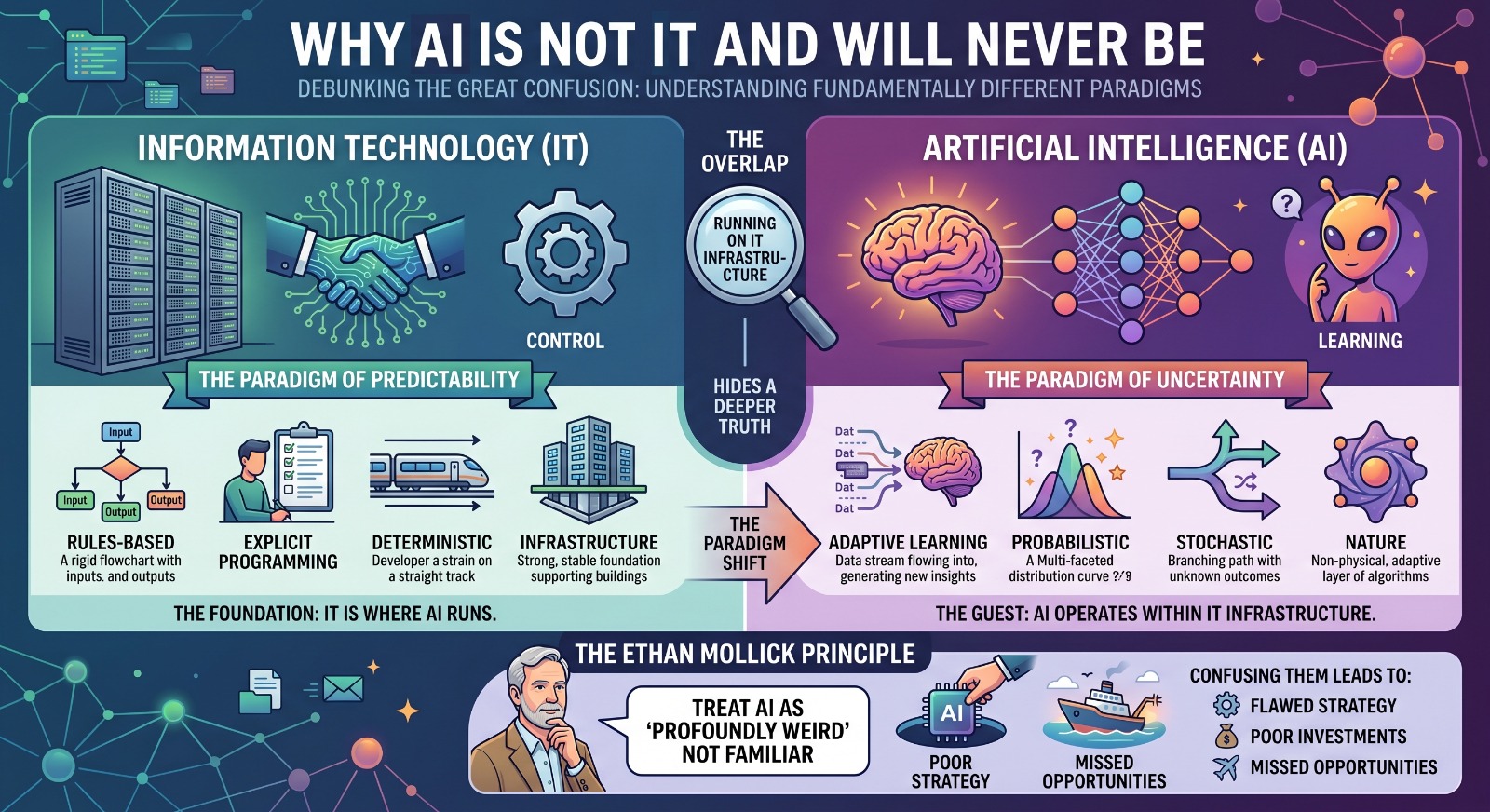

In conversations across boardrooms, universities, and policy circles, Information Technology and Artificial Intelligence are often treated as if they are interchangeable. This confusion is understandable because AI systems run on IT infrastructure. However, this overlap hides a deeper truth.

IT and AI are fundamentally different paradigms. One is built on control and predictability, while the other is built on learning and uncertainty. Confusing them leads not just to conceptual errors but to flawed strategy, poor investments, and missed opportunities.

As Ethan Mollick points out, companies should treat AI as what it is, which he calls “profoundly weird.” The problem begins when organizations try to make it behave like something familiar.

1. What is IT: a system of precision and predictability

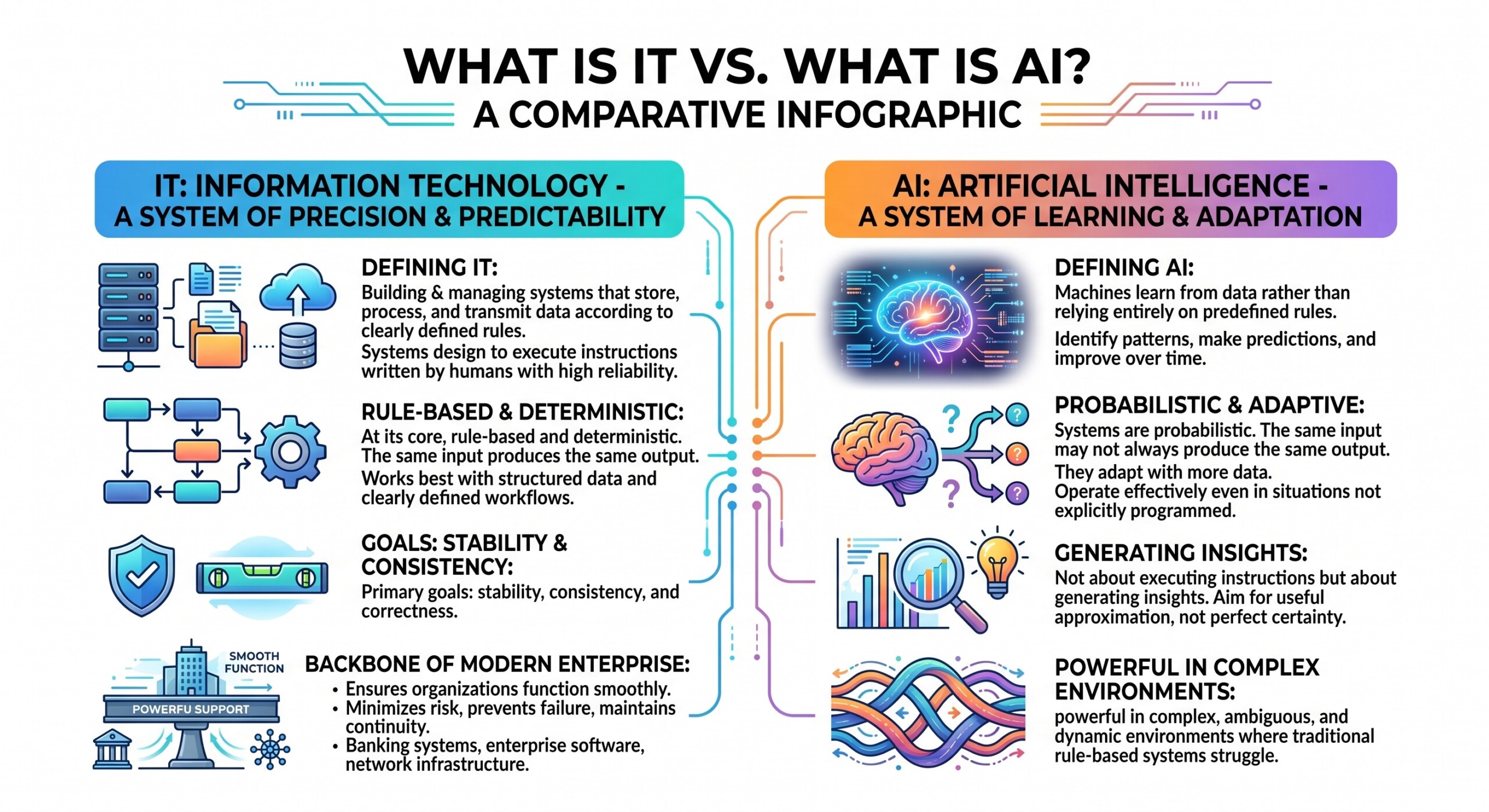

Information Technology is the discipline of building and managing systems that store, process, and transmit data according to clearly defined rules. These systems are designed to execute instructions written by humans with a high degree of reliability.

At its core, IT is rule based and deterministic. The same input produces the same output. It works best with structured data and clearly defined workflows. Its primary goals are stability, consistency, and correctness.

From banking systems to enterprise software and network infrastructure, IT ensures that organizations function smoothly. It minimizes risk, prevents failure, and maintains continuity. It is the backbone that keeps modern enterprises running.

2. What is AI: a system of learning and adaptation

Artificial Intelligence, in contrast, is built on the idea that machines can learn from data rather than rely entirely on predefined rules. AI systems identify patterns, make predictions, and improve over time.

These systems are probabilistic. The same input may not always produce the same output. They adapt as they are exposed to more data and can operate effectively even in situations that were not explicitly programmed.
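The contrast between deterministic and probabilistic behavior can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the interest formula, and the decision labels with their weights are hypothetical and not taken from any real system. A real AI model would compute its probabilities from data instead of hard-coding them.

```python
import random

def it_interest(balance_cents: int, rate_bps: int) -> int:
    """Rule-based IT logic: a fixed formula, so the same input always gives the same output."""
    # rate_bps is the rate in basis points (hypothetical unit for this sketch)
    return balance_cents * rate_bps // 10_000

def ai_style_decision(rng: random.Random) -> str:
    """Probabilistic behavior: the answer is sampled from a distribution over options."""
    options = ["approve", "review", "decline"]   # hypothetical labels
    weights = [0.6, 0.3, 0.1]                    # hypothetical probabilities
    return rng.choices(options, weights=weights, k=1)[0]

if __name__ == "__main__":
    # Deterministic: repeated calls agree exactly.
    print(it_interest(100_000, 250), it_interest(100_000, 250))

    # Probabilistic: repeated calls on the same "input" can disagree.
    rng = random.Random()
    print([ai_style_decision(rng) for _ in range(5)])
```

Running the script several times would show the first line staying identical while the second line varies, which is the behavior the paragraph above describes.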

AI is not about executing instructions but about generating insights. It does not aim for perfect certainty but for useful approximation. This makes it powerful in complex, ambiguous, and dynamic environments where traditional rule-based systems struggle.

3. The fundamental difference: logic versus learning

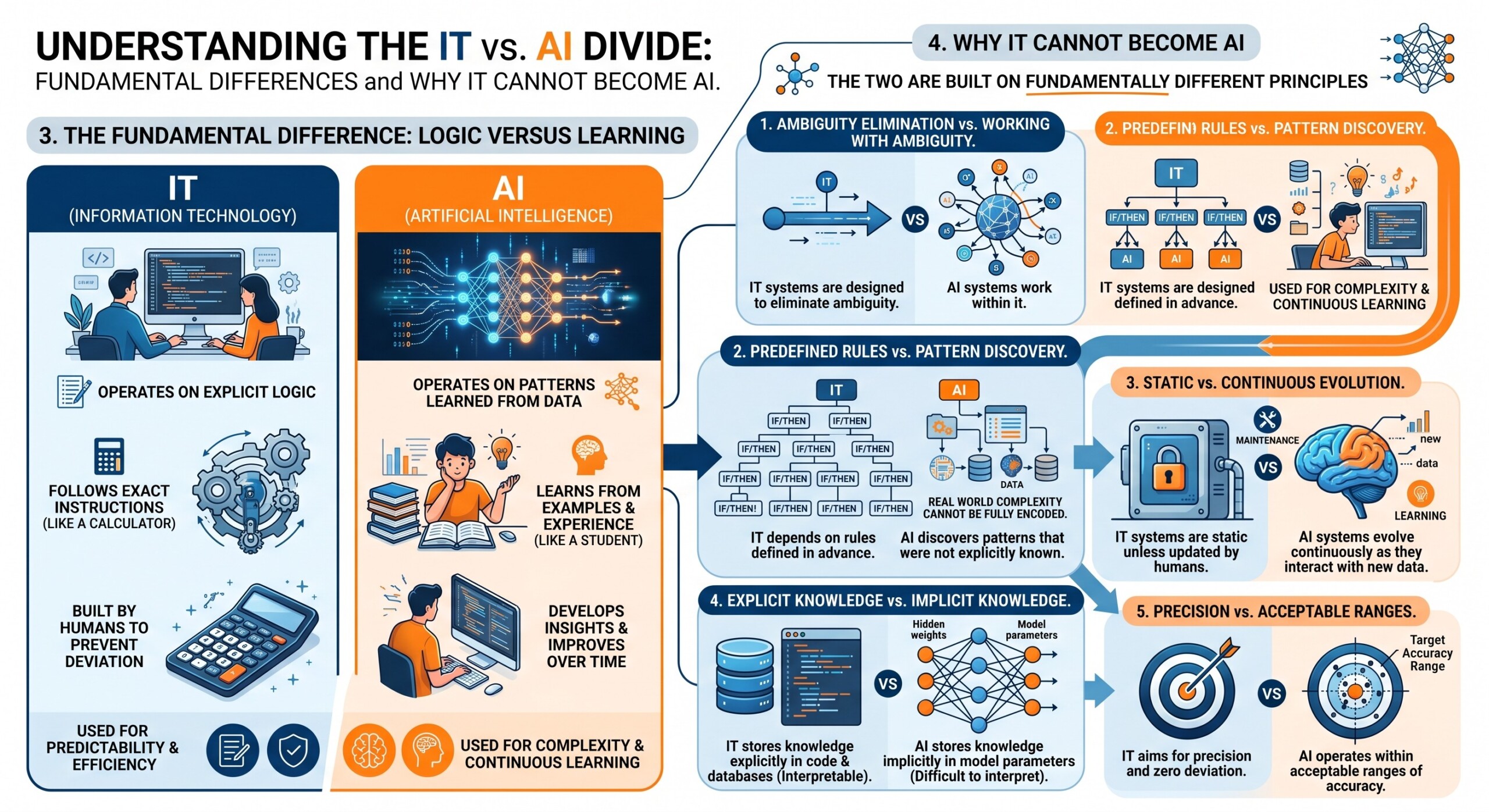

The distinction between IT and AI becomes clear when viewed through their core mechanisms. IT operates on explicit logic written by humans. AI operates on patterns learned from data.

A simple way to understand this is through analogy. IT is like a calculator that follows exact instructions. AI is like a student who learns from examples and improves with experience.
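The calculator-versus-student analogy can also be made concrete with a small sketch. Everything here is an assumption made for illustration: the transaction-flagging scenario, the function names, the hand-picked limit of 1000, and the toy labelled examples. The "learning" step is a deliberately simple one-dimensional threshold search, not a description of how any production AI system is trained.

```python
def rule_flag(amount: float) -> bool:
    """IT-style logic: a human chose the threshold and wrote it into the code."""
    return amount > 1000.0

def learn_threshold(examples: list[tuple[float, bool]]) -> float:
    """AI-style logic: pick the cut-off that misclassifies the fewest labelled examples.

    Needs at least two examples with distinct amounts.
    """
    values = sorted(amount for amount, _ in examples)
    # Candidate cut-offs are the midpoints between neighbouring amounts.
    candidates = [(lo + hi) / 2 for lo, hi in zip(values, values[1:])]

    def errors(threshold: float) -> int:
        return sum((amount > threshold) != label for amount, label in examples)

    return min(candidates, key=errors)

if __name__ == "__main__":
    # Hypothetical labelled history: (amount, was_flagged_by_a_reviewer)
    history = [(120, False), (300, False), (450, False),
               (700, True), (900, True), (1500, True)]
    learned = learn_threshold(history)
    print("hand-written rule flags 700:", rule_flag(700))
    print("learned threshold:", learned, "flags 700:", 700 > learned)
```

The hand-written rule stays the same until someone edits the code, while the learned threshold shifts whenever the examples change. That is the sense in which one system follows instructions and the other learns from experience.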

This difference is not just technical. It shapes how systems behave, how they are built, and how they should be used within organizations.

4. Why IT cannot become AI

The idea that IT can evolve into AI is appealing but incorrect. The two are built on fundamentally different principles.

IT systems are designed to eliminate ambiguity, while AI systems work within it. IT depends on rules defined in advance, while AI discovers patterns that were not explicitly known. Even with millions of rules, an IT system cannot replicate the flexibility of a learning model because real world complexity cannot be fully encoded.

IT systems are also static unless updated by humans. AI systems, by contrast, can change their behavior as they are retrained or updated on new data, without anyone rewriting their logic by hand. This creates a structural difference between maintenance and learning.

Another critical distinction lies in how knowledge is represented. IT stores knowledge explicitly in code and databases. AI stores knowledge implicitly in model parameters, making it difficult to interpret in the same way as traditional systems.

Finally, IT aims for precision and zero deviation, while AI operates within acceptable ranges of accuracy. This difference alone makes it impossible for one to become the other.

5. The risk of forcing AI into IT thinking

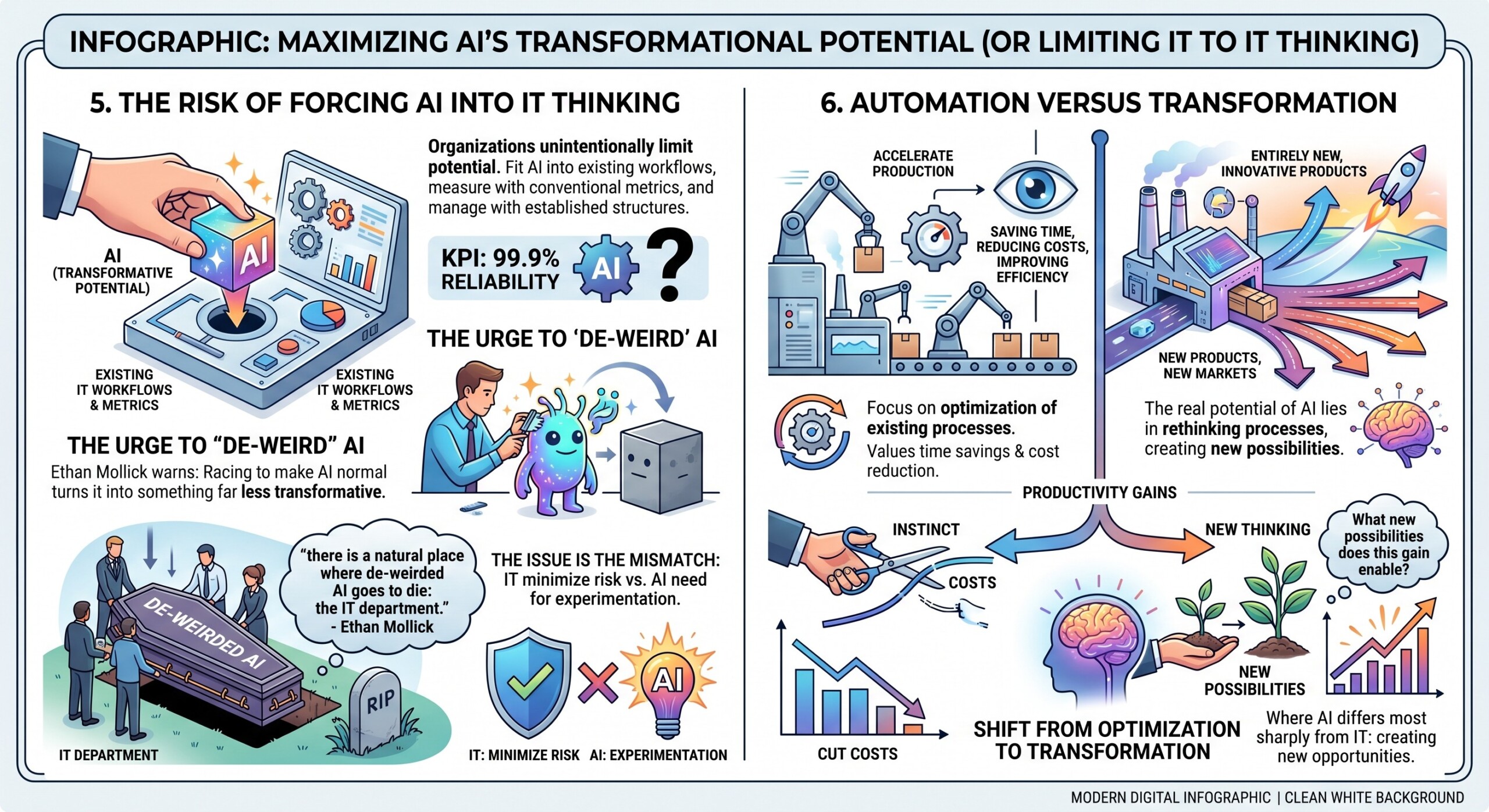

When organizations attempt to treat AI like traditional IT, they unintentionally limit its potential. They try to fit it into existing workflows, measure it using conventional metrics, and manage it through established structures.

This tendency aligns with what Ethan Mollick describes as the urge to “de-weird” AI. He warns that companies are racing to make AI normal and in doing so are turning it into something far less transformative.

He also names where this process tends to end. In his words, “there is a natural place where de-weirded AI goes to die: the IT department.” The issue is not with IT itself but with the mismatch between IT’s mandate to minimize risk and AI’s need for experimentation.

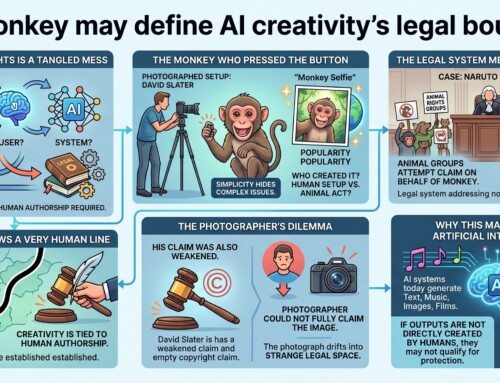

6. Automation versus transformation

One of the most important consequences of this confusion is that organizations reduce AI to a tool for automation. They focus on saving time, reducing costs, and improving efficiency in existing processes.

While these are valuable outcomes, they represent only a small fraction of what AI can achieve. The real potential of AI lies in rethinking processes, creating new products, and opening new markets.

When leaders see productivity gains, the instinct is often to cut costs. However, the more important question is what new possibilities those gains enable. This shift from optimization to transformation is where AI differs most sharply from IT.

7. Why AI still needs IT but is not IT

AI does not exist in isolation. It depends on IT infrastructure for data storage, processing power, networking, and deployment. IT provides the foundation on which AI systems are built and operated.

However, this dependency does not make them the same. IT is the system that enables operations, while AI is the layer that enables intelligence. One provides stability, the other introduces adaptability.

A useful way to think about this relationship is that IT is the body of the organization’s digital systems, while AI acts as a form of cognitive capability layered on top.

8. Organizational implications and hidden challenges

The misunderstanding between IT and AI has practical consequences inside organizations. When AI is treated as just another IT tool, it is often constrained by risk management frameworks and limited experimentation.

Ethan Mollick highlights an additional challenge. When organizations do not create the right incentives, employees may hide their use of AI tools. He notes that this can lead to an “enormous information gap,” where leadership cannot see the real impact AI is already having.

This makes it difficult to build strategy because the organization is effectively blind to its own transformation.

9. The future: coexistence without confusion

The future is not about replacing IT with AI or merging them into a single discipline. It is about understanding their differences and using them together effectively.

IT will continue to ensure reliability, scalability, and security. AI will drive insight, adaptability, and innovation. Their integration will power the next generation of enterprises, but their identities will remain distinct.

The key is to allow AI to operate with the flexibility it requires while relying on IT for the stability it provides.

Conclusion: a necessary separation

The statement that AI is not IT and never will be is not a dismissal of IT. It is a recognition of the unique role each plays. IT is about certainty, structure, and control. AI is about possibility, learning, and exploration. Confusing the two leads organizations to underestimate AI and misuse it.

As Ethan Mollick reminds us, AI is not just another technology but “a profoundly odd, risky and powerful” one. Trying to force it into familiar frameworks limits its impact.

Understanding the difference is the first step toward using both effectively.